When Gary Schildhorn picked up the phone on his way to work in 2020, he heard his son Brett’s panicked voice on the other end of the line.

Or so he thought.

A distraught Brett told Mr. Schildhorn that he had wrecked his car and needed $9,000 to make bail. Brett said he had broken his nose and hit a pregnant woman’s car, and he instructed his father to call the public defender assigned to his case.

Mr. Schildhorn did as he was told, but a brief call with the supposed public defender, who ordered him to send the money through a Bitcoin kiosk, left the worried father uneasy. After a follow-up call with Brett, Mr. Schildhorn realized he had almost fallen victim to what the Federal Trade Commission calls a “family emergency scam.”

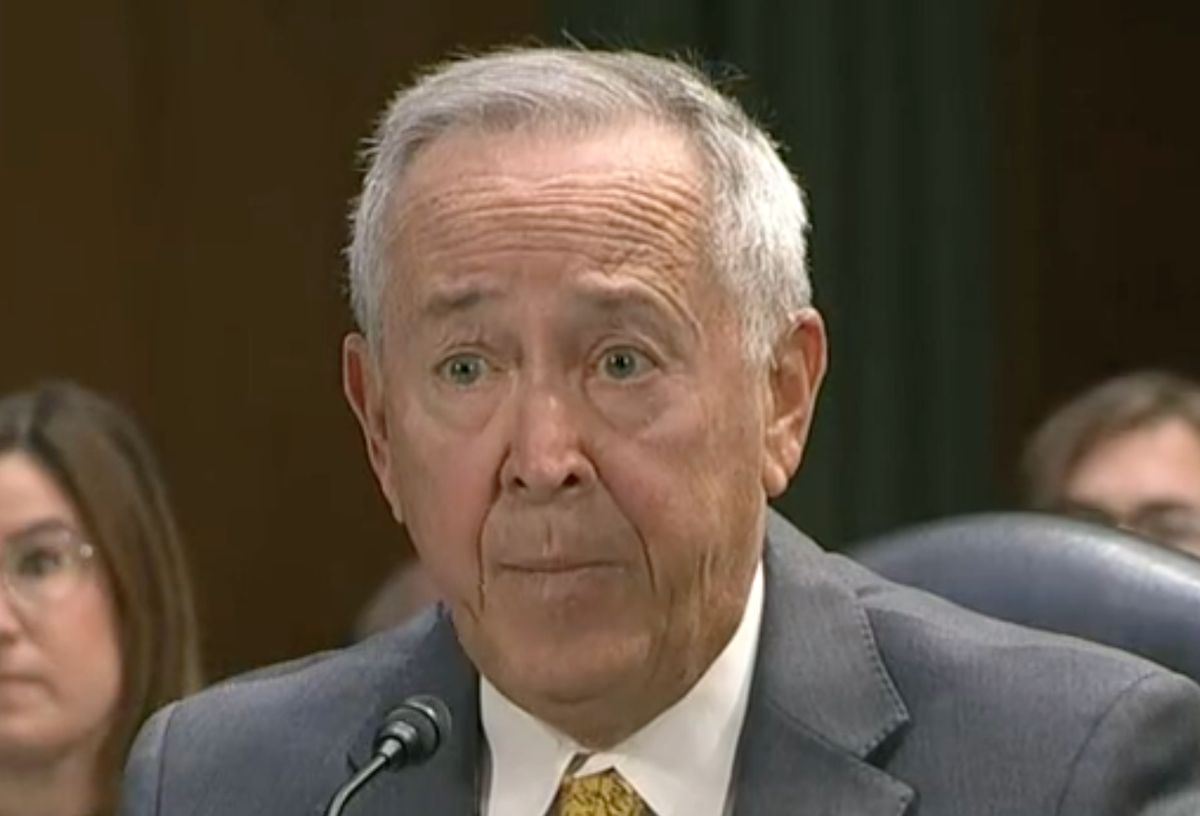

“A FaceTime call from my son, pointing at his nose and saying, ‘My nose is fine, I’m fine, you’re being scammed,’” Mr. Schildhorn, a corporate lawyer in Philadelphia, told Congress during a hearing earlier this month. “I sat there in my car, I was physically affected by it. It was shock, anger and relief.”

The scheme involves fraudsters using artificial intelligence to clone a person’s voice, which they then use to trick loved ones into sending money to cover a supposed emergency. When Mr. Schildhorn contacted his local police department, he was redirected to the FBI, which told him that the agency was aware of this type of fraud but could not get involved unless the money was sent overseas.

The FTC first raised the alarm in March with a simple message: don’t trust the voice. The agency has warned consumers that the era of clumsy, easily identifiable scams is over and that advanced technologies have brought a new set of challenges that officials are still trying to address.

All it takes to reproduce a human voice is a short audio clip of that person speaking, which in some cases is easily found in content posted on social media. An AI voice cloning program then imitates the voice so that it sounds like the person in the original clip.


Some programs need only a three-second audio clip to make the cloned voice say whatever the scammer wants, in a particular emotion or speaking style, according to PC Magazine. There is also a risk that unsuspecting family members who answer the phone and ask questions to verify the caller’s story may themselves be recorded, inadvertently giving the scammers more material.

“Scammers ask you to pay or send money in ways that make it hard to get your money back,” the FTC advisory states. “If the caller says to wire money, send cryptocurrency, or buy gift cards and give them the card numbers and PINs, those could be signs of a scam.”

The rise of AI voice cloning scams has pushed lawmakers to explore ways to regulate the new technology. During a Senate hearing in June, Arizona mother Jennifer DeStefano described her own experience with a voice cloning scam and how terrifying it was.

At the June hearing, Ms. DeStefano recounted hearing what she thought was her 15-year-old daughter crying and sobbing on the phone before a man told her he was going to “pump [the teen’s] stomach full of drugs and send her to Mexico” if the mother called the police. A call to her daughter confirmed she was safe.

“I will never be able to shake that voice and that desperate cry for help out of my head,” Ms. DeStefano said at the time. “There is no limit to the evil that artificial intelligence can bring. If left uncontrolled and unregulated, it will rewrite the concept of what is real and what is not.”


Unfortunately, existing legislation falls short of protecting victims of this type of fraud.

IP expert Michael Teich wrote in an August column for IPWatchdog that laws designed to protect privacy may apply in some cases of voice cloning fraud, but they can be enforced only by the person whose voice was used, not by the victim of the fraud.

Meanwhile, existing copyright laws do not recognize ownership of an individual’s voice.

“This has frustrated me because I’ve been involved in consumer fraud cases and I almost fell for it,” Mr. Schildhorn told Congress. “The only thing I thought I could do was warn people… I’ve gotten calls from people all over the country who… lost money and were devastated. They were hurt emotionally and physically; they almost called just to get a hug.”

The FTC has yet to set requirements for the companies that develop voice cloning programs, but according to Mr. Teich, those companies could face legal consequences in the future if they fail to provide safeguards.

To address the growing number of voice cloning scams, the FTC has announced an open call for submissions, the Voice Cloning Challenge. Entrants are asked to develop solutions that protect consumers from the harms of voice cloning, and the top prize is $25,000.

The agency is asking victims of voice cloning scams to report them on its website.