Email has become a crucial way for people to communicate, but it comes with a big problem: spam. Spam is unsolicited email, such as ads or scams, that fills up our inboxes. Thankfully, we can use machine learning to deal with spam automatically. In this blog post, we’ll learn how machine learning can help us find and block spam emails, using easy-to-understand Python code and popular machine learning tools.

We start by importing the libraries we need: pandas for data handling, matplotlib and seaborn for visualization, and NLTK for text preprocessing tasks such as tokenization, stop-word removal, and stemming. We then load the email dataset from a CSV file; this dataset will be used to train our machine learning models. From the sklearn library we import the model-building tools, including SVC (Support Vector Classifier), Random Forest Classifier, and Multinomial Naive Bayes. These algorithms will help us classify emails as spam or non-spam.

import pandas as pd

import matplotlib.pyplot as plt

import seaborn as sns

# data preprocessing

import nltk

nltk.download('stopwords')

nltk.download('punkt')

nltk.download('punkt_tab')  # tokenizer data required by newer NLTK releases

import string

from nltk.corpus import stopwords

from nltk.stem import PorterStemmer

from nltk.tokenize import sent_tokenize, word_tokenize

import re

from collections import Counter

from wordcloud import WordCloud

from sklearn.preprocessing import LabelEncoder

# Model Building

from sklearn.feature_extraction.text import TfidfVectorizer

from sklearn.model_selection import train_test_split

from sklearn.metrics import accuracy_score,confusion_matrix,precision_score

from sklearn.svm import SVC

from sklearn.ensemble import RandomForestClassifier

from sklearn.naive_bayes import MultinomialNB

Importing the Dataset

data = pd.read_csv("spam.csv",encoding='latin1')

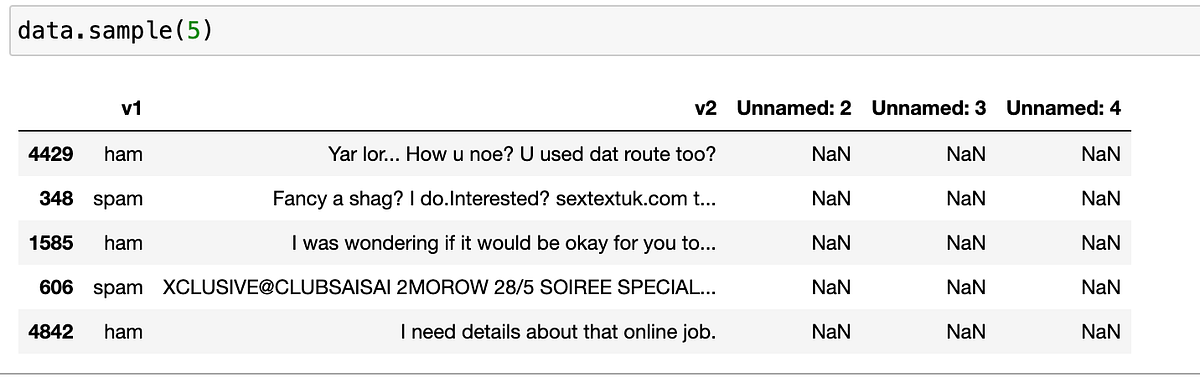

data.sample(5)

data.sample(5) randomly picks 5 emails from the dataset to show us. Each row has two parts: a label (“ham” for regular emails, “spam” for unwanted ones) and the content of the email. The dataset also has some extra columns, named “Unnamed: 2” through “Unnamed: 4”, that contain missing values and carry no useful information. Looking at this sample gives us an idea of what kinds of emails are in the dataset and how they are organized.

Initial Exploration And Data Cleaning
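As a minimal sketch of this cleanup, the snippet below drops every column whose name starts with "Unnamed" instead of listing each one by hand. The toy frame and its values are made up for illustration; only the column names v1, v2, and "Unnamed: N" follow the dataset described here.

```python
import pandas as pd

# Toy frame mimicking the layout described above (values are made up).
raw = pd.DataFrame({
    "v1": ["ham", "spam"],
    "v2": ["See you at lunch", "WIN a FREE prize now"],
    "Unnamed: 2": [None, None],
    "Unnamed: 3": [None, None],
})

# Boolean mask: True for columns whose name does NOT start with "Unnamed".
keep = ~raw.columns.str.startswith("Unnamed")

# Keep the useful columns and give them descriptive names.
clean = raw.loc[:, keep].rename(columns={"v1": "result", "v2": "emails"})

print(list(clean.columns))
```

Selecting by name prefix keeps working if the CSV happens to have more or fewer empty columns.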

data.shape

data.drop(columns=["Unnamed: 2", "Unnamed: 3", "Unnamed: 4"], inplace=True)

data.rename(columns={'v1': 'result', 'v2': 'emails'}, inplace=True)

data

data.isnull().sum()

data.duplicated().sum()

data = data.drop_duplicates(keep='first')

data.shape

data.head(5)

In our Exploratory Data Analysis (EDA), we dig into the dataset to understand how spam and regular (ham) emails differ. We look at how many emails fall into each class using a pie chart. We also compare the average length, word count, and sentence count of spam and ham emails. Finally, we use a heatmap of the correlation matrix to see how these features relate to each other. This helps us spot patterns in the data before we start modeling.

1- Distribution of Labels

data['result'].value_counts()

# Plotting

plt.figure(figsize=(8, 6))

plt.pie(data['result'].value_counts(), labels=data['result'].value_counts().index, autopct='%1.1f%%', startangle=140)

plt.title('Distribution of Spam and Non-Spam Emails')

plt.axis('equal')

plt.show()

2- Average Length of Emails for Spam and Ham

data['Length'] = data['emails'].apply(len)

data['num_words'] = data['emails'].apply(word_tokenize).apply(len)

data['num_sentence'] = data['emails'].apply(sent_tokenize).apply(len)

data.head(2)

avg_length_spam = data[data['result'] == 'spam']['Length'].mean()

avg_length_ham = data[data['result'] == 'ham']['Length'].mean()

print("Average Length of Spam Emails:", avg_length_spam)

print("Average Length of Ham Emails:", avg_length_ham)

# Plotting

plt.bar(['Spam', 'Ham'], [avg_length_spam, avg_length_ham], color=['Blue', 'green'])

plt.title('Average Length of Emails for Spam and Ham')

plt.xlabel('Email Type')

plt.ylabel('Average Length')

plt.show()

3- Average Word Count of Emails for Spam and Ham

avg_word_spam = data[data['result'] == 'spam']['num_words'].mean()

avg_word_ham = data[data['result'] == 'ham']['num_words'].mean()

print("Average Words of Spam Emails:", avg_word_spam)

print("Average Words of Ham Emails:", avg_word_ham)

# Plotting the graph

plt.bar(['Spam', 'Ham'], [avg_word_spam, avg_word_ham], color=['Blue', 'orange'])

plt.title('Average Words of Emails for Spam and Ham')

plt.xlabel('Email Type')

plt.ylabel('Average Words')

plt.show()

4- Average Sentence Count of Emails for Spam and Ham

avg_sentence_spam = data[data['result'] == 'spam']['num_sentence'].mean()

avg_sentence_ham = data[data['result'] == 'ham']['num_sentence'].mean()

print("Average Sentence of Spam Emails:", avg_sentence_spam)

print("Average Sentence of Ham Emails:", avg_sentence_ham)

# Plotting the graph

plt.bar(['Spam', 'Ham'], [avg_sentence_spam, avg_sentence_ham], color=['Blue', 'black'])

plt.title('Average Sentence of Emails for Spam and Ham')

plt.xlabel('Email Type')

plt.ylabel('Average Sentence')

plt.show()
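The per-class averages computed one at a time above can also be obtained in a single groupby call. This is a minimal sketch on made-up messages; to stay self-contained it counts words by splitting on whitespace rather than with NLTK's word_tokenize, so the counts will differ slightly from the ones in this post.

```python
import pandas as pd

# Made-up messages, for illustration only.
toy = pd.DataFrame({
    "result": ["ham", "ham", "spam", "spam"],
    "emails": [
        "See you at lunch",
        "Ok",
        "WIN a FREE prize now!!! Call 0800 123 456",
        "Free entry. Text WIN to 80082",
    ],
})

# Character length and whitespace-separated word count per message.
toy["Length"] = toy["emails"].str.len()
toy["num_words"] = toy["emails"].str.split().str.len()

# One row per class, one column per feature.
summary = toy.groupby("result")[["Length", "num_words"]].mean()
print(summary)
```

The resulting table has one row for ham and one for spam, which makes the comparison easier to read than separate print statements.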

5- Relationship between Length and Spam

correlation = data['Length'].corr((data['result'] == 'spam').astype(int))

print("Correlation coefficient between email length and spam classification:", correlation)

sns.violinplot(data=data, x='Length', y='result', hue='result')

plt.xlabel('Email Length')

plt.ylabel('Spam Classification')

plt.title('Relationship between Email Length and Spam Classification')

plt.show()

6- Relationship between Features

correlation_matrix = data[['Length', 'num_words', 'num_sentence']].corr()

print("Correlation between features:\n", correlation_matrix)

# Visualize the correlation matrix using a heatmap

plt.figure(figsize=(8, 6))

sns.heatmap(correlation_matrix, annot=True, cmap='coolwarm', fmt=".2f", linewidths=.5)

plt.title('Correlation Matrix of Features')

plt.xlabel('Features')

plt.ylabel('Features')

plt.show()

Before building machine learning models, we preprocess the email data to convert it into a suitable format for analysis. This involves tasks such as lowercasing, tokenization, removing special characters, stopwords, and punctuation, as well as stemming to reduce words to their root forms.

data['transform_text'] = data['emails'].str.lower()

# Tokenization

data['transform_text'] = data['transform_text'].apply(word_tokenize)

# Removing special characters

data['transform_text'] = data['transform_text'].apply(lambda x: [re.sub(r'[^a-zA-Z0-9\s]', '', word) for word in x])

# Removing stop words and punctuation

stop_words = set(stopwords.words('english'))

data['transform_text'] = data['transform_text'].apply(lambda x: [word for word in x if word not in stop_words and word not in string.punctuation])

# Stemming

ps = PorterStemmer()

data['transform_text'] = data['transform_text'].apply(lambda x: [ps.stem(word) for word in x])

# Convert the preprocessed text back to string

data['transform_text'] = data['transform_text'].apply(lambda x: ' '.join(x))

# Display the preprocessed data

print(data[['emails', 'transform_text']].head())

In the data preprocessing step, we’re getting our email data ready for analysis. First, we convert all text to lowercase so that uppercase and lowercase letters don’t cause confusion. Then, we break down each email into smaller parts called tokens using a process called tokenization. After that, we remove any special characters like symbols or emojis that don’t add useful information. Next, we take out common words like “the” or “and,” as well as punctuation marks, because they’re not helpful for identifying spam. Finally, we reduce words to their base form using a process called stemming, which helps in simplifying the data for analysis.
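The steps above can be sketched as one reusable function that works on a single string. This is an illustration under stated assumptions, not the code used in this post: it tokenizes with a regular expression instead of NLTK's word_tokenize, uses a tiny hypothetical stop-word set in place of NLTK's English list, and takes the stemmer as an optional callable (for example PorterStemmer().stem).

```python
import re

# Tiny illustrative stop-word set (an assumption); the post uses NLTK's list.
STOP_WORDS = {"a", "an", "the", "and", "or", "you", "have", "is", "to", "of", "in"}


def preprocess(text, stop_words=STOP_WORDS, stem=None):
    """Lowercase, tokenize, drop stop words, optionally stem, and re-join."""
    # Lowercase, then keep runs of letters and digits. This tokenizes and
    # removes punctuation and other special characters in one step.
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    # Drop common words that carry little signal.
    tokens = [t for t in tokens if t not in stop_words]
    # Apply the stemmer only if the caller supplied one.
    if stem is not None:
        tokens = [stem(t) for t in tokens]
    return " ".join(tokens)


print(preprocess("Congratulations!!! You have WON a FREE prize."))
# prints: congratulations won free prize
```

Wrapping the steps in a function makes it possible to apply exactly the same cleaning to new emails at prediction time as to the training data.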

7- Most Common Words in Spam Emails

spam_emails = data[data['result'] == 'spam']['transform_text']

# Tokenize the text in spam emails

spam_words = ' '.join(spam_emails).split()

# Count occurrences of each word

word_counts = Counter(spam_words)

# Find the most common words

most_common_words = word_counts.most_common(10)

print("Top 10 Most Common Words in Spam Emails:")

for word, count in most_common_words:

    print(f"{word}: {count} occurrences")

# Generate Word Cloud

wordcloud = WordCloud(width=800, height=400, background_color='white').generate_from_frequencies(dict(most_common_words))

# Plot Word Cloud

plt.figure(figsize=(12, 6))

plt.subplot(1, 2, 1)

plt.imshow(wordcloud, interpolation='bilinear')

plt.title('Word Cloud for Most Common Words in Spam Emails')

plt.axis('off')

# Plot Bar Graph

plt.subplot(1, 2, 2)

words, counts = zip(*most_common_words)

plt.bar(words, counts, color='orange')

plt.title('Bar Graph for Most Common Words in Spam Emails')

plt.xlabel('Words')

plt.ylabel('Frequency')

plt.xticks(rotation=45, ha='right')

plt.tight_layout()

plt.show()

8- Most Common Words in Ham Emails

ham_emails = data[data['result'] == 'ham']['transform_text']

# Tokenize the text in ham emails

ham_words = ' '.join(ham_emails).split()

# Count occurrences of each word

word_counts = Counter(ham_words)

# Find the most common words

most_common_words = word_counts.most_common(10)

print("Top 10 Most Common Words in ham Emails:")

for word, count in most_common_words:

    print(f"{word}: {count} occurrences")

# Generate Word Cloud

wordcloud = WordCloud(width=800, height=400, background_color='white').generate_from_frequencies(dict(most_common_words))

# Plot Word Cloud

plt.figure(figsize=(12, 6))

plt.subplot(1, 2, 1)

plt.imshow(wordcloud, interpolation='bilinear')

plt.title('Word Cloud for Most Common Words in ham Emails')

plt.axis('off')

# Plot Bar Graph

plt.subplot(1, 2, 2)

words, counts = zip(*most_common_words)

plt.bar(words, counts, color='orange')

plt.title('Bar Graph for Most Common Words in ham Emails')

plt.xlabel('Words')

plt.ylabel('Frequency')

plt.xticks(rotation=45, ha='right')

plt.tight_layout()

plt.show()

STEP 5- Preparing Data for Machine Learning: Label Encoding and Vectorization

encoder = LabelEncoder()

data['result'] = encoder.fit_transform(data['result'])

data.sample(2)

# Vectorization and data splitting

tfidf = TfidfVectorizer(max_features=3000)

X = tfidf.fit_transform(data['emails']).toarray()

y = data['result']

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

First, we use a LabelEncoder to convert the ‘result’ column, which contains the labels ‘spam’ and ‘ham’, into numerical values. LabelEncoder assigns integers in alphabetical order, so ‘ham’ becomes 0 and ‘spam’ becomes 1. Next, we use TfidfVectorizer to convert the email text into numerical vectors, keeping at most 3,000 terms (max_features=3000). Note that the vectorizer is fit on the raw ‘emails’ column and relies on its own lowercasing and tokenization, so the stemmed ‘transform_text’ column is not used for training here. Finally, we split the data into training and testing sets using train_test_split: 80% of the data is used for training (X_train and y_train) and 20% for testing (X_test and y_test), which lets us evaluate the model on emails it was not trained on.
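As a variation on the code above, the sketch below splits the raw text first and fits the vectorizer on the training portion only, so that the vocabulary and IDF weights are not influenced by the test emails. The messages are made up for illustration, and stratify=y is an added assumption that keeps the spam/ham ratio the same in both splits.

```python
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import LabelEncoder

# Made-up messages, for illustration only.
texts = [
    "Win a free prize now", "Free entry in a weekly draw",
    "Claim your cash reward today", "Urgent: your account won a bonus",
    "Call now for a free ringtone",
    "Are we still meeting for lunch", "I will call you later tonight",
    "Can you send me the report", "See you at the gym tomorrow",
    "Thanks for your help yesterday",
]
labels = ["spam"] * 5 + ["ham"] * 5

# LabelEncoder sorts class names, so "ham" maps to 0 and "spam" to 1.
encoder = LabelEncoder()
y = encoder.fit_transform(labels)

# Split the raw text before any fitting happens.
texts_train, texts_test, y_train, y_test = train_test_split(
    texts, y, test_size=0.2, random_state=42, stratify=y
)

# Learn vocabulary and IDF weights from the training emails only,
# then reuse them unchanged on the test emails.
tfidf = TfidfVectorizer(max_features=3000)
X_train = tfidf.fit_transform(texts_train)
X_test = tfidf.transform(texts_test)

print(X_train.shape, X_test.shape)
```

Both matrices have the same number of columns because the test emails are projected onto the vocabulary learned from the training set.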

STEP 6- Model Building

Our target variable is categorical (spam or ham), so this is a classification problem. We try three machine learning models: Support Vector Machines (SVM), Random Forest, and Naive Bayes. Each model learns from the TF-IDF features of the training emails to distinguish spam from legitimate messages. We evaluate each model using accuracy and precision, and compare the results to pick the most effective model for identifying spam emails.
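To make the two metrics concrete, here is a small worked example in plain Python that computes them from hypothetical labels (1 = spam, 0 = ham). The function names and the label lists are made up for illustration; sklearn's accuracy_score and precision_score follow the same definitions.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Return (tn, fp, fn, tp) for a binary problem."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)
    return tn, fp, fn, tp


def accuracy(y_true, y_pred):
    """Share of all emails that were labeled correctly."""
    tn, fp, fn, tp = confusion_counts(y_true, y_pred)
    return (tp + tn) / (tn + fp + fn + tp)


def precision(y_true, y_pred):
    """Share of emails flagged as spam that really are spam."""
    tn, fp, fn, tp = confusion_counts(y_true, y_pred)
    return tp / (tp + fp) if (tp + fp) else 0.0


# Hypothetical ground truth and predictions for ten emails.
y_true = [0, 0, 0, 0, 0, 0, 1, 1, 1, 1]
y_pred = [0, 0, 0, 0, 0, 1, 1, 1, 1, 0]

print(confusion_counts(y_true, y_pred))  # (5, 1, 1, 3)
print(accuracy(y_true, y_pred))          # 0.8
print(precision(y_true, y_pred))         # 0.75
```

Precision matters for a spam filter because every false positive is a legitimate email that ends up flagged as spam.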

Model 1- SVC

svc_classifier = SVC()

svc_classifier.fit(X_train, y_train)

y_pred_svc = svc_classifier.predict(X_test)

accuracy_svc = accuracy_score(y_test, y_pred_svc)

print(f"SVM Accuracy: {accuracy_svc:.2f}")

print("confusion Matrix :",confusion_matrix(y_test,y_pred_svc))

print("Precision Score: ",precision_score(y_test,y_pred_svc))

Model 2- Random Forest classifier

rf_classifier = RandomForestClassifier()

rf_classifier.fit(X_train, y_train)

y_pred_rf = rf_classifier.predict(X_test)

accuracy_rf = accuracy_score(y_test, y_pred_rf)

print(f"Random Forest Accuracy: {accuracy_rf:.2f}")

print("confusion Matrix :",confusion_matrix(y_test,y_pred_rf))

print("Precision Score: ",precision_score(y_test,y_pred_rf))

Model 3- Naive Bayes classifier

nb_classifier = MultinomialNB()

nb_classifier.fit(X_train, y_train)

y_pred_nb = nb_classifier.predict(X_test)

accuracy_nb = accuracy_score(y_test, y_pred_nb)

print(f"Naive Bayes Accuracy: {accuracy_nb:.2f}")

print("confusion Matrix :",confusion_matrix(y_test,y_pred_nb))

print("Precision Score: ",precision_score(y_test,y_pred_nb))

Choosing the Best Classifier for Email Spam Detection

# Calculate precision scores for each classifier

precision_svc = precision_score(y_test, y_pred_svc)

precision_rf = precision_score(y_test, y_pred_rf)

precision_nb = precision_score(y_test, y_pred_nb)

# Create lists to store accuracies and precision scores

classifiers = ['SVC', 'Random Forest', 'Naive Bayes']

accuracies = [accuracy_svc, accuracy_rf, accuracy_nb]

precision_scores = [precision_svc, precision_rf, precision_nb]

# Plot bar graph for accuracies and precision scores side by side

fig, axes = plt.subplots(1, 2, figsize=(15, 5))

# Plot bar graph for accuracies

axes[0].bar(classifiers, accuracies, color='skyblue')

axes[0].set_xlabel('Classifier')

axes[0].set_ylabel('Accuracy')

axes[0].set_title('Accuracy Comparison of Different Classifiers')

axes[0].set_ylim(0, 1)

# Plot bar graph for precision scores

axes[1].bar(classifiers, precision_scores, color='lightgreen')

axes[1].set_xlabel('Classifier')

axes[1].set_ylabel('Precision Score')

axes[1].set_title('Precision Score Comparison of Different Classifiers')

axes[1].set_ylim(0, 1)

plt.tight_layout()

plt.show()

STEP 7- Model Prediction

Finally, we demonstrate how our trained SVC model can be used to predict whether new emails are spam or ham. We provide examples of predicting spam or ham status for sample email texts, showcasing the model’s ability to classify emails in real-world scenarios.

Type 1- Predict with new data

new_emails = [

    "Get a free iPhone now!",

    "Hey, how's it going?",

    "Congratulations! You've won a prize!",

    "Reminder: Meeting at 2 PM tomorrow."

]

# Convert new data into numerical vectors using the fitted TF-IDF vectorizer

new_X = tfidf.transform(new_emails)

new_X_dense = new_X.toarray()

# Use the trained SVM model to make predictions

svm_predictions = svc_classifier.predict(new_X_dense)

# Print the predictions

for email, prediction in zip(new_emails, svm_predictions):

    # LabelEncoder mapped 'ham' to 0 and 'spam' to 1
    if prediction == 1:

        print(f"'{email}' is predicted as spam.")

    else:

        print(f"'{email}' is predicted as ham.")

Type 2- User Input Data Prediction

def predict_email(email):

    # Convert email into numerical vector using the trained TF-IDF vectorizer

    email_vector = tfidf.transform([email])

    # Convert sparse matrix to dense array

    email_vector_dense = email_vector.toarray()

    # Use the trained SVM model to make predictions

    prediction = svc_classifier.predict(email_vector_dense)

    # Print the prediction

    if prediction[0] == 1:

        print("The email is predicted as spam.")

    else:

        print("The email is predicted as ham.")

# Get user input for email

user_email = input("Enter the email text: ")

# Predict whether the input email is spam or ham

predict_email(user_email)
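As a variation on the helper above, the vectorizer and the classifier can be bundled into a single sklearn Pipeline so that raw text goes in and a label comes out. This is a sketch on made-up training messages; the names spam_filter and classify_email are hypothetical, and the pipeline is trained on string labels directly rather than on encoded integers.

```python
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.pipeline import make_pipeline
from sklearn.svm import SVC

# Made-up training messages, for illustration only.
train_texts = [
    "Win a free prize now", "Free entry in a weekly draw",
    "Claim your cash reward today", "Call now for a free ringtone",
    "Are we still meeting for lunch", "I will call you later tonight",
    "Can you send me the report", "See you at the gym tomorrow",
]
train_labels = ["spam"] * 4 + ["ham"] * 4

# The pipeline vectorizes the text and feeds the result to the SVM.
spam_filter = make_pipeline(TfidfVectorizer(), SVC())
spam_filter.fit(train_texts, train_labels)


def classify_email(text, model=spam_filter):
    """Return the predicted label ("spam" or "ham") for one email text."""
    return str(model.predict([text])[0])


print(classify_email("Claim your free prize now"))
```

Returning the label instead of printing it makes the function easier to reuse, for example to filter a list of messages.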

The predict_email function takes an email as input, converts it into a numerical vector using the fitted TF-IDF vectorizer, and predicts whether it is spam or ham using the trained SVM model. For instance, an email claiming you’ve won a prize will typically be classified as spam, although the outcome depends on what the model learned from the training data. By analyzing the email’s content and comparing it to patterns learned during training, the function helps keep the inbox clean and makes email management more efficient.

Email spam detection using machine learning offers a practical solution to the annoying issue of unwanted messages. By cleaning up and organizing the data, creating useful features, and training and comparing models, we can build effective filters that keep clutter out of our inboxes. Since email is so important for communication, good spam filters are crucial, and with continued development we can keep improving these systems.