In the rapidly evolving generative AI (GenAI) landscape, data scientists and AI builders are constantly looking for powerful tools to build innovative applications using large language models (LLMs). DataRobot has introduced a range of advanced LLM evaluation, testing, and assessment metrics in its Playground, offering unique features that set it apart from other platforms.

These metrics, including faithfulness, correctness, references, Rouge-1, cost, and latency, provide a comprehensive and standardized approach to validating the quality and performance of GenAI applications. By leveraging these metrics, AI customers and builders can deploy reliable, efficient, and high-value GenAI solutions with increased confidence, accelerating time to market and gaining a competitive advantage. In this blog post, we’ll take a deep dive into these metrics and explore how they can help you unlock the full potential of LLMs on the DataRobot platform.

Investigating overall assessment metrics

DataRobot’s Playground offers a comprehensive set of evaluation metrics that allow users to benchmark, compare, and rank their retrieval-augmented generation (RAG) experiments. These metrics include:

- Faithfulness: This metric evaluates how accurately the responses generated by the LLM reflect the data retrieved from the vector database, ensuring the reliability of the information.

- Correctness: By comparing the generated responses to the ground truth, the correctness metric assesses the accuracy of the LLM outputs. This is especially valuable for applications where accuracy is critical, such as in the healthcare, financial or legal fields, enabling customers to trust the information provided by the GenAI application.

- References: This metric tracks the documents the LLM retrieves when it queries the vector database, providing insight into the sources used to generate the responses. It helps users ensure that their application draws on the most appropriate sources, enhancing the relevance and credibility of the generated content. The Playground’s guard models can help verify the quality and relevance of the references used by LLMs.

- Rouge-1: The Rouge-1 metric calculates the unigram (single-word) overlap between the generated response and the documents retrieved from the vector database, allowing users to assess the relevance of the generated content.

- Cost and latency: We also provide metrics to track the cost and latency associated with running the LLM, enabling users to optimize their experiments for efficiency and cost effectiveness. These metrics help organizations strike the right balance between performance and budget constraints, ensuring the feasibility of deploying GenAI applications at scale.

- Guard models: Our platform allows users to apply guard models from the DataRobot Registry, or their own custom models, to evaluate LLM responses. Models such as toxicity and PII detectors can be added to the Playground to evaluate each LLM output. This allows for easy testing of guard models on LLM responses prior to deployment in production.
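To make the Rouge-1 idea concrete, here is a minimal sketch of unigram overlap in plain Python. It is not DataRobot’s implementation: the tokenizer, the function names, and the example strings are assumptions chosen for illustration, and a production scorer may tokenize or normalize text differently.

```python
from collections import Counter
import re


def tokenize(text):
    """Lowercase and split into word tokens (a simple, assumed tokenizer)."""
    return re.findall(r"[a-z0-9]+", text.lower())


def rouge_1(candidate, reference):
    """Return unigram-overlap precision, recall, and F1 between two texts."""
    cand_counts = Counter(tokenize(candidate))
    ref_counts = Counter(tokenize(reference))
    # Clipped overlap: a word counts only as often as it appears in both texts.
    overlap = sum((cand_counts & ref_counts).values())
    cand_total = sum(cand_counts.values())
    ref_total = sum(ref_counts.values())
    precision = overlap / cand_total if cand_total else 0.0
    recall = overlap / ref_total if ref_total else 0.0
    f1 = 2 * precision * recall / (precision + recall) if overlap else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


# Hypothetical generated response and retrieved passage.
response = "The warranty covers parts for two years"
retrieved = "The warranty covers parts and labor for two years"
scores = rouge_1(response, retrieved)
print(scores)
```

In this example every word of the response appears in the retrieved passage, so precision is 1.0, while recall is lower because the passage contains words the response omits.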

Effective Experimentation

DataRobot’s Playground enables AI customers and builders to freely experiment with different LLMs, chunking strategies, embedding methods, and prompting methods. Evaluation metrics play a critical role in helping users navigate this experimentation process effectively. By providing a standardized set of evaluation metrics, DataRobot allows users to easily compare the performance of different LLM configurations and experiments. This allows AI customers and builders to make data-driven decisions when choosing the best approach for their specific use case, saving time and resources in the process.

For example, by experimenting with different chunking strategies or embedding methods, users have been able to significantly improve the accuracy and relevance of their GenAI applications in real-world scenarios. This level of experimentation is crucial to developing high-performance GenAI solutions tailored to specific industry requirements.
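As a rough illustration of comparing configurations on a shared set of metrics, the sketch below filters experiments by a latency budget and then ranks them by correctness, using cost as a tie-breaker. The `ExperimentResult` class, the ranking rule, and all of the numbers are hypothetical placeholders, not Playground output or a DataRobot API.

```python
from dataclasses import dataclass


@dataclass
class ExperimentResult:
    name: str            # hypothetical label for an LLM + chunking + embedding setup
    correctness: float   # higher is better, assumed to be on a 0-1 scale
    latency_s: float     # average seconds per response
    cost_usd: float      # average cost per response


def rank_experiments(results, max_latency_s):
    """Drop configurations over the latency budget, then sort by
    correctness (descending) with cost (ascending) as a tie-breaker."""
    eligible = [r for r in results if r.latency_s <= max_latency_s]
    return sorted(eligible, key=lambda r: (-r.correctness, r.cost_usd))


# Placeholder numbers for illustration only, not measured results.
results = [
    ExperimentResult("config-a", correctness=0.82, latency_s=1.4, cost_usd=0.004),
    ExperimentResult("config-b", correctness=0.82, latency_s=0.9, cost_usd=0.002),
    ExperimentResult("config-c", correctness=0.90, latency_s=3.5, cost_usd=0.010),
]
ranked = rank_experiments(results, max_latency_s=2.0)
print([r.name for r in ranked])
```

The point of the design is that a hard constraint (latency) is applied before the quality ranking, so the most accurate configuration is not chosen if it cannot meet the budget. Other teams may prefer a weighted score instead.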

Optimization and user feedback

Evaluation metrics in the Playground act as a valuable tool for evaluating the performance of GenAI applications. By analyzing metrics like Rouge-1 or references, AI customers and developers can identify areas where their models can be improved, such as enhancing the relevance of the generated responses or ensuring that the application draws on the most appropriate sources from the vector database. These metrics provide a quantitative approach to evaluating the quality of generated responses.

In addition to evaluation metrics, DataRobot’s Playground allows users to provide instant feedback on generated responses through thumbs up/down ratings. This user feedback is the primary method for creating a fine-tuning dataset. Users can review answers generated by the LLM and vote on their quality and relevance. The upvoted responses are then used to create a dataset to fine-tune the GenAI app, allowing it to learn from the user’s preferences and generate more accurate and relevant responses in the future. This means that users can collect as much feedback as they need to create a comprehensive fine-tuning dataset that reflects real user preferences and requirements.

By combining evaluation metrics and user feedback, AI customers and builders can make data-driven decisions to optimize their GenAI applications. They can use the metrics to identify high-performing responses and include them in the fine-tuning dataset, ensuring the model learns from the best examples. This iterative process of evaluation, feedback, and refinement enables organizations to continuously improve GenAI applications and deliver high-quality, user-centric experiences.
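The idea of turning upvoted responses into fine-tuning data can be sketched as follows. This is an assumption-laden example rather than the Playground’s export format: the feedback log structure, the field names (`rating`, `score`, `prompt`, `response`), and the prompt/completion JSONL layout are all hypothetical.

```python
import json


def build_finetuning_records(feedback_log, min_score=None):
    """Keep thumbs-up responses; optionally require a minimum metric score."""
    records = []
    for entry in feedback_log:
        if entry["rating"] != "up":
            continue  # skip downvoted responses
        if min_score is not None and entry.get("score", 0.0) < min_score:
            continue  # skip upvoted responses that score too low on the metric
        records.append({"prompt": entry["prompt"], "completion": entry["response"]})
    return records


def to_jsonl(records):
    """Serialize records as one JSON object per line."""
    return "\n".join(json.dumps(r) for r in records)


# Hypothetical feedback entries; "score" stands in for any evaluation metric.
feedback_log = [
    {"prompt": "What is the return window?", "response": "30 days.", "rating": "up", "score": 0.9},
    {"prompt": "Who pays for shipping?", "response": "Not sure.", "rating": "down", "score": 0.2},
    {"prompt": "Is there a warranty?", "response": "Yes, two years.", "rating": "up", "score": 0.4},
]
records = build_finetuning_records(feedback_log, min_score=0.5)
print(to_jsonl(records))
```

Passing `min_score=None` keeps every upvoted response, while a threshold combines user feedback with a metric so that only responses that pass both checks reach the dataset.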

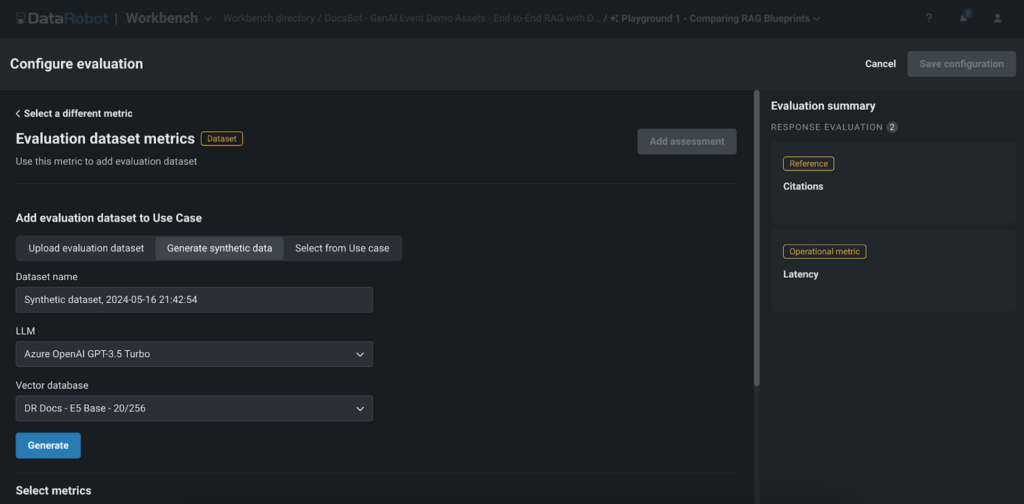

Generating Synthetic Data for Rapid Assessment

One of the unique features of DataRobot’s Playground is the generation of synthetic data for prompt-and-answer evaluation. This feature allows users to quickly and effortlessly generate question-answer pairs based on their own vector database, enabling them to thoroughly evaluate the performance of their RAG experiments without the need for manual data creation.

Synthetic data generation offers several key advantages:

- Time savings: Creating large datasets manually can be time-consuming. DataRobot’s synthetic data generation automates this process, saving valuable time and resources and enabling AI customers and developers to rapidly prototype and test their GenAI applications.

- Scalability: With the ability to generate thousands of question-answer pairs, users can thoroughly test their RAG experiments and ensure robustness across a wide range of scenarios. This comprehensive testing approach helps AI customers and builders deliver high-quality applications that meet the needs and expectations of their end users.

- Quality assessment: By comparing generated responses to synthetic data, users can easily assess the quality and accuracy of their GenAI application. This accelerates the time-to-value for their GenAI applications, allowing organizations to bring their innovative solutions to market faster and gain a competitive advantage in their respective industries.

It is important to note that while synthetic data provide a quick and efficient way to evaluate GenAI applications, they may not always capture the full complexity and nuances of real-world data. Therefore, it is crucial to use synthetic data in combination with real user feedback and other evaluation methods to ensure the robustness and effectiveness of the GenAI application.

Conclusion

DataRobot’s advanced LLM evaluation, testing, and assessment metrics in Playground provide AI customers and developers with a powerful set of tools to build high-quality, reliable, and efficient GenAI applications. By offering comprehensive evaluation metrics, effective experimentation and optimization capabilities, incorporating user feedback, and generating synthetic data for rapid evaluation, DataRobot enables users to fully unlock the potential of LLMs and drive meaningful results.

With increased confidence in model performance, accelerated time to value, and the ability to optimize their applications, AI customers and builders can focus on delivering innovative solutions that solve real-world problems and create value for their end users. DataRobot’s Playground, with its advanced evaluation metrics and unique features, is a game-changer in the GenAI landscape, enabling organizations to push the boundaries of what is possible with large language models.

Don’t miss the opportunity to optimize your projects with the most advanced LLM testing and evaluation platform available. Visit DataRobot’s Playground now and start your journey to building superior GenAI applications that truly stand out in the competitive AI landscape.

About the Author

Nathaniel Daly is a Senior Product Manager at DataRobot focusing on AutoML and time series products. He focuses on bringing advances in data science to users so they can leverage them to solve real-world business problems. He holds a BA in Mathematics from the University of California, Berkeley.
