Imagine you are scrolling through the photos on your phone and you come across an image that you can’t recognize at first. It looks like something fuzzy on the couch. Could it be a pillow or a coat? After a few seconds it clicks: of course! That ball of fluff is your friend’s cat, Mocha. While some of your photos can be understood in an instant, why was this cat photo so much more difficult?

Researchers at the MIT Computer Science and Artificial Intelligence Laboratory (CSAIL) were surprised to find that, despite the critical importance of understanding visual data in areas ranging from healthcare to transportation to home appliances, the notion of how difficult an image is for humans to recognize has been almost entirely ignored. Datasets are one of the main drivers of progress in deep learning-based AI, yet we know little about how data drives progress in large-scale deep learning beyond the idea that bigger is better.

In real-world applications that require understanding visual data, humans outperform object recognition models even though the models perform well on current datasets, including those explicitly designed to challenge machines with degraded images or distribution shifts. This problem persists, in part, because we have no guidance on the absolute difficulty of an image or dataset. Without controlling for the difficulty of the images used for evaluation, it is hard to objectively assess progress toward human-level performance, to cover the range of human abilities, and to increase the challenge posed by a dataset.

To fill this knowledge gap, David Mayo, an MIT PhD student in electrical engineering and computer science and a CSAIL affiliate, delved into the deep world of image datasets, investigating why some images are harder than others for humans and machines to recognize. “Some images inherently take longer to recognize, and it is important to understand brain activity during this process and how it relates to machine learning models. Perhaps there are complex neural circuits or unique mechanisms missing in our current models, visible only when tested with challenging visual stimuli. This exploration is crucial to understanding and improving machine vision models,” says Mayo, a lead author of a new paper on the work.

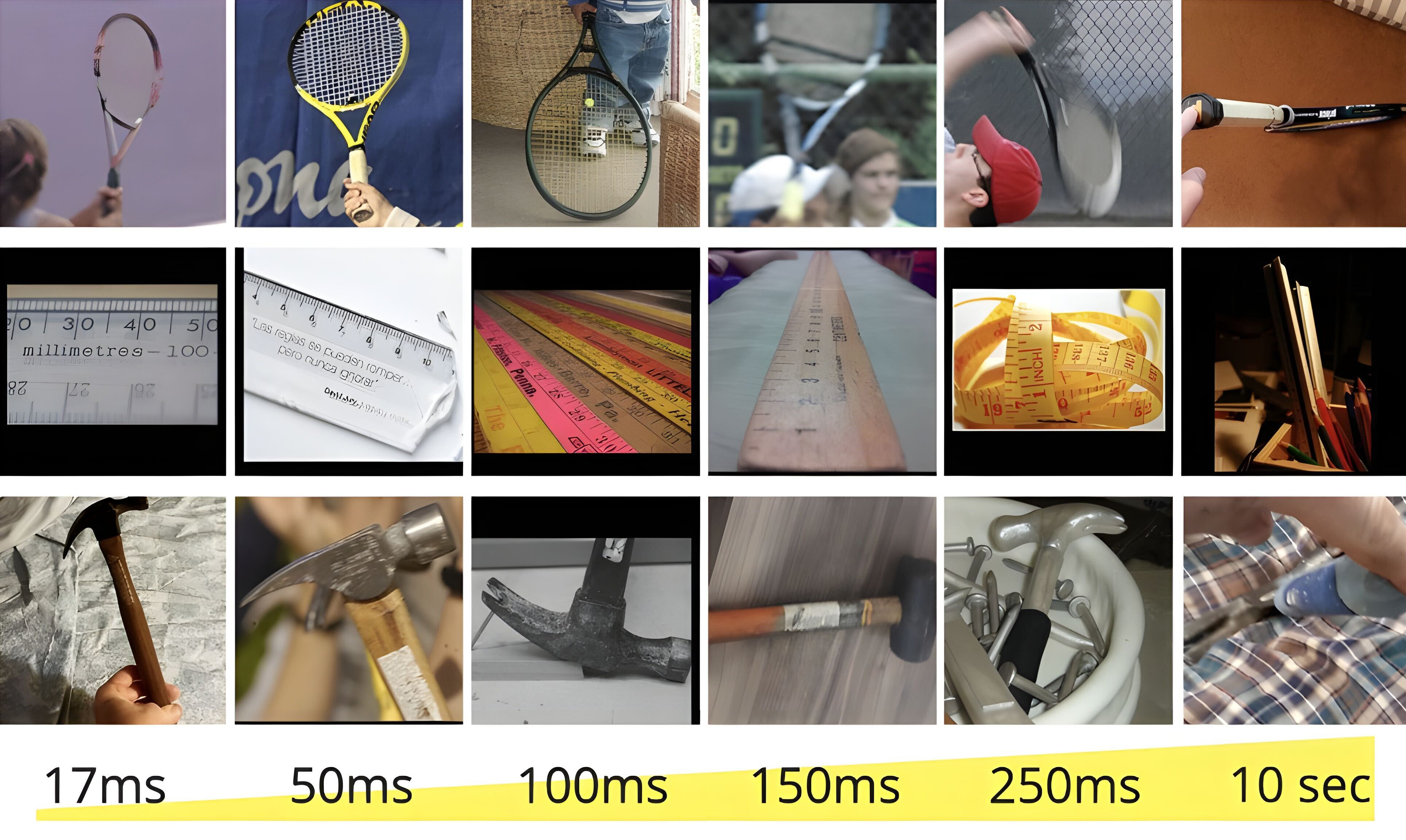

This led to the development of a new metric, the “minimum viewing time” (MVT), which quantifies the difficulty of recognizing an image based on how long a person needs to view it before identifying it correctly. Using a subset of ImageNet, a popular dataset in machine learning, and ObjectNet, a dataset designed to test the robustness of object recognition, the team showed participants images for durations ranging from 17 milliseconds to 10 seconds and asked them to select the correct object from a set of 50 options. After more than 200,000 image presentation trials, the team found that existing test sets, including ObjectNet, appeared skewed toward easier, shorter-MVT images, with the vast majority of benchmark performance derived from images that are easy for humans.
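
To make the idea concrete, here is a minimal sketch of how an MVT could be computed from trial records. It is not the team's released tooling: the function name `minimum_viewing_time`, the record layout, the 150-millisecond intermediate duration, and the majority threshold of 0.5 are all assumptions chosen for illustration. Only the 17-millisecond and 10-second endpoints come from the study as described above.

```python
from collections import defaultdict


def minimum_viewing_time(trials, threshold=0.5):
    """Map each image to the shortest duration (ms) at which the share of
    correct answers exceeds `threshold` (an assumed cutoff).

    `trials` is an iterable of (image_id, duration_ms, correct) tuples.
    Images that never clear the threshold map to None.
    """
    # tally[image_id][duration_ms] = [number correct, number of trials]
    tally = defaultdict(lambda: defaultdict(lambda: [0, 0]))
    for image_id, duration_ms, correct in trials:
        cell = tally[image_id][duration_ms]
        cell[0] += int(correct)
        cell[1] += 1

    mvt = {}
    for image_id, by_duration in tally.items():
        mvt[image_id] = None
        # Walk durations from shortest to longest and stop at the first
        # one where participants are reliably correct.
        for duration_ms in sorted(by_duration):
            n_correct, n_total = by_duration[duration_ms]
            if n_correct / n_total > threshold:
                mvt[image_id] = duration_ms
                break
    return mvt


# Hypothetical trial data: an easy image and a hard one.
example_trials = [
    ("mug", 17, True), ("mug", 17, True), ("mug", 17, False),
    ("cat", 17, False), ("cat", 17, False),
    ("cat", 150, False), ("cat", 150, True),
    ("cat", 10000, True), ("cat", 10000, True),
]
print(minimum_viewing_time(example_trials))
```

In this toy data the mug is recognized by most viewers at the shortest exposure, while the cat only becomes reliably recognizable at the longest one, so the cat would count as the harder image.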

The project identified interesting trends in model performance, particularly with respect to scaling. Larger models showed considerable improvement on simpler images but made less progress on more challenging ones. CLIP models, which incorporate both language and vision, stood out as they moved in the direction of more human-like recognition.

“Traditionally, object recognition datasets have been skewed toward less complex images, a practice that has led to inflated model performance metrics that do not truly reflect the robustness of a model or its ability to handle complex visual tasks. Our research reveals that harder images pose a more acute challenge, causing a distributional shift that is often not accounted for in standard assessments,” says Mayo. “We published sets of difficulty-tagged images along with tools to automatically calculate MVT, allowing MVT to be added to existing benchmarks and extended to various applications. These include measuring test set difficulty before developing real-world systems, discovering neural correlates of image difficulty, and advancing object recognition techniques to close the gap between benchmark and real-world performance.”

“One of my biggest takeaways is that we now have another dimension along which to evaluate models. We want models that are able to recognize any image even if, perhaps especially if, it is difficult for a human to recognize. We are the first to quantify what that would mean. Our results show that not only is this not the case with the current state of the art, but current evaluation methods are not able to tell us when it is the case, because standard datasets are so skewed toward easy images,” says Jesse Cummings, an MIT graduate student in electrical engineering and computer science and co-first author with Mayo on the paper.

From ObjectNet to MVT

A few years ago, the team behind this project identified a major challenge in machine learning: models were struggling with out-of-distribution images, that is, images that were poorly represented in the training data. Enter ObjectNet, a dataset of images collected from real-world settings. The dataset helped illuminate the performance gap between machine learning models and human recognition abilities by eliminating spurious correlations present in other benchmarks, for example between an object and its background. ObjectNet highlighted the gap between the performance of machine vision models on datasets and in real-world applications, encouraging its use by many researchers and developers, which in turn improved model performance.

Fast forward to the present, and the team has taken its research a step further with MVT. Unlike traditional methods that focus on absolute performance, this new approach assesses how models perform by contrasting their responses to the easiest and hardest images. The study further explored how image difficulty could be explained and tested for similarity to human visual processing. Using metrics such as c-score, prediction depth, and adversarial robustness, the team found that harder images are processed differently by networks. “While there are observable trends, such as easier images being more prototypical, a comprehensive semantic explanation of image difficulty continues to elude the scientific community,” says Mayo.
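
The contrast between the easiest and hardest images can be sketched as a simple accuracy gap. This is an illustrative assumption rather than the paper's actual evaluation protocol: the function names, the `(mvt_ms, model_correct)` record layout, and the cutoffs that define "easy" and "hard" are all hypothetical.

```python
def accuracy(flags):
    """Fraction of True values; NaN if there are no records."""
    flags = list(flags)
    return sum(flags) / len(flags) if flags else float("nan")


def easy_hard_gap(records, easy_max_ms, hard_min_ms):
    """Compare model accuracy on easy versus hard images.

    `records` is an iterable of (mvt_ms, model_correct) pairs, where
    mvt_ms is the image's minimum viewing time for humans. The two
    cutoffs are assumed, user-chosen bin edges.
    Returns (easy_accuracy, hard_accuracy, easy_accuracy - hard_accuracy).
    """
    records = list(records)
    easy = [ok for mvt, ok in records if mvt <= easy_max_ms]
    hard = [ok for mvt, ok in records if mvt >= hard_min_ms]
    easy_acc, hard_acc = accuracy(easy), accuracy(hard)
    return easy_acc, hard_acc, easy_acc - hard_acc


# Hypothetical model results on eight images.
example_records = [
    (17, True), (17, True), (50, True), (50, False),
    (10000, False), (10000, True), (10000, False), (10000, False),
]
print(easy_hard_gap(example_records, easy_max_ms=50, hard_min_ms=10000))
```

A large positive gap would indicate a model whose headline accuracy is carried mostly by images that humans also find easy.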

In healthcare, for example, understanding visual complexity becomes even more relevant. The ability of AI models to interpret medical images, such as X-rays, depends on the diversity and difficulty distribution of the images. The researchers advocate a thorough analysis of difficulty distribution tailored to professionals, ensuring that AI systems are evaluated against the standards of experts rather than the interpretations of laypeople.

Mayo and Cummings are currently also examining the neurological underpinnings of visual recognition, investigating whether the brain shows differential activity when processing easy and challenging images. The study aims to reveal whether complex images recruit additional areas of the brain not normally associated with visual processing, hopefully helping to unravel how our brains accurately and efficiently decode the visual world.

Towards human-level performance

Looking ahead, the researchers are not only exploring ways to improve AI’s ability to predict image difficulty; they are also working to identify correlates of viewing-time difficulty in order to generate harder or easier versions of images.

Despite the study’s important strides, the researchers acknowledge limitations, particularly in separating object recognition from visual search tasks. Current methodology focuses on object recognition, omitting the complexities introduced by cluttered images.

“This integrated approach addresses the long-standing challenge of objectively assessing progress toward human-level performance in object recognition and opens new avenues for understanding and advancing the field,” says Mayo. “Because the minimum viewing time difficulty metric can be adapted to a variety of visual tasks, this work paves the way for more robust, human-like performance in object recognition, ensuring that models are truly put to the test and are ready for the complexities of real-world visual understanding.”

“This is a fascinating study of how human perception can be used to identify weaknesses in the way AI vision models are typically benchmarked, which overestimates AI performance by concentrating on easy images,” says Alan L. Yuille, Bloomberg Distinguished Professor of Cognitive Science and Computer Science at Johns Hopkins University, who was not involved in the work. “This will help develop more realistic benchmarks that lead not only to improvements in AI, but also to fairer comparisons between AI and human perception.”

“It’s widely argued that computer vision systems now outperform humans, and on some benchmark datasets, that’s true,” says Anthropic technical staff member Simon Kornblith PhD ’17, who was also not involved in the work. “However, much of the difficulty in these benchmarks comes from the ambiguity of what is in the images; the average person simply does not know enough to classify different dog breeds. This project focuses instead on images that people can only get right if given enough time. These images are generally much more difficult for computer vision systems, but the best systems are only slightly worse than humans.”

Mayo, Cummings, and Xinyu Lin MEng ’22 wrote the paper along with CSAIL Research Scientist Andrei Barbu, CSAIL Principal Research Scientist Boris Katz, and MIT-IBM Watson AI Lab Principal Investigator Dan Gutfreund. The researchers are affiliates of the MIT Center for Brains, Minds, and Machines.

The team is presenting their work at the 2023 Conference on Neural Information Processing Systems (NeurIPS).