The pooling operation involves sliding a 2D filter over each channel of the feature map and summarizing the features located in the region covered by the filter.

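For a feature map with dimensions nh x nw x nc, the dimensions of the output obtained after a pooling layer (with no padding) are

$$\left(\left\lfloor \frac{n_h - f}{s} \right\rfloor + 1\right) \times \left(\left\lfloor \frac{n_w - f}{s} \right\rfloor + 1\right) \times n_c$$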

where

nh - height of feature map

nw - width of feature map

nc - number of channels in the feature map

f - size of filter

s - stride length
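A small helper makes this shape arithmetic concrete. It is a sketch that assumes no padding, which is the default `padding="valid"` of Keras' pooling layers. Under that assumption each spatial dimension becomes ⌊(n − f)/s⌋ + 1 and the channel count nc is unchanged. The function name `pooled_output_shape` is hypothetical.

```python
def pooled_output_shape(n_h, n_w, n_c, f, s):
    """Output shape of a pooling layer with an f x f window, stride s and no padding."""
    out_h = (n_h - f) // s + 1  # floor division implements the floor in the formula
    out_w = (n_w - f) // s + 1
    return out_h, out_w, n_c    # pooling never changes the number of channels


print(pooled_output_shape(4, 4, 1, f=2, s=2))     # 4x4 map, 2x2 window, stride 2
print(pooled_output_shape(28, 28, 64, f=3, s=2))  # overlapping 3x3 windows, stride 2
```

The first call gives (2, 2, 1), which matches the 4×4 Keras examples later in this article. The second gives (13, 13, 64).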

A common CNN model architecture stacks a number of convolution and pooling layers one after the other.

Why use pooling layers?

- Pooling layers are used to reduce the spatial dimensions of feature maps. This reduces the number of parameters to learn in subsequent layers and the amount of computation performed in the network.

- The pooling layer summarizes the features present in a region of the feature map generated by a convolution layer. Further operations are therefore performed on summarized features instead of the precisely positioned features generated by the convolution layer. This makes the model more robust to variations in the position of features in the input image.

Types of pooling layers:

- Max pooling

- Average pooling

- Global pooling

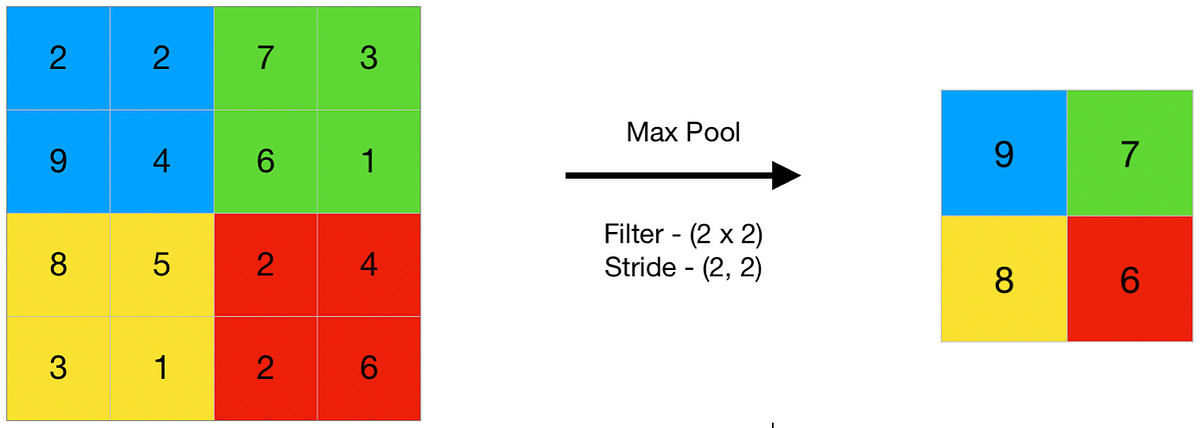

Max Pooling

Max pooling is a pooling operation that selects the maximum element from the region of the feature map covered by the filter. The output of a max-pooling layer is therefore a feature map containing the most prominent features of the previous feature map.

Average Pooling

Average pooling computes the average of the elements present in the region of the feature map covered by the filter. So while max pooling gives the most prominent feature in a particular patch of the feature map, average pooling gives the average of the features present in that patch.

Global Pooling

Global pooling reduces each channel in the feature map to a single value. An nh x nw x nc feature map is therefore reduced to a 1 x 1 x nc feature map. This is equivalent to using a filter of dimensions nh x nw, i.e. the dimensions of the feature map itself.

Global pooling can be either global max pooling or global average pooling.
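The reduction from nh x nw x nc to 1 x 1 x nc can be sketched in NumPy by taking the maximum or the mean over the height and width axes. The random 4 x 4 x 3 feature map is purely illustrative. NumPy's `keepdims=True` is used only to make the 1 x 1 x nc shape visible. Keras' `GlobalMaxPooling2D` and `GlobalAveragePooling2D` return the flattened (batch, nc) form by default.

```python
import numpy as np

# Hypothetical feature map with n_h=4, n_w=4, n_c=3 (random values for illustration)
rng = np.random.default_rng(0)
feature_map = rng.random((4, 4, 3))

# Global pooling collapses the height and width axes (0 and 1) for every channel
global_max = feature_map.max(axis=(0, 1), keepdims=True)
global_avg = feature_map.mean(axis=(0, 1), keepdims=True)

print(global_max.shape)  # one value per channel: (1, 1, 3)
print(global_avg.shape)
```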

MaxPooling is a downsampling operation often used in Convolutional Neural Networks (CNNs) to reduce the spatial dimensions of the input volume. It is a type of pooling layer and helps retain the most important information while discarding less important details. MaxPooling is usually applied after convolutional layers in a CNN.

The basic idea behind MaxPooling is to divide the input image into non-overlapping rectangular regions and, for each region, extract the maximum value. This operation is performed independently for each channel in the input.

Here’s a simple explanation of how MaxPooling works:

Input regions:

- The input feature map is divided into small regions (typically 2×2 or 3×3).

- For each region, the maximum value is computed.

Output feature map:

- The maximum value of each region becomes the corresponding element of the output.

- The result is a downsampled version of the input, with reduced spatial dimensions.

Mathematically, if we denote the input as X and the output as Y, MaxPooling with a 2×2 window and a stride of 2 can be defined as:

Y[i,j,k]=max(X[2i:2i+2,2j:2j+2,k])

where i and j iterate over the height and width dimensions of the output and k iterates over the channels.
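A direct, loop-based translation of this formula might look like the following sketch. It assumes an H x W x C NumPy array (channels last, no batch axis) and the 2×2 window with stride 2 used in the formula. The function name `max_pool_2x2` is hypothetical. If H or W is odd, the last row or column is simply not covered, which matches pooling without padding.

```python
import numpy as np


def max_pool_2x2(X):
    """Loop-based Y[i, j, k] = max(X[2i:2i+2, 2j:2j+2, k]) for an H x W x C array."""
    H, W, C = X.shape
    Y = np.empty((H // 2, W // 2, C), dtype=X.dtype)
    for i in range(H // 2):        # output row
        for j in range(W // 2):    # output column
            for k in range(C):     # each channel is pooled independently
                Y[i, j, k] = X[2 * i:2 * i + 2, 2 * j:2 * j + 2, k].max()
    return Y


# Two-channel example: channel 0 holds 0..15, channel 1 holds the negated values
base = np.arange(16).reshape(4, 4)
X = np.stack([base, -base], axis=-1)

Y = max_pool_2x2(X)
print(Y[..., 0])  # maxima of channel 0
print(Y[..., 1])  # maxima of channel 1, computed independently
```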

Common choices for the pooling window size are 2×2 or 3×3, and the stride (the step size when moving the pooling window) is often set to be equal to the window size for non-overlapping pooling.
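The following sketch shows how window size and stride interact. It is a general 2D max pooling function with an f x f window, a stride of s, and no padding. The name `max_pool2d` is hypothetical. On a 5×5 input, f=2 with s=2 gives non-overlapping windows. With f=3 and s=2, neighboring windows share a row or column, which is overlapping pooling. Both settings produce a 2×2 output.

```python
import numpy as np


def max_pool2d(X, f, s):
    """Max pooling of a 2D array with an f x f window and stride s (no padding)."""
    out_h = (X.shape[0] - f) // s + 1
    out_w = (X.shape[1] - f) // s + 1
    Y = np.empty((out_h, out_w), dtype=X.dtype)
    for i in range(out_h):
        for j in range(out_w):
            # the window starts s steps further along for each output position
            Y[i, j] = X[i * s:i * s + f, j * s:j * s + f].max()
    return Y


X = np.arange(25).reshape(5, 5)
print(max_pool2d(X, f=2, s=2))  # non-overlapping: the last row/column is never covered
print(max_pool2d(X, f=3, s=2))  # overlapping: row 2 and column 2 fall in two windows
```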

```python
import numpy as np
from keras.models import Sequential
from keras.layers import Input, MaxPooling2D

# define input image: a 4x4 single-channel array
image = np.array([[2, 2, 7, 3],
                  [9, 4, 6, 1],
                  [8, 5, 2, 4],
                  [3, 1, 2, 6]], dtype="float32")
# reshape to (batch, height, width, channels), the layout Keras expects
image = image.reshape(1, 4, 4, 1)

# define model containing just a single max pooling layer
model = Sequential([
    Input(shape=(4, 4, 1)),
    MaxPooling2D(pool_size=2, strides=2),
])

# generate pooled output
output = model.predict(image)

# print output image, dropping the batch and channel axes
output = np.squeeze(output)
print(output)
```

```
[[9. 7.]
 [8. 6.]]
```

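Let’s look at a simple MaxPooling example with a 2×2 pooling window and a stride of 2. Consider a small 4×4 input array:

$$X = \begin{bmatrix} 1 & 3 & 2 & 4 \\ 5 & 6 & 7 & 8 \\ 9 & 10 & 11 & 12 \\ 13 & 14 & 15 & 16 \end{bmatrix}$$

Now, let’s apply 2×2 MaxPooling to this input array. The pooling operation moves a 2×2 window across the input and, for each window, takes the maximum value. The output array, Y, will have reduced spatial dimensions:

Y[i,j]=max(X[2i:2i+2,2j:2j+2])

Let’s calculate Y step by step:

- For i=0 and j=0:

$$Y[0,0] = \max(X[0{:}2,\,0{:}2]) = \max\begin{bmatrix} 1 & 3 \\ 5 & 6 \end{bmatrix} = 6$$

- For i=0 and j=1:

$$Y[0,1] = \max(X[0{:}2,\,2{:}4]) = \max\begin{bmatrix} 2 & 4 \\ 7 & 8 \end{bmatrix} = 8$$

- For i=1 and j=0:

$$Y[1,0] = \max(X[2{:}4,\,0{:}2]) = \max\begin{bmatrix} 9 & 10 \\ 13 & 14 \end{bmatrix} = 14$$

- For i=1 and j=1:

$$Y[1,1] = \max(X[2{:}4,\,2{:}4]) = \max\begin{bmatrix} 11 & 12 \\ 15 & 16 \end{bmatrix} = 16$$

The resulting output matrix Y is:

$$Y = \begin{bmatrix} 6 & 8 \\ 14 & 16 \end{bmatrix}$$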
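The step-by-step result can be checked with NumPy. This minimal sketch uses the 4×4 input X = [[1, 3, 2, 4], [5, 6, 7, 8], [9, 10, 11, 12], [13, 14, 15, 16]], which is the array whose 2×2 blocks appear in the calculation. Instead of looping, it computes all four window maxima at once by reshaping X into a grid of 2×2 blocks.

```python
import numpy as np

# 4x4 input whose 2x2 blocks are the ones used in the step-by-step calculation
X = np.array([[1, 3, 2, 4],
              [5, 6, 7, 8],
              [9, 10, 11, 12],
              [13, 14, 15, 16]])

# Axes after reshape: (block_row, row_in_block, block_col, col_in_block),
# so blocks[i, :, j, :] is exactly X[2i:2i+2, 2j:2j+2]
blocks = X.reshape(2, 2, 2, 2)

# Taking the max over the within-block axes gives Y[i, j]
Y = blocks.max(axis=(1, 3))
print(Y)
```

The reshape trick only works for non-overlapping windows whose size divides the input dimensions. A general window and stride need an explicit loop or a sliding-window view.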

Max pooling offers several benefits in the context of CNNs:

- Translation invariance: Max pooling makes the model less sensitive to small shifts in the position of features. This means the network can still recognize a feature when it moves slightly within the image.

- Dimension reduction: By downsampling the input, max pooling reduces the spatial size of the feature maps. This cuts the number of parameters in subsequent layers and the amount of computation in the network, which speeds up training and can reduce the risk of overfitting.

- Noise suppression: Max pooling can help suppress noise in the input data. By taking the maximum value within each window, it emphasizes the presence of strong features and discards weaker ones.

In practice, max pooling layers are placed after convolutional layers in a CNN. After a convolutional layer extracts features from the input image, the max pooling layer reduces the spatial size of the resulting feature map, retaining only the most prominent activations. This process is repeated over multiple stages of convolution and pooling, allowing the network to learn a hierarchy of features at increasing levels of abstraction.

Max Pooling is a simple yet effective technique that has been instrumental in the success of CNNs in various applications, particularly in image and video recognition tasks. Its ability to reduce the computational load while preserving essential features has made it a key component in deep learning architectures.

Despite its advantages, max pooling is not without its challenges. One criticism is that it can sometimes be too aggressive, discarding potentially useful information that could be important to the classification task. Additionally, max-pooling is a fixed function and does not learn from data, unlike convolutional layers that have learnable parameters.

As a result, some modern CNN architectures have moved away from traditional max-pooling layers. They instead use alternatives such as strided convolutions for downsampling, or pooling operations that can learn to adapt to the data.
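The following sketch shows why a strided convolution can take over the downsampling role. It implements a valid 2D cross-correlation with stride s, which is what CNN convolution layers compute. A 2×2 kernel with stride 2 produces the same output size as 2×2 pooling with stride 2. The kernel here is a hypothetical fixed one with uniform weights of 1/4, and with it the convolution reproduces average pooling exactly. A real strided convolution layer would learn its weights instead. No single fixed kernel can reproduce max pooling, because a maximum is not a weighted sum. The name `strided_conv2d` is hypothetical.

```python
import numpy as np


def strided_conv2d(X, kernel, s):
    """Valid 2D cross-correlation with a square kernel and stride s."""
    f = kernel.shape[0]
    out_h = (X.shape[0] - f) // s + 1
    out_w = (X.shape[1] - f) // s + 1
    Y = np.empty((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            # weighted sum of the window instead of a fixed max
            Y[i, j] = np.sum(X[i * s:i * s + f, j * s:j * s + f] * kernel)
    return Y


image = np.array([[2, 2, 7, 3],
                  [9, 4, 6, 1],
                  [8, 5, 2, 4],
                  [3, 1, 2, 6]], dtype=float)

# Hypothetical fixed kernel: uniform weights of 1/4 reproduce 2x2 average pooling.
# In a trained network these four weights would be learnable parameters.
kernel = np.full((2, 2), 0.25)
print(strided_conv2d(image, kernel, s=2))
```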


```python
from tensorflow import keras
from tensorflow.keras import layers

model = keras.Sequential([
    layers.Conv2D(filters=64, kernel_size=3),  # activation is None
    layers.MaxPool2D(pool_size=2),
    # More layers follow
])
```

A MaxPool2D layer is very similar to a Conv2D layer, except that it uses a simple max function instead of a kernel, with the pool_size parameter analogous to kernel_size. Unlike a convolutional layer, however, a MaxPool2D layer has no trainable weights, since it has no kernel.

Let’s take another look at the feature extraction process from the previous lesson. Remember that MaxPool2D is the Condense step.

Note that after applying the ReLU function (Detect), the feature map ends up with a lot of “dead space”, i.e. large areas containing only 0s (the black areas in the image). Carrying these 0 activations through the entire network would increase the size of the model without adding much useful information. Instead, we would like to condense the feature map to retain only the most useful part: the feature itself.

This is what max pooling does. Max pooling takes a patch of activations in the original feature map and replaces it with the maximum activation in that patch.

When applied after ReLU activation, it has the effect of “boosting” the features. The pooling step increases the ratio of active pixels to zero pixels.
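The effect on the ratio of active pixels can be illustrated with a small hypothetical feature map whose values are made up for illustration. ReLU zeroes out the negative responses and leaves 3 active pixels out of 16. 2×2 max pooling with stride 2 keeps those three maxima in a 2×2 output, so the fraction of active pixels rises from 0.1875 to 0.75.

```python
import numpy as np

# Hypothetical pre-activation feature map (mostly negative responses)
x = np.array([[-1, -2, -1, -3],
              [-2,  3, -1, -1],
              [-1, -1, -2,  2],
              [ 1, -3, -1, -1]])

relu = np.maximum(x, 0)                              # ReLU: negative values become 0
pooled = relu.reshape(2, 2, 2, 2).max(axis=(1, 3))   # 2x2 max pooling, stride 2

print(pooled)               # each 2x2 patch replaced by its maximum activation
print(np.mean(relu > 0))    # fraction of active pixels before pooling
print(np.mean(pooled > 0))  # fraction of active pixels after pooling
```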

Translation Invariance

We called the zero pixels “unimportant”. Does that mean they carry no information at all? In fact, zero pixels carry positional information. The blank space still positions the feature inside the image. When MaxPool2D removes some of those pixels, it removes some of the positional information in the feature map. This gives a convnet a property called translation invariance: a convnet with max pooling will tend not to distinguish features by their location in the image. (“Translation” is the mathematical word for changing the position of something without rotating it or changing its shape or size.)

Watch what happens when we repeatedly apply max pooling to the feature map below.

The two dots in the original image became indistinguishable after repeated pooling. In other words, pooling destroyed some of their positional information. Since the network can no longer distinguish them in the feature maps, it cannot distinguish them in the original image either: it has become invariant to that difference in position.

In fact, pooling only creates translation invariance over small distances, as with the two dots in the image. Features that start far apart will remain distinct after pooling. Only some of the positional information was lost, not all of it.
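This short-range invariance can be sketched with hypothetical 8×8 feature maps that each contain a single active pixel. A one-pixel shift that stays within the same 2×2 pooling window produces an identical pooled map. A four-pixel shift lands in a different window and stays distinguishable. Pooling repeatedly until the map is 1×1 eventually erases even the larger shift. The helper names are illustrative.

```python
import numpy as np


def max_pool_2d_2x2(X):
    """2x2 max pooling with stride 2 on a 2D array with even sides."""
    h, w = X.shape
    return X.reshape(h // 2, 2, w // 2, 2).max(axis=(1, 3))


def dot_at(row, col, size=8):
    """Hypothetical size x size feature map with a single active pixel (a 'dot')."""
    X = np.zeros((size, size))
    X[row, col] = 1.0
    return X


a = dot_at(0, 0)
b = dot_at(1, 1)  # shifted by one pixel, still inside the same 2x2 window
c = dot_at(0, 4)  # shifted by four pixels, into a different window

same_small = np.array_equal(max_pool_2d_2x2(a), max_pool_2d_2x2(b))
same_large = np.array_equal(max_pool_2d_2x2(a), max_pool_2d_2x2(c))
print(same_small)  # the small shift is lost after one pooling step
print(same_large)  # the large shift survives one pooling step

# Three rounds of pooling: 8x8 -> 4x4 -> 2x2 -> 1x1
a3 = max_pool_2d_2x2(max_pool_2d_2x2(max_pool_2d_2x2(a)))
c3 = max_pool_2d_2x2(max_pool_2d_2x2(max_pool_2d_2x2(c)))
print(np.array_equal(a3, c3))  # repeated pooling finally merges the distant positions too
```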

This invariance to small differences in feature position is a useful property for an image classifier. Because of differences in perspective or framing, the same kind of feature may appear in different parts of the original image, but we would still like the classifier to recognize that they are the same.

Other Pooling Layers

```python
import numpy as np
from keras.models import Sequential
from keras.layers import Input, AveragePooling2D

# define input image: the same 4x4 single-channel array
image = np.array([[2, 2, 7, 3],
                  [9, 4, 6, 1],
                  [8, 5, 2, 4],
                  [3, 1, 2, 6]], dtype="float32")
image = image.reshape(1, 4, 4, 1)  # (batch, height, width, channels)

# define model containing just a single average pooling layer
model = Sequential([
    Input(shape=(4, 4, 1)),
    AveragePooling2D(pool_size=2, strides=2),
])

# generate pooled output
output = model.predict(image)

# print output image
output = np.squeeze(output)
print(output)
```

```
[[4.25 4.25]
 [4.25 3.5 ]]
```

```python
import numpy as np
from keras.models import Sequential
from keras.layers import Input, GlobalMaxPooling2D, GlobalAveragePooling2D

# define input image
image = np.array([[2, 2, 7, 3],
                  [9, 4, 6, 1],
                  [8, 5, 2, 4],
                  [3, 1, 2, 6]], dtype="float32")
image = image.reshape(1, 4, 4, 1)  # (batch, height, width, channels)

# define gm_model containing just a single global-max pooling layer
gm_model = Sequential([Input(shape=(4, 4, 1)), GlobalMaxPooling2D()])

# define ga_model containing just a single global-average pooling layer
ga_model = Sequential([Input(shape=(4, 4, 1)), GlobalAveragePooling2D()])

# generate pooled output: one value per channel, shape (1, 1)
gm_output = gm_model.predict(image)
ga_output = ga_model.predict(image)

# print output image
gm_output = np.squeeze(gm_output)
ga_output = np.squeeze(ga_output)
print("gm_output: ", gm_output)  # largest of the 16 values: 9
print("ga_output: ", ga_output)  # mean of the 16 values: 65 / 16 = 4.0625
```

This concludes the basic overview of the MaxPooling layer in CNN architectures.