kMeans clustering of the web visitors to Google’s Merchandise Store

Many data science projects I have seen that segment customers for marketing rely on purchase data, usually taken from actual sales records in CRM tools or from a revenue amount attached to a Google Analytics conversion goal.

But not every visitor arriving on a website converts to a customer. And this process of conversion, from arriving on a website to eventually converting, can be a long one, taking days or weeks in the case of a B2C product or even months up to a year in the case of B2B sales.

This is where “content marketing” is practiced, where marketing teams develop content to attract those visitors and entice them to return to the website for repeated visits to build a relationship and loyalty over time and eventually convert when the need arises.

When I used to work in content marketing, one of the early stages in strategy development for this practice involved the creation of “personas”. This is where we would come up with buckets or categories if you will, of audience groups that would be representative of a significant set of people and distinct enough that they required slightly different marketing strategies.

This has usually been done based on external research in the form of surveys and reports and some superficial metrics like social media following. While this is useful, it is also helpful to see what types of people are already interacting with your website.

So I thought it would be a good exercise to see if this can be done using Google Analytics data on the visitors to a website. In this project, I cluster the web visitors to Google’s Merchandise Store with the kMeans algorithm, based on their web analytics data. The clustering stage is preceded by the standard steps of data science, including cleaning, exploration, feature selection and preprocessing.

(If you are pressed for time, check out a more condensed version of this article with just the key insights here.)

The data for this project are from select files used for the Google Customer Revenue Prediction Competition on Kaggle.

The first dataset involves 903653 observations of visits to Google’s Merchandise Store over a period of a year, from the 1st of August 2016 to the 1st of August 2017.

The second is another dataset related to this same Google store data, which includes more numerical variables like Sessions, Average Session Duration, Bounce Rate, Transactions and Goal Conversion Rate. This was not part of the original Kaggle competition, but it came about as a result of a “leak” in the competition dataset, which sparked some discussion in their forum, after which the competition was shut down.

The data has been provided by a Kaggle user in corresponding csv files here. We can merge this into the first dataset when needed.

According to the instructions from Google on the Kaggle competition, due to formatting issues, IDs like fullVisitorId should be loaded as strings to be properly unique.

#Loading files with some ID fields as strings

import numpy as np

import pandas as pd

df = pd.read_csv('extracted_fields_train', dtype={'date': str,'fullVisitorId': str, 'sessionId':str, 'visitId': np.int64})

# df.shape

The original dataset consists of 903653 observations and 30 features.
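To see why the IDs are loaded as strings, here is a minimal sketch on a made-up two-row CSV (the ID values are invented for illustration and are not from the dataset). With default parsing, pandas infers a numeric column and an ID with a leading zero no longer matches what was stored.

```python
import io

import pandas as pd

# Hypothetical CSV content; the IDs are invented for illustration only.
csv_text = (
    "fullVisitorId,visitNumber\n"
    "0123456789012345678,1\n"
    "1234567890123456789,2\n"
)

# Default parsing infers a numeric column, so the leading zero is lost.
as_numbers = pd.read_csv(io.StringIO(csv_text))

# Forcing the ID column to str keeps every character exactly as stored.
as_strings = pd.read_csv(io.StringIO(csv_text), dtype={"fullVisitorId": str})

print(as_numbers["fullVisitorId"].tolist())
print(as_strings["fullVisitorId"].tolist())
```

Two IDs that differ only by a leading zero would collapse into one visitor under numeric parsing, which is why the string dtype is the safer default here.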

We do some basic exploration first, after checking for duplicate observations, unique values, missing values and some renaming of columns for simplicity.

duplicates = df.duplicated().sum()

# duplicates

#0 so there are no duplicates

#Exploring the different categories of channelGrouping

unique_values = df['channelGrouping'].unique()

#print('Unique values:', unique_values)

# They are ['Organic Search' 'Referral' 'Paid Search' 'Affiliates' 'Direct' 'Display' 'Social' '(Other)']

Now it is useful to really understand what all these variables mean.

- channelGrouping : The channel via which the user came to the Store. Could be one of ‘Organic Search’ ‘Referral’ ‘Paid Search’ ‘Affiliates’ ‘Direct’ ‘Display’ ‘Social’ or ‘(Other)’

- date : The date of the visit

- fullVisitorId : A unique identifier assigned to each individual user who visits a website.

- sessionId : A unique identifier assigned to each user session, which refers to the period between first visit and a set period of inactivity or when the user leaves the website.

- visitId : A unique identifier assigned to each session that is used to distinguish one session from another.

- visitNumber : Count of the number of sessions a user has had on the site. Each time a user starts a new session, visitNumber +1

- visitStartTime : The timestamp (expressed as POSIX time).

- device.browser, device.deviceCategory, device.isMobile and device.operatingSystem : The details for the device used to access the Store. 54 categories for browser, 3 for deviceCategory (desktop, mobile, tablet), 20 for OS, and 2 for isMobile (1 or 0 binary)

- geoNetwork.city, geoNetwork.continent, geoNetwork.country, geoNetwork.metro, geoNetwork.networkDomain, geoNetwork.region, geoNetwork.subContinent : All information about the geography of the user (649 cities, 6 continents, 222 countries, 94 metros, 28064 network domains, 376 regions, 23 subcontinents)

- totals.bounces — measures the number of “single-page sessions” (where the user lands on a page and then leaves the website without visiting any other pages)

NOTE: The totals.bounces metric does not take into account the duration of the session. Even if a user spends a significant amount of time on a single page, if they do not visit any other pages on the website or app before leaving, it will still be counted as a bounce.

Date seems to be in an object data type. We convert it to datetime format.

df['date'] = pd.to_datetime(df['date'], format='%Y-%m-%d')

Some cleaning as follows:

- Some of these column names are a bit long and unwieldy so we rename them for simplicity

- Since we have the device category variable, there is no need for the device.isMobile variable as it is redundant

- Since if channel is not Direct, isTrueDirect will always be false and vice versa, we can drop this too

## Renaming some of the columns with unwieldy names, dropping unnecessary ones and changing the date variable to a datetime format

renamed = df.rename(columns={

'channelGrouping': 'channel',

'fullVisitorId': 'visitorID',

'device.browser': 'browser',

'device.deviceCategory': 'device',

'device.operatingSystem': 'os',

'geoNetwork.city': 'city',

'geoNetwork.continent': 'continent',

'geoNetwork.country': 'country',

'geoNetwork.metro': 'metro',

'geoNetwork.networkDomain': 'networkDomain',

'geoNetwork.region': 'region',

'geoNetwork.subContinent': 'subContinent',

'totals.bounces': 'bounces',

'totals.hits': 'hits',

'totals.newVisits': 'newVisits',

'totals.pageviews': 'pageviews',

'totals.transactionRevenue': 'transactionRevenue',

'trafficSource.adContent': 'adContent',

'trafficSource.campaign': 'campaign',

'trafficSource.keyword': 'keyword',

'trafficSource.medium': 'medium',

'trafficSource.referralPath': 'referralPath',

'trafficSource.source': 'source'})

renamed = renamed.drop(['device.isMobile', 'trafficSource.isTrueDirect'], axis=1)

renamed['date'] = pd.to_datetime(renamed['date'], format='%Y-%m-%d')

Some further exploration using visualizations may be useful to understand the distribution of some of the numerical variables, and also the proportions of observations that fall in different categories of the categorical variables.

# Visualize the distributions of some of the variables

import matplotlib.pyplot as plt

import seaborn as sns

fig, axes = plt.subplots(3, 2, figsize=(12, 12))

# Plot 1

sns.barplot(x="count", y="index", data=renamed['channel'].value_counts().rename_axis('index').reset_index(name='count'), ax=axes[0, 0])

axes[0, 0].set_title('Distribution of Channels')

# Plot 2

sns.barplot(x="index", y="count", data=renamed['continent'].value_counts().rename_axis('index').reset_index(name='count'), ax=axes[0, 1])

axes[0, 1].set_title('Distribution of Continents')

# Plot 3

sns.barplot(x="count", y="index", data=renamed['country'].value_counts().rename_axis('index').reset_index(name='count').head(10), ax=axes[1, 0])

axes[1, 0].set_title('Distribution of Top 10 Countries')

# Plot 4 - Browser

sns.barplot(x="count", y="index", data=renamed['browser'].value_counts().rename_axis('index').reset_index(name='count').head(10), ax=axes[1, 1])

axes[1, 1].set_title('Distribution of Browsers')

# Plot 5 - Devices

sns.barplot(x="index", y="count", data=renamed['device'].value_counts().rename_axis('index').reset_index(name='count'), ax=axes[2, 0])

axes[2, 0].set_title('Distribution of Devices')

# Plot 6 - OS

sns.barplot(x="count", y="index", data=renamed['os'].value_counts().rename_axis('index').reset_index(name='count'), ax=axes[2, 1])

axes[2, 1].set_title('Distribution of OS')

fig.tight_layout()

plt.show()

From these graphs, we can see that:

- Channels: Google search is the most popular source of visits, followed by Social channels and Direct. Paid search and ads are not a major source.

- Continents: The Americas receive a greater number of visits compared to any other continent, nearly twice as many as Asia and Europe combined.

- Countries: When considering individual countries, the USA and India attract the highest number of visitors, followed by the UK.

- Browser: Chrome is the most commonly used browser, with Safari being the second most popular choice.

- Device: Most visitors come to the website on a desktop, but there are also a lot of visitors on mobile and a few from tablets as well

- OS: Most visitors are Windows users, then Apple Mac users. Then comes Android users on mobile, followed by iOS users. This may just be useful to get an idea of visitors’ “lifestyle choices,” such as their preferred operating systems (for example, Mac users versus PC users).

We can also visualise the distribution of visitors across the globe through a world map graphic as follows.

#Visualising the distribution of country

import geopandas as gpd

import matplotlib.pyplot as plt

# group data by country and count the number of rows for each country

counts = renamed.groupby('country').size().reset_index(name='count')

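# read world map shapefile into geopandas dataframe

# (gpd.datasets was deprecated in GeoPandas 0.14 and removed in 1.0; on newer versions, pass the path of a downloaded Natural Earth shapefile instead)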

world_map = gpd.read_file(gpd.datasets.get_path('naturalearth_lowres'))

# merge counts with world map dataframe

merged = world_map.merge(counts, left_on='name', right_on='country', how='left')

# create figure and axis

fig, ax = plt.subplots(figsize=(12,6))

# plot the world map as a choropleth, shading each country by its row count

merged.plot(column='count', ax=ax, legend=True, legend_kwds={'label': "Count of Rows by Country"})

# set plot title

ax.set_title("World Map with Count of Rows by Country", fontsize=16)

# show plot

plt.show()

Next it is useful to explore how the visits change over time, throughout the year and on a weekly basis to see if there are any patterns we can take advantage of in the eventual clustering.

# Visualize the distributions of some of the variables

fig, axes = plt.subplots(2, 2, figsize=(12, 8))

# Plot 1

ax1 = axes[0, 0]

renamed.date.value_counts().sort_index().plot(label="train", ax=ax1)

ax1.set_title('Daily Visits Through the Year')

# Plot 2, for extracting weekday to visualise the observations per day of the Week

ax2 = axes[0, 1]

day_of_week = renamed['date'].dt.dayofweek

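# sort_index() puts the days in Mon (0) to Sun (6) order so the tick labels below match the bars

day_count = day_of_week.value_counts().sort_index()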

ax2.bar(day_count.index, day_count.values)

ax2.set_xticks(day_count.index)

ax2.set_xticklabels(['Mon', 'Tue', 'Wed', 'Thu', 'Fri', 'Sat', 'Sun'])

ax2.set_title('Visits in the Week')

ax2.set_xlabel('Day of the Week')

ax2.set_ylabel('Count')

# Plot 3, for extracting the hour of the day they started the sessions from visitStartTime

ax3 = axes[1, 0]

renamed['visitStartTime'] = pd.to_datetime(renamed['visitStartTime'], unit='s')

renamed['Hour'] = renamed['visitStartTime'].dt.hour

renamed['Hour'].hist(bins=24, edgecolor='black', ax=ax3)

ax3.set_xlabel('Hour of the Day')

ax3.set_ylabel('Frequency')

ax3.set_title('Visits at Hour in the Day')

# Remove empty subplot

fig.delaxes(axes[1, 1])

# Adjust the spacing between subplots

fig.tight_layout()

# Display the plots

plt.show()
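One detail in the weekday plot deserves a quick illustration: `value_counts()` returns the days ordered by frequency, not by calendar order, so the counts need to be sorted by index before they are paired with the labels Mon to Sun. A minimal sketch on a handful of made-up dates:

```python
import pandas as pd

# Hypothetical visit dates: three Tuesdays, one Monday, two Sundays.
dates = pd.Series(pd.to_datetime([
    "2017-01-03", "2017-01-03", "2017-01-10",  # Tuesdays
    "2017-01-02",                               # Monday
    "2017-01-01", "2017-01-08",                 # Sundays
]))

day_of_week = dates.dt.dayofweek  # Monday=0 ... Sunday=6

# Ordered by count: Tuesday first, then Sunday, then Monday.
by_count = day_of_week.value_counts()

# Ordered by weekday, with days that never occur filled in as 0.
by_day = day_of_week.value_counts().sort_index().reindex(range(7), fill_value=0)

print(by_count.index.tolist())
print(by_day.tolist())
```

Labelling the bars from `by_count` with a fixed Mon to Sun list would attach the wrong name to each bar, while `by_day` lines up with the labels position by position.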

As we can see here, the store experienced a higher number of visits during the period from October to January. This can be attributed to the start of the holiday season, as people engage in extensive shopping for themselves or to purchase gifts for others.

Unexpectedly, the number of visits is lower on weekends compared to weekdays.

We already know that we can drop some of the features. Just to be thorough, we also check for multicollinearity to see if there are any highly correlated variables.

# Correlations are only defined for numeric columns, so select those first

corr_matrix = renamed.select_dtypes(include=['number', 'bool']).corr()

# Visualize the correlation matrix using a heatmap

sns.heatmap(corr_matrix, annot=True)

plt.title('Correlation Matrix')

plt.show()

When two or more variables are highly correlated, it can cause issues with model interpretation and stability.

We see that hits and pageviews are very highly correlated, so we have to remove one of them. Bounces, newVisits and isTrueDirect are binary categorical variables, so they do not show meaningful numbers in the above heatmap.
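The same check can be done programmatically instead of by eye. The sketch below uses synthetic data in which `hits` is nearly a copy of `pageviews`; the 0.9 cutoff is an assumption chosen for illustration, not a value used in this analysis.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(0)
pageviews = rng.integers(1, 30, size=500)

# Synthetic stand-in for the real data: hits tracks pageviews closely.
toy = pd.DataFrame({
    "pageviews": pageviews,
    "hits": pageviews + rng.integers(0, 3, size=500),
    "visitNumber": rng.integers(1, 10, size=500),  # unrelated to the others
})

corr = toy.corr().abs()

# Keep only the upper triangle so each pair of variables is inspected once.
upper = corr.where(np.triu(np.ones(corr.shape, dtype=bool), k=1))

threshold = 0.9  # assumed cutoff for "highly correlated"
to_drop = [col for col in upper.columns if (upper[col] > threshold).any()]
reduced = toy.drop(columns=to_drop)

print(to_drop)
```

For each highly correlated pair this keeps the column that appears first and drops the later one, which here means keeping `pageviews` and dropping `hits`.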

Additionally, any kind of IDs don’t mean anything for clustering or any model, so we can drop ‘sessionId’, ‘visitId’ (‘fullVisitorId’ we need to keep for clustering).

Also, since we have the device category variable, there is no need for the device.isMobile variable as it is redundant.

And lastly, if channel is not Direct, isTrueDirect will always be false and vice versa (except for nan missing values), we can drop this too.

We first try clustering based only on the numerical variables. So for the first clustering, we will remove all the other variables.

For clustering we use the k-Means algorithm.

The k-Means algorithm is an unsupervised machine learning algorithm that groups similar data points together based on features.

It iteratively assigns each data point to a cluster based on the distance between the data point and the centroid of the cluster. We will specify the number of clusters k beforehand.
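To make the assign-and-update loop concrete, here is a bare-bones NumPy sketch of the idea. It is an illustration only: `kmeans_sketch` is a hypothetical helper written for this article, the data are two synthetic blobs, and the actual clustering in this project uses scikit-learn's `KMeans`.

```python
import numpy as np


def kmeans_sketch(X, k, n_iter=20, seed=0):
    """Alternate between assigning points to the nearest centroid
    and moving each centroid to the mean of its assigned points."""
    rng = np.random.default_rng(seed)
    # Start from k distinct data points picked at random.
    centroids = X[rng.choice(len(X), size=k, replace=False)]
    for _ in range(n_iter):
        # Distance from every point to every centroid, shape (n_points, k).
        dists = np.linalg.norm(X[:, None, :] - centroids[None, :, :], axis=2)
        labels = dists.argmin(axis=1)
        # Recompute each centroid; keep the old one if its cluster is empty.
        centroids = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
            for j in range(k)
        ])
    return labels, centroids


# Two well-separated synthetic blobs of 50 points each.
rng = np.random.default_rng(1)
blob_a = rng.normal(loc=[0, 0], scale=0.3, size=(50, 2))
blob_b = rng.normal(loc=[5, 5], scale=0.3, size=(50, 2))
X = np.vstack([blob_a, blob_b])

labels, centroids = kmeans_sketch(X, k=2)
print(centroids.round(1))
```

The number of clusters `k` is an input, not something the algorithm discovers, which is why it has to be specified beforehand.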

firstclust = renamed.drop(['channel','hits', 'date', 'sessionId', 'visitId',

'visitStartTime', 'browser','bounces', 'newVisits',

'device', 'os',

'city', 'continent', 'country',

'metro', 'networkDomain', 'region',

'subContinent', 'adContent', 'campaign', 'keyword',

'medium', 'referralPath',

'source'], axis=1)

#Converted transactions into log

firstclust['transactionRevenue'] = np.log(firstclust['transactionRevenue'])

For the kMeans algorithm to work, we cannot have any missing values. To address this we will impute any missing values with the mean.

from sklearn.impute import SimpleImputer

# Replace missing values with mean

imputer = SimpleImputer(strategy='mean')

firstclust[['visitNumber', 'pageviews', 'transactionRevenue']] = imputer.fit_transform(firstclust[[ 'visitNumber', 'pageviews', 'transactionRevenue']])
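As a small illustration of what mean imputation does, the sketch below runs `SimpleImputer` on a made-up 3x2 array with one missing value per column.

```python
import numpy as np
from sklearn.impute import SimpleImputer

# Hypothetical data with one missing value in each column.
toy = np.array([
    [1.0, 10.0],
    [np.nan, 20.0],
    [3.0, np.nan],
])

imputer = SimpleImputer(strategy="mean")
filled = imputer.fit_transform(toy)

# Each NaN is replaced by the mean of the observed values in its column:
# (1 + 3) / 2 = 2 for the first column, (10 + 20) / 2 = 15 for the second.
print(filled)
```

One consequence worth keeping in mind is that every row with a missing value ends up sitting exactly at the column mean, so a visit with no recorded revenue is treated as if it had average revenue.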

We also have to standardize the variables before clustering. Standardization is a linear transformation that rescales each feature to zero mean and unit variance. This matters because kMeans assigns points to clusters by Euclidean distance: if the features are on very different scales, the feature with the largest variance dominates the distance calculation, and the others barely influence which cluster an observation lands in. Putting all features on a comparable scale usually gives more meaningful clusters.
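A quick way to see the effect of scale on distances is to compare them before and after standardizing. The three visitors below are invented for illustration, with one small-scale feature and one large-scale feature.

```python
import numpy as np
from sklearn.preprocessing import StandardScaler

# Hypothetical visitors: [visitNumber, transactionRevenue in raw units]
X = np.array([
    [1.0, 1_000_000.0],
    [9.0, 1_000_500.0],   # very different visitNumber, almost the same revenue
    [1.0, 1_200_000.0],   # same visitNumber, different revenue
])


def dist(a, b):
    """Euclidean distance between two rows."""
    return float(np.linalg.norm(a - b))


# On the raw data the revenue column decides everything.
raw_01, raw_02 = dist(X[0], X[1]), dist(X[0], X[2])

# After standardizing, both columns contribute on a comparable scale.
X_scaled = StandardScaler().fit_transform(X)
sc_01, sc_02 = dist(X_scaled[0], X_scaled[1]), dist(X_scaled[0], X_scaled[2])

print(raw_01, raw_02)
print(sc_01, sc_02)
```

On the raw values, visitor 0 looks hundreds of times closer to visitor 1 than to visitor 2 purely because revenue is measured in large units. After scaling, the two distances are of similar size, so the difference in visitNumber counts too.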

from sklearn.preprocessing import OneHotEncoder, StandardScaler

scaler = StandardScaler()

df_scaled = scaler.fit_transform(firstclust[['visitNumber', 'pageviews', 'transactionRevenue']])

from sklearn.cluster import KMeans

kmeans = KMeans(n_clusters=5, random_state=42)

clusters = kmeans.fit_predict(df_scaled)

The clusters themselves are simply an array of labels giving, for each observation in the dataframe, the number of the cluster it was assigned to. To understand what each cluster represents, we have to map the labels back to the original, unscaled dataset. For this we recover the unscaled values using the inverse transform.

# Inverse transform to get original data

original_data = scaler.inverse_transform(df_scaled)
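As a rough sketch of how the labels can be tied back to the unscaled values, the toy example below clusters six made-up visitors, checks that the inverse transform recovers the original numbers, and then summarises each cluster by its mean. The per-cluster summary table is an addition for illustration and is not part of the original analysis.

```python
import numpy as np
import pandas as pd
from sklearn.cluster import KMeans
from sklearn.preprocessing import StandardScaler

# Hypothetical visitors: three light users and three heavy users.
toy = pd.DataFrame({
    "visitNumber": [1, 1, 2, 20, 22, 25],
    "pageviews": [2, 3, 2, 15, 18, 14],
})

scaler = StandardScaler()
scaled = scaler.fit_transform(toy)
labels = KMeans(n_clusters=2, n_init=10, random_state=42).fit_predict(scaled)

# inverse_transform undoes the scaling and recovers the original values.
restored = scaler.inverse_transform(scaled)

# Attach the labels to the unscaled data and summarise each cluster.
profile = toy.assign(cluster=labels).groupby("cluster").mean()
print(profile)
```

Reading cluster averages in the original units (visits, pages) is usually easier than reading them in standard deviations.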

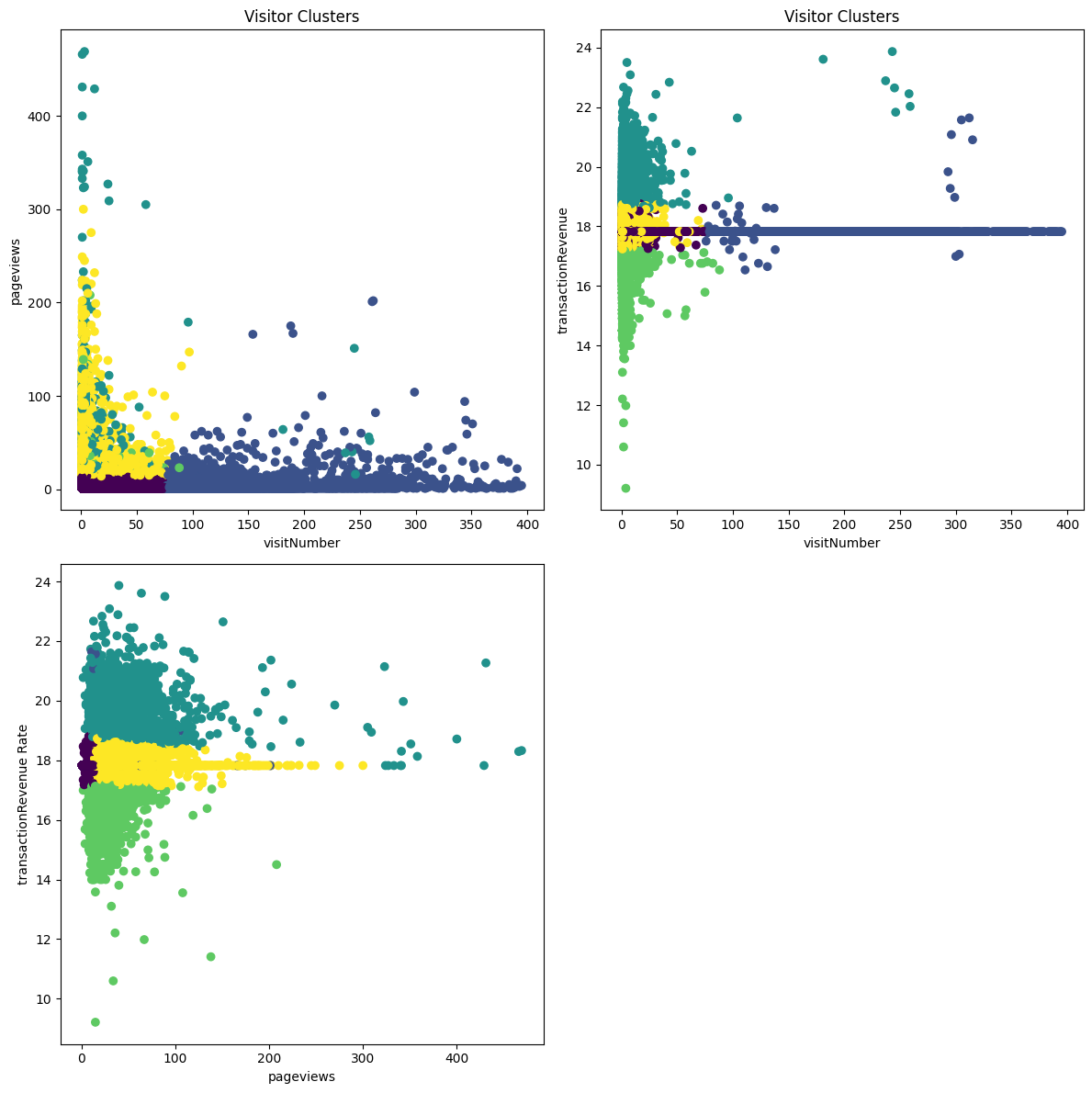

To visualize what each of the clusters looks like, we view the relationship between 2 variables at a time, since anything beyond two dimensions is hard to interpret visually.

fig, axs = plt.subplots(nrows=2, ncols=2, figsize=(12, 12))

# Plot 1

axs[0, 0].scatter(firstclust['visitNumber'], firstclust['pageviews'],c=clusters)

axs[0, 0].set_xlabel('visitNumber')

axs[0, 0].set_ylabel('pageviews')

axs[0, 0].set_title('Visitor Clusters')

# Plot 2

axs[0, 1].scatter(firstclust['visitNumber'], firstclust['transactionRevenue'],c=clusters)

axs[0, 1].set_xlabel('visitNumber')

axs[0, 1].set_ylabel('transactionRevenue')

axs[0, 1].set_title('Visitor Clusters')

# Plot 3

axs[1, 0].scatter(firstclust['pageviews'], firstclust['transactionRevenue'],c=clusters)

axs[1, 0].set_xlabel('pageviews')

axs[1, 0].set_ylabel('transactionRevenue')

# Remove empty subplot

fig.delaxes(axs[1, 1])

# Adjust the spacing between subplots

fig.tight_layout()

# Display the plots

plt.show()

What we find is that there are approximately 4 clusters based on visitNumbers, transaction revenue and pageviews.

- (Green): Low pageviews, lower transaction revenue, low visitNumbers

- (Yellow): Low-medium pageviews, low visitNumbers and medium transaction revenue

- (Dark blue): High visitNumbers, medium transactionRevenue and very few pageViews

- (Dark green): Low to medium pageviews, High transactionRevenue, high visitNumbers

Until now we have been only using 3 numerical variables to find clusters. Now we can use the other dataset related to this Google store that includes more numerical variables like Sessions, Average Session Duration, Bounce Rate, Transactions and Goal Conversion Rate.

We load these and merge them into our dataset “renamed”, which was prepared for feature selection.

train_store_1 = pd.read_csv('Train_external_data.csv', low_memory=False, skiprows=6, dtype={"Client Id":'str'})

train_store_2 = pd.read_csv('Train_external_data_2.csv', low_memory=False, skiprows=6, dtype={"Client Id":'str'})

The way to merge this is through the Client Id, but using only the part after the ‘.’, which matches the visitId in our original renamed dataset.

# Loop variable named ext_df so that it does not overwrite the original df

for ext_df in [train_store_1, train_store_2]:

    ext_df["visitId"] = ext_df["Client Id"].apply(lambda x: x.split('.', 1)[1]).astype(np.int64)

merged = renamed.merge(pd.concat([train_store_1, train_store_2], sort=False), how="left", on="visitId")
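To illustrate the key extraction and the left merge on a small scale, here is a sketch with two invented Client Id values, assumed to follow a `<prefix>.<visitId>` pattern. The column values are hypothetical.

```python
import numpy as np
import pandas as pd

# Hypothetical external rows; the Client Id values are invented.
external = pd.DataFrame({
    "Client Id": ["1234567890.1472830385", "987654321.1472880147"],
    "Sessions": [3, 1],
})

# Split once on the first '.', keep the part after it, and cast to int64.
external["visitId"] = (
    external["Client Id"].apply(lambda x: x.split(".", 1)[1]).astype(np.int64)
)

# Hypothetical visits table; the second visitId has no external match.
visits = pd.DataFrame({
    "visitId": np.array([1472830385, 1472999999], dtype=np.int64),
    "pageviews": [5, 2],
})

# A left merge keeps every visit and leaves NaN where nothing matches.
merged_toy = visits.merge(external, how="left", on="visitId")
print(merged_toy)
```

Because the merge is a left join, visits without a matching external row are kept with missing values in the new columns, which is where the NaN values handled later come from.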

We can still drop the categorical variables and other ids as before

secondclust = merged.drop(['visitorID','channel','hits', 'date', 'sessionId',

'visitStartTime', 'browser','bounces', 'newVisits',

'device', 'os',

'city', 'continent', 'country',

'metro', 'networkDomain', 'region',

'subContinent', 'adContent', 'campaign', 'keyword',

'medium', 'referralPath',

'source','Revenue','Hour','Client Id'], axis=1)

We still need to log transform transactionRevenue, convert Avg.Session Duration to seconds and also remove the % symbols from Bounce Rate and Goal Conversion Rate

#Converted transactions into log

secondclust['transactionRevenue'] = np.log(secondclust['transactionRevenue'])

In this clustering attempt, we assign the Avg. Session Duration to be 0 if it is missing.

secondclust['Avg. Session Duration'] = secondclust['Avg. Session Duration'].apply(lambda x: 0 if isinstance(x, float) else sum(int(i) * 60 ** j for j, i in enumerate(x.split(":")[::-1])))

secondclust["Bounce Rate"] = secondclust["Bounce Rate"].astype(str).apply(lambda x: x.replace('%', '')).astype(float)

secondclust["Goal Conversion Rate"] = secondclust["Goal Conversion Rate"].astype(str).apply(lambda x: x.replace('%', '')).astype(float)

secondclust = secondclust.loc[secondclust['Avg. Session Duration'] != 0]
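The one-line lambdas above are compact but hard to read, so here is a more explicit sketch of the same cleaning on made-up values. `duration_to_seconds` is a hypothetical helper, and dropping the missing durations up front (instead of setting them to 0 and filtering afterwards) is a choice made for this sketch.

```python
import pandas as pd


def duration_to_seconds(text):
    """Convert an 'HH:MM:SS' (or 'MM:SS') string to a number of seconds."""
    seconds = 0
    for part in text.split(":"):
        seconds = seconds * 60 + int(part)
    return seconds


# Hypothetical rows in the same string formats as the external data.
toy = pd.DataFrame({
    "Avg. Session Duration": ["00:01:30", "01:00:05", None],
    "Bounce Rate": ["50.00%", "0.00%", "100.00%"],
})

# Drop rows with a missing duration before parsing the rest.
toy = toy.dropna(subset=["Avg. Session Duration"]).copy()

toy["Avg. Session Duration"] = toy["Avg. Session Duration"].apply(duration_to_seconds)
toy["Bounce Rate"] = toy["Bounce Rate"].str.rstrip("%").astype(float)

print(toy)
```

Dropping first also avoids removing visits whose duration is a genuine 0 seconds, which a filter on `!= 0` cannot tell apart from missing values.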

# Replace missing values with mean

imputer2 = SimpleImputer(strategy='mean')

secondclust[['visitNumber','pageviews','transactionRevenue','Sessions','Avg. Session Duration','Bounce Rate','Transactions','Goal Conversion Rate']] = imputer2.fit_transform(secondclust[['visitNumber','pageviews','transactionRevenue','Sessions','Avg. Session Duration','Bounce Rate','Transactions','Goal Conversion Rate']])

scaler2 = StandardScaler()

df2_scaled = scaler2.fit_transform(secondclust[['visitNumber','pageviews','transactionRevenue','Sessions','Avg. Session Duration','Bounce Rate','Transactions','Goal Conversion Rate']])

kmeans2 = KMeans(n_clusters=5, random_state=42)

clusters2 = kmeans2.fit_predict(df2_scaled)

# Inverse transform to get original data

original_data2 = scaler2.inverse_transform(df2_scaled)

Just like before we visualize the relation between 2 variables at a time in each of the clusters through the scatterplot.

fig, axs = plt.subplots(nrows=3, ncols=2, figsize=(12, 12))

# Plot 1

axs[0, 0].scatter(original_data2[:, 1], original_data2[:, 4], c=clusters2)

axs[0, 0].set_xlabel('Page Views')

axs[0, 0].set_ylabel('Avg. Session Duration')

axs[0, 0].set_title('Visitor Clusters')

# Plot 2

axs[0, 1].scatter(original_data2[:, 2], original_data2[:, 4], c=clusters2)

axs[0, 1].set_xlabel('transactionRevenue')

axs[0, 1].set_ylabel('Avg. Session Duration')

axs[0, 1].set_title('Visitor Clusters')

# Plot 3

axs[1, 0].scatter(original_data2[:, 4], original_data2[:, 5], c=clusters2)

axs[1, 0].set_xlabel('Avg. Session Duration')

axs[1, 0].set_ylabel('Bounce Rate')

# Plot 4

axs[1, 1].scatter(original_data2[:, 5], original_data2[:, 6], c=clusters2)

axs[1, 1].set_xlabel('Bounce Rate')

axs[1, 1].set_ylabel('Transactions')

# Plot 5

axs[2, 0].scatter(original_data2[:, 1], original_data2[:, 5], c=clusters2)

axs[2, 0].set_xlabel('Page Views')

axs[2, 0].set_ylabel('Bounce Rate')

# Plot 6

axs[2, 1].scatter(original_data2[:, 1], original_data2[:, 6], c=clusters2)

axs[2, 1].set_xlabel('Page Views')

axs[2, 1].set_ylabel('Transactions')

plt.show()

Based on the results of this clustering, we could say there are 3 clear clusters: dark purple, green and dark blue.

- (Green): Low pageviews, low session duration, low BounceRate, few transactions

- (Dark blue): Low session duration, few transactions, low to medium pageviews

- (Purple): Low to medium page views, low bounce rate, medium to high transaction revenue, generally high session duration

NOTE: Replacing the NaN values of Avg. Session Duration with 0 may not make sense. For example, some of these visits with NaN durations have high transactionRevenue and PageViews. So a simpler option is to remove these NaN rows altogether first.

Now we include the categorical variables as well, and see what the clusters look like.

We first select the dataframe for clustering with the necessary variables. This includes the numerical variables we previously selected, and new categorical variables. But we don’t want to select all categorical variables as some may have too many categories, like cities or metros.

Let’s only choose ‘channel’, ‘browser’, ‘device’, ‘os’, ‘continent’ and ‘campaign’, as these have a manageable number of categories.

fourthclust = merged.drop(['visitorID','hits', 'date', 'sessionId',

'visitStartTime','bounces', 'newVisits',

'city', 'country',

'metro', 'networkDomain', 'region',

'subContinent', 'adContent', 'keyword',

'medium', 'referralPath',

'source','Revenue','Hour','Client Id'], axis=1)

# Convert categorical variables to binary features using one-hot encoding

ohe = OneHotEncoder(sparse_output=False)  # on scikit-learn older than 1.2, use sparse=False instead

cat_vars = ['channel', 'browser','device', 'os','continent','campaign']

cat_data = ohe.fit_transform(fourthclust[cat_vars])

cat_df = pd.DataFrame(cat_data, columns=ohe.get_feature_names_out(cat_vars))

numandcat = pd.concat([fourthclust, cat_df], axis=1)

catclust = numandcat.drop(cat_vars, axis=1) #Removing the original categorical variables

#Dropping the NaN values

catclusttemp = catclust.dropna().copy()  # copy so the column assignments below do not trigger SettingWithCopyWarning

#Now cleaning the same numerical variables

catclusttemp['Avg. Session Duration'] = catclusttemp['Avg. Session Duration'].apply(lambda x: sum(int(i) * 60 ** j for j, i in enumerate(x.split(":")[::-1])))

catclusttemp["Bounce Rate"] = catclusttemp["Bounce Rate"].astype(str).apply(lambda x: x.replace('%', '')).astype(float)

catclusttemp["Goal Conversion Rate"] = catclusttemp["Goal Conversion Rate"].astype(str).apply(lambda x: x.replace('%', '')).astype(float)

# Log transform the 'transactionRevenue' column

catclusttemp['transactionRevenue'] = np.log(catclusttemp['transactionRevenue'])

# Scale all the numerical variables

scaler = StandardScaler()

df4_scaled = scaler.fit_transform(catclusttemp[['visitNumber','pageviews','transactionRevenue','Sessions','Avg. Session Duration','Bounce Rate','Transactions','Goal Conversion Rate']])

catencoded = catclusttemp.drop(['visitId','visitNumber','pageviews','transactionRevenue','Sessions','Avg. Session Duration','Bounce Rate','Transactions','Goal Conversion Rate'], axis=1)

#A minor matter of converting the df4_scaled scaled numericals into a dataframe, as they were an array during the scaling process.

num_vars_scaled = pd.DataFrame(df4_scaled, columns=['visitNumber','pageviews','transactionRevenue','Sessions','Avg. Session Duration','Bounce Rate','Transactions','Goal Conversion Rate'], index=catclusttemp.index)

dataframe = pd.concat([catencoded, num_vars_scaled], axis=1)

# Now finally perform kmeans clustering

kmeans = KMeans(n_clusters=6, random_state=42)

clusters4 = kmeans.fit_predict(dataframe)

To visualise the distribution of the categorical variables in each of the clusters, we have to first attach the clusters to the dataset with the original categorical variables.

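# Select exactly the rows that were clustered, so the labels line up one-to-one

final_og_df = numandcat.loc[catclusttemp.index].copy()

final_og_df['Cluster'] = clusters4

forcatviz = final_og_df[['channel','browser','device','os','continent','campaign','Cluster']]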

# Create the figure and axes

fig, axes = plt.subplots(3, 2, figsize=(12, 16))

# Plot 1

ax1 = axes[0, 0]

ct1 = pd.crosstab(forcatviz['Cluster'], forcatviz['channel'], normalize='index')

ct1.plot(kind='bar', stacked=True, figsize=(10, 6), ax=ax1)

ax1.set_title("Distribution of channel by Cluster")

ax1.set_xlabel('Cluster')

ax1.set_ylabel('Proportion')

# Plot 2

ax2 = axes[0, 1]

ct2 = pd.crosstab(forcatviz['Cluster'], forcatviz['browser'], normalize='index')

ct2.plot(kind='bar', stacked=True, figsize=(10, 6), ax=ax2)

ax2.set_title("Distribution of browser by Cluster")

ax2.set_xlabel('Cluster')

ax2.set_ylabel('Proportion')

# Plot 3

ax3 = axes[1, 0]

ct3 = pd.crosstab(forcatviz['Cluster'], forcatviz['device'], normalize='index')

ct3.plot(kind='bar', stacked=True, figsize=(10, 6), ax=ax3)

ax3.set_title("Distribution of device by Cluster")

ax3.set_xlabel('Cluster')

ax3.set_ylabel('Proportion')

# Plot 4

ax4 = axes[1, 1]

ct4 = pd.crosstab(forcatviz['Cluster'], forcatviz['os'], normalize='index')

ct4.plot(kind='bar', stacked=True, figsize=(10, 6), ax=ax4)

ax4.set_title("Distribution of os by Cluster")

ax4.set_xlabel('Cluster')

ax4.set_ylabel('Proportion')

# Plot 5

ax5 = axes[2, 0]

ct5 = pd.crosstab(forcatviz['Cluster'], forcatviz['continent'], normalize='index')

ct5.plot(kind='bar', stacked=True, figsize=(10, 6), ax=ax5)

ax5.set_title("Distribution of continent by Cluster")

ax5.set_xlabel('Cluster')

ax5.set_ylabel('Proportion')

# Plot 6

ax6 = axes[2, 1]

ct6 = pd.crosstab(forcatviz['Cluster'], forcatviz['campaign'], normalize='index')

ct6.plot(kind='bar', stacked=True, figsize=(10, 6), ax=ax6)

ax6.set_title("Distribution of campaign by Cluster")

ax6.set_xlabel('Cluster')

ax6.set_ylabel('Proportion')

fig.tight_layout()

plt.show()

Visualising the distribution of categorical variables in each cluster:

- Cluster 4 seems to be exclusively Mac users who click on external links to the Google Store, on Chrome browsers on their desktops

- Cluster 0 has the most mobile visitors, who use Safari on an iPhone

- Cluster 3 has the most Android phone visitors, the most organic search visitors and also the most Windows users

- Cluster 1 has the most Linux users, Direct visits, some mobile users and some Safari users
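The stacked bars above are built from row-normalised crosstabs. As a small illustration of what `normalize='index'` does, here is a sketch on five invented rows:

```python
import pandas as pd

# Hypothetical cluster labels and device categories.
toy = pd.DataFrame({
    "Cluster": [0, 0, 0, 1, 1],
    "device": ["desktop", "desktop", "mobile", "mobile", "mobile"],
})

# normalize='index' divides each row by its total, so every cluster sums to 1.
shares = pd.crosstab(toy["Cluster"], toy["device"], normalize="index")
print(shares)
```

Because each row is a share within its own cluster, the bars compare the mix of categories across clusters and say nothing about how large each cluster is.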

catclusttemp['Cluster'] = clusters4

# Visualize the clusters on the original_data, 2 numerical variables at a time

fig, axs = plt.subplots(nrows=3, ncols=2, figsize=(12, 12))

# Plot 1

axs[0, 0].scatter(catclusttemp['pageviews'], catclusttemp['Avg. Session Duration'], c=catclusttemp['Cluster'])

axs[0, 0].set_xlabel('Page Views')

axs[0, 0].set_ylabel('Avg. Session Duration')

axs[0, 0].set_title('Visitor Clusters')

# Plot 2

axs[0, 1].scatter(catclusttemp['transactionRevenue'], catclusttemp['Avg. Session Duration'], c=catclusttemp['Cluster'])

axs[0, 1].set_xlabel('transactionRevenue')

axs[0, 1].set_ylabel('Avg. Session Duration')

axs[0, 1].set_title('Visitor Clusters')

# Plot 3

axs[1, 0].scatter(catclusttemp['Avg. Session Duration'], catclusttemp['Bounce Rate'], c=catclusttemp['Cluster'])

axs[1, 0].set_xlabel('Avg. Session Duration')

axs[1, 0].set_ylabel('Bounce Rate')

# Plot 4

axs[1, 1].scatter(catclusttemp['Bounce Rate'], catclusttemp['Transactions'], c=catclusttemp['Cluster'])

axs[1, 1].set_xlabel('Bounce Rate')

axs[1, 1].set_ylabel('Transactions')

# Plot 5

axs[2, 0].scatter(catclusttemp['pageviews'], catclusttemp['Bounce Rate'], c=catclusttemp['Cluster'])

axs[2, 0].set_xlabel('Page Views')

axs[2, 0].set_ylabel('Bounce Rate')

# Plot 6

axs[2, 1].scatter(catclusttemp['pageviews'], catclusttemp['Transactions'], c=catclusttemp['Cluster'])

axs[2, 1].set_xlabel('Page Views')

axs[2, 1].set_ylabel('Transactions')

Numerical variables in each cluster

- (Green): Low pageviews, lower Avg. Session Duration, low bounce rate, low-medium transactionRevenue

- (Yellow): Medium pageviews and Avg. Session Duration, medium-high transaction revenue, low bounce rate

- (Purple): Low pageviews and session duration, medium to high transactionRevenue and high bounce rate

- (Dark green): Not very clear, coincides with others in these 2 dimensional visualisations

Based on this final clustering, it is possible to separate the website visitors into clusters based on the collection of available features — both categorical and numerical — that are typical of their behaviors. For example, the 4 main clusters that could be of use to a marketing team could be:

Cluster 1 : Visitors are Mac users who arrive at the Google Store from external links to the Google Store, on Chrome browsers in their desktops AND view only a few pages, don’t spend much time on them but spend a moderate amount of money

So an appropriate strategy for this cluster could be phrased as: “Let’s create content and product info that is Apple compatible, that can be shared by other websites, and not have each page be too long”

Cluster 2 : Most mobile visitors are in this cluster. They use Safari on an iPhone, view a moderate number of pages, spend a decent amount of time on the session and also spend a decent amount of money.

For which, a strategy could be: “Let’s mobile-optimize most of our content, focus on products that are also Apple-compatible, spread across a few pages and with enough detail to help them make the purchase”

Cluster 3 : Most Android phone visitors and most Windows users are in this cluster. They tend to search on Google and click links to land on the Google Store, view only a few pages and spend little time, but spend a lot of money.

Where the strategy could be phrased as: “Let’s also focus on Android and non-Mac users, create SEO optimized content but not too many pages of them”

Cluster 4 : Has the most Linux users, Direct visits, some mobile users and some Safari users but all other behaviour remains similar to cluster 1.

For which the approach could be: “Maybe it is also worth appealing to Linux users, especially those who return a lot, on mobile”

This analysis shows an approach to website audience clustering that marketers can adopt. It uses unsupervised machine learning in the form of the kMeans clustering algorithm.

Roughly speaking, the idea is to give marketers a data-driven method to categorize their website audience into different groups, while taking into account all the available features of their visits at the same time, instead of one or two.

It is important to note however, that the clustering approach can also be subjective to a certain extent, so marketing teams can choose a clustering that best fits their needs. For example, the very choice of the number of clusters is something that needs to be arrived at based on an educated guess, experience with real world interactions with customers or industry best practices.
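If a team wants a numerical sanity check alongside that judgment, two common heuristics are the inertia ("elbow") curve and the silhouette score. The sketch below is not something this analysis did. It runs on three synthetic blobs, and the range of k values is an arbitrary assumption.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.metrics import silhouette_score

# Three well-separated synthetic blobs of 60 points each.
rng = np.random.default_rng(0)
centers = [(0, 0), (6, 0), (0, 6)]
X = np.vstack([rng.normal(loc=c, scale=0.5, size=(60, 2)) for c in centers])

inertias, silhouettes = {}, {}
for k in range(2, 7):
    model = KMeans(n_clusters=k, n_init=10, random_state=42).fit(X)
    inertias[k] = model.inertia_                         # within-cluster sum of squares
    silhouettes[k] = silhouette_score(X, model.labels_)  # higher means better separated

# The k with the highest silhouette score is one candidate choice.
best_k = max(silhouettes, key=silhouettes.get)
print(best_k)
```

On real visitor data the curves are rarely this clean, so these scores are best treated as one input next to the domain knowledge described above, not as a replacement for it.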

It is also worth remembering that the 2D-visualizations we see above may not clearly show a separation between the observations in each cluster. But this may not be a major issue as in the N-Dimensional space of all the features included in the clustering algorithm, it may still show clear and distinct clusters.

This analysis can also be improved further by ingesting additional data from other sources. For example, since the above clustering is still purely based on web analytics data, it can be combined with CRM data or other sales data acquired through sales teams, surveys or feedback forms. This would give a richer set of variables that capture audience and buyer characteristics more closely. It would allow marketers, for example, to run more targeted ad campaigns for high-spending customers.