Emotion recognition using machine learning is an exciting and practical field that combines computer vision and artificial intelligence to interpret human emotions. In this tutorial, we’ll explore how to build a real-time emotion recognition system in Python. We will focus on detecting faces in a video stream and classifying their emotions with a pre-trained model.

Emotion recognition technology has advanced rapidly and now appears in many software domains. In customer service, emotion detection can gauge customer satisfaction and help tailor responses, improving the user experience. In mental health, apps can use it to monitor patients’ emotional states and offer timely support or intervention. In marketing and advertising, understanding emotional responses to content can inform personalized strategies and improve engagement. In educational software, emotion recognition can help adapt learning material to the learner, potentially making it more effective. In entertainment and gaming, it can create more immersive, interactive experiences by adjusting content in real time based on the player’s emotional state. Together, these applications show how emotion recognition can make software more interactive, personalized and responsive.

GitHub link: https://github.com/tonserrobo/Emotion-detection

Before diving into the code, make sure you have the following:

- Python: A Python operating environment (Python 3.6 or later recommended).

- Libraries: TensorFlow, OpenCV, MTCNN and NumPy. Install them using pip if you haven’t already:

pip install tensorflow opencv-python mtcnn numpy

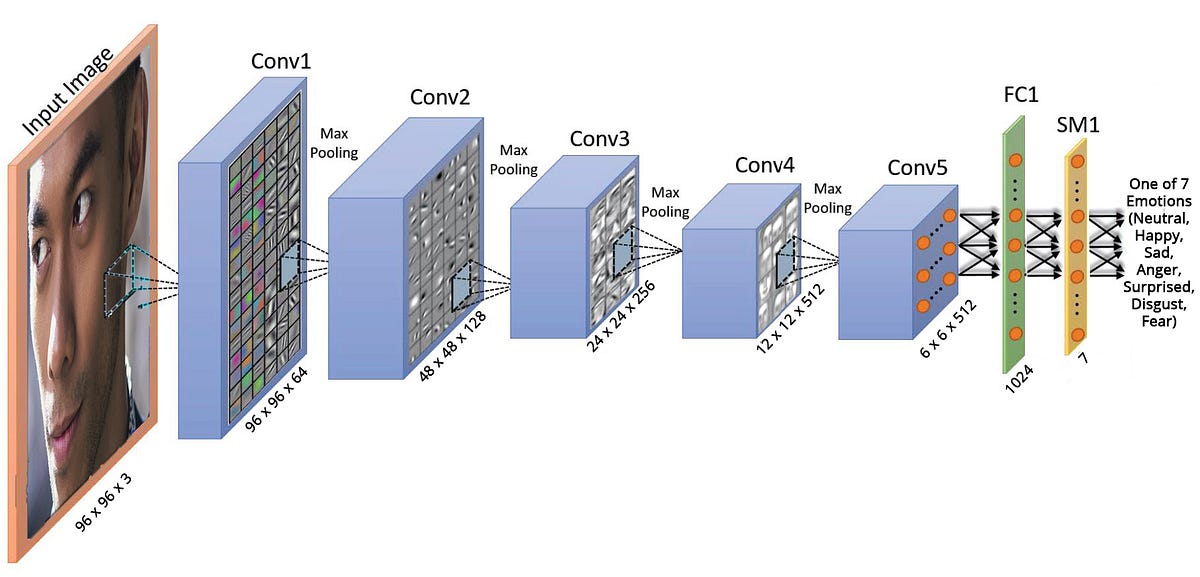

3. Pre-trained model: We will use a VGGFace model trained on the FER2013 dataset. You can download the model from here.

1. Clone the repository: Start by cloning the repository containing the necessary code and model:

```bash
git clone https://github.com/travistangvh/emotion-detection-in-real-time.git
```

2. Install dependencies: Change to the cloned directory and install the required libraries:

```bash
pip install -r ../REQUIREMENTS.txt
```

3. Place the model: Put the downloaded `trained_vggface.h5` model in `../datasets/trained_models/`.
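If the script fails at startup, the usual culprit is that the model file isn’t where the loader expects it. The relative path `../datasets/trained_models/trained_vggface.h5` is resolved against the directory you run the script from, not the script’s own location. Below is a minimal sketch, using only the standard library, that checks this up front. `find_model` is a hypothetical helper and is not part of the repository.

```python
from pathlib import Path

# Path used by the loader in this tutorial; relative paths resolve against the
# current working directory, not the script's location.
MODEL_PATH = Path('../datasets/trained_models/trained_vggface.h5')


def find_model(path=MODEL_PATH):
    """Hypothetical helper: return the absolute model path or raise a clear error."""
    resolved = Path(path).resolve()
    if not resolved.is_file():
        raise FileNotFoundError(
            f"Model not found at {resolved} (working directory: {Path.cwd()}). "
            "Run the script from the expected directory or move "
            "trained_vggface.h5 there."
        )
    return resolved
```

Calling `find_model()` just before `models.load_model(...)` replaces a cryptic loader error with a message that shows exactly where Python looked.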

The core of this project is in `emotion_webcam_demo.py`. Here’s a breakdown of what the script does:

- Model loading: The VGGFace model is loaded with TensorFlow.

- Webcam feed: Video is captured from the webcam with OpenCV.

- Face detection: MTCNN detects faces in each frame of the video stream.

- Emotion classification: Each detected face is passed to the model, which classifies its emotion.

- Radar graph visualization: A radar graph is drawn on the video frame to show the predicted probability of each emotion.
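The classification step produces a probability vector with one entry per emotion class. FER2013 labels its images with seven classes, conventionally indexed 0 to 6 as angry, disgust, fear, happy, sad, surprise and neutral. The sketch below assumes the model’s output follows that order, so check the repository before relying on it. `EMOTIONS` and `decode_emotions` are illustrative names, not part of the project.

```python
# Assumed FER2013 class order (indices 0-6); verify against the model you use.
EMOTIONS = ('angry', 'disgust', 'fear', 'happy', 'sad', 'surprise', 'neutral')


def decode_emotions(probabilities, labels=EMOTIONS):
    """Pair each probability with its label; return (top_label, {label: prob})."""
    probs = [float(p) for p in probabilities]
    if len(probs) != len(labels):
        raise ValueError(f"expected {len(labels)} probabilities, got {len(probs)}")
    scores = dict(zip(labels, probs))
    # The label with the highest probability is the predicted emotion.
    top_label = max(scores, key=scores.get)
    return top_label, scores
```

With a Keras model, `trained_model.predict(batch)[0]` gives the probability row for the first face in the batch, and you can pass it straight to `decode_emotions`. The `scores` dictionary is also the input the radar graph needs.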

Let’s dive into the main aspects of the code:

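1. Load the model and dependencies: Import the necessary libraries and load the pre-trained model.

```python
import cv2
import numpy as np
from tensorflow.keras import models
from mtcnn.mtcnn import MTCNN

# Load the pre-trained VGGFace emotion model. compile=False skips restoring the
# training configuration, which is not needed for inference.
trained_model = models.load_model('../datasets/trained_models/trained_vggface.h5', compile=False)
```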

2. Start the webcam: Open the default webcam (device index 0) for video capture.

```python
cap = cv2.VideoCapture(0)
```

3. Real-time face detection and emotion classification: Process each video frame, detect faces and classify their emotions.

```python
while True:
    ret, frame = cap.read()  # ret is False when no frame could be read
    if not ret:
        break

    # Process frame for face detection and emotion classification
    # ...
```
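The elided processing step usually has two parts: crop each detected face, then convert the crop into the shape the model expects. MTCNN’s `detect_faces` returns a list of dictionaries whose `'box'` entry is `[x, y, width, height]`, and those coordinates can occasionally fall outside the frame (including negative values), so it is safer to clamp them before slicing. The sketch below uses only NumPy. It assumes the model takes 48×48 single-channel images scaled to [0, 1], which matches FER2013’s native format but is only an assumption about `trained_vggface.h5`. Check the real input shape with `trained_model.summary()` and adjust. `clamp_box` and `preprocess_face` are hypothetical names.

```python
import numpy as np


def clamp_box(box, frame_width, frame_height):
    """Clip an MTCNN-style [x, y, w, h] box to the frame; return (x1, y1, x2, y2)."""
    x, y, w, h = (int(v) for v in box)
    x1, y1 = max(0, x), max(0, y)
    x2, y2 = min(frame_width, x + w), min(frame_height, y + h)
    return x1, y1, x2, y2


def preprocess_face(frame, box, size=48):
    """Crop a face from a BGR frame and return a (1, size, size, 1) float32 batch.

    Assumes grayscale input scaled to [0, 1]; adapt to your model's real input.
    Returns None if the clamped box is empty.
    """
    height, width = frame.shape[:2]
    x1, y1, x2, y2 = clamp_box(box, width, height)
    if x2 <= x1 or y2 <= y1:
        return None
    face = frame[y1:y2, x1:x2].astype(np.float32)
    # BGR -> grayscale with BT.601 luma weights (the weights OpenCV uses).
    gray = face[..., 0] * 0.114 + face[..., 1] * 0.587 + face[..., 2] * 0.299
    # Nearest-neighbour resize by sampling row/column indices (cv2.resize also works).
    rows = (np.arange(size) * gray.shape[0] / size).astype(int)
    cols = (np.arange(size) * gray.shape[1] / size).astype(int)
    resized = gray[np.ix_(rows, cols)]
    return (resized / 255.0).reshape(1, size, size, 1)
```

MTCNN is normally given an RGB image, while OpenCV frames are BGR. Inside the loop, a call might look like `detector.detect_faces(cv2.cvtColor(frame, cv2.COLOR_BGR2RGB))`, where `detector = MTCNN()`. You would then pass each result’s `'box'` to `preprocess_face` and feed the returned batch to the model.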

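4. Display results: Show the annotated frame in a window and draw the radar plot of emotion probabilities. Pressing `q` exits the loop.

```python
    # Inside the while loop: show the frame and stop when 'q' is pressed
    cv2.imshow('Video', frame)
    if cv2.waitKey(1) & 0xFF == ord('q'):
        break
```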
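The radar graph is mostly geometry. Each emotion gets an axis at an evenly spaced angle, and its probability sets how far along that axis the vertex sits. Here is a minimal pure-Python sketch of that calculation. `radar_points` is a hypothetical helper, and the start angle (first axis pointing up) and pixel radius are arbitrary choices, not taken from the project.

```python
import math


def radar_points(probabilities, center, radius):
    """Return integer (x, y) vertices of a radar polygon in image coordinates.

    Axis i sits at angle -90 degrees + i * 360/n degrees, so the first axis points
    straight up (image y grows downwards). Each vertex lies probability * radius
    pixels from the center along its axis.
    """
    n = len(probabilities)
    cx, cy = center
    points = []
    for i, p in enumerate(probabilities):
        angle = -math.pi / 2 + 2 * math.pi * i / n
        r = float(p) * radius
        points.append((round(cx + r * math.cos(angle)), round(cy + r * math.sin(angle))))
    return points
```

With OpenCV, you could draw the vertices as a closed outline with `cv2.polylines(frame, [np.array(points, dtype=np.int32)], isClosed=True, color=(0, 255, 0), thickness=2)`. Drawing the same polygon with every probability set to 1.0 gives the outer frame of the chart.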

5. Cleanup: Release the webcam and close all OpenCV windows.

```python
cap.release()
cv2.destroyAllWindows()
```
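If an exception is raised inside the loop (during prediction, for example), the cleanup lines never run and the camera can stay locked. Wrapping the loop in `try`/`finally` guarantees the release. The sketch below is a restructuring suggestion, not the project’s code. `run_capture_loop` and its `handle_frame` callback are hypothetical names, and the capture object only needs `read()` and `release()` methods. That means it works with `cv2.VideoCapture` or with a stand-in object for testing.

```python
def run_capture_loop(cap, handle_frame):
    """Read frames until the stream ends or handle_frame returns False.

    The capture is always released, even if handle_frame raises.
    Returns the number of frames passed to handle_frame.
    """
    handled = 0
    try:
        while True:
            ret, frame = cap.read()
            if not ret:
                break
            handled += 1
            if handle_frame(frame) is False:
                break
    finally:
        cap.release()
    return handled
```

With OpenCV, `handle_frame` would call `cv2.imshow` and return `False` when `cv2.waitKey(1) & 0xFF == ord('q')`. `cv2.destroyAllWindows()` can then run after the call.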

To see emotion recognition in action:

1. Go to the directory that contains `emotion_webcam_demo.py`.

2. Run the script:

```bash
python3 emotion_webcam_demo.py
```

3. You should see a window showing the webcam feed, with emotions detected and classified in real time. Press `q` to close it.

Congratulations! You’ve just built a real-time emotion recognition system in Python. You can extend it with more features or integrate it into larger projects, such as interactive installations, user behavior analysis or customer experience tools.

Remember, the key to a successful implementation is understanding the model, the data it was trained on, and the limitations of current technology in interpreting complex human emotions. Happy coding!