The most inspiring part of my role is traveling the world, meeting our customers from every sector and watching, learning, collaborating with them as they build GenAI solutions and put them into production. It is exciting to see our customers actively promoting their GenAI journey. But many in the market are not, and the gap is growing.

AI leaders are rightly struggling to move beyond the prototype and experimental stage, it’s our mission to change that. At DataRobot, we call this the “trust gap.” It’s the trust, security and accuracy and concerns around GenAI that are holding teams back, and we’re committed to addressing that. And, it’s the focus of the Spring ’24 launch and its groundbreaking features.

This edition focuses on the three most significant barriers to unlocking value with GenAI.

First, we offer you open source LLM support for enterprises and a range of evaluation and testing metrics to help you and your teams confidently build production-grade AI applications. To help you protect your reputation and prevent risk from AI applications running aground, we offer real-time intervention and monitoring for all your GenAI applications. And finally, to ensure your entire fleet of AI assets remains at peak performance, we offer you a first-of-its-kind multi-cloud and hybrid AI Observability to help you fully govern and optimize all your AI investments .

Build production-grade AI applications with confidence

There is a lot of talk about perfecting an LLM. However, we’ve seen that the real value lies in the detail of your AI creation application. It’s hard, though. Unlike predictive AI, which has thousands of easily accessible models and common data science metrics for benchmarking and performance evaluation, genetic AI has not—until now.

Unlike predictive AI, which has thousands of easily accessible models and common data science metrics to benchmark and evaluate performance, genetic AI has not—until now.

In our Spring ’24 release, get enterprise-grade support for any open source LLM. We have also introduced a whole set of LLM assessment, tests and metrics. Now, you can fine-tune the experience of building AI applications, ensuring their reliability and effectiveness.

Enterprise-Grade Open Source LLM Hosting

Privacy, control and flexibility remain critical for all organizations regarding LLMs. There was no easy answer for AI leaders stuck with having to choose between the risks of vendor lock-in using large API-based LLMs that could become suboptimal and expensive in the near future, figuring out how to stand up and host the your own open source LLM or create, host and maintain your own LLM.

With our Spring Launch, you have access to the widest selection of LLMs, allowing you to choose the one that aligns with your security requirements and use cases. Not only do you have ready-to-use access to LLMs from leading providers such as Amazon, Google and Microsoft, but you also have the flexibility to host your own custom LLMs. In addition, the Spring ’24 Launch offers enterprise-level access to open source LLMs, further expanding your options.

We’ve made it easy to host and use core open source models like LLaMa, Falcon, Mistral, and Hugging Face with built-in LLM security and DataRobot resources. We’ve done away with complex and demanding manual DevOps integrations and made it as easy as a dropdown.

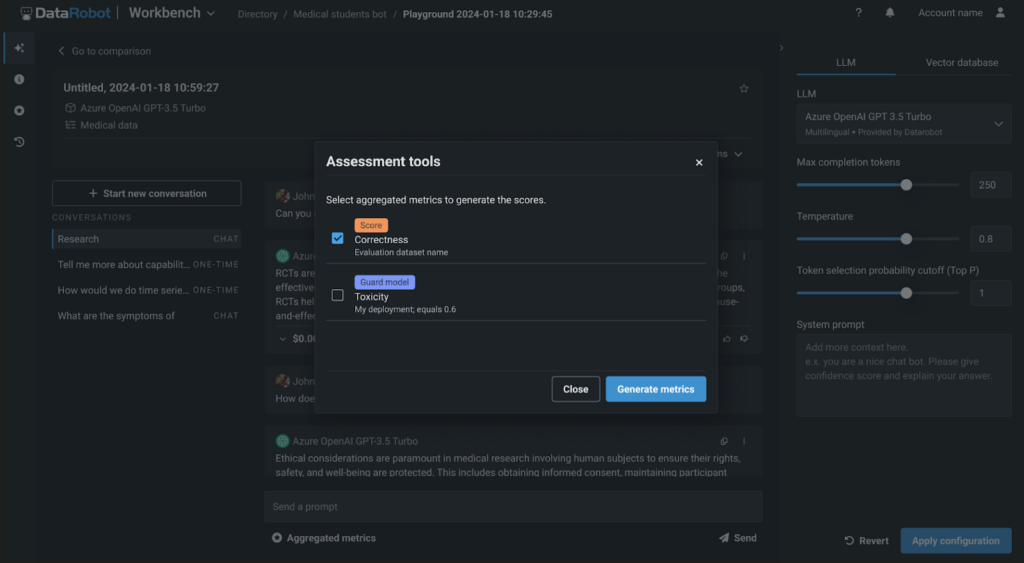

LLM assessment, testing and evaluation metrics

With DataRobot, you can freely choose and experiment in LLM. We also provide you with advanced experimentation options, such as trying different slicing strategies, embedding methods, and vector databases. With our new LLM evaluation, testing and evaluation metrics, you and your teams now have a clear way to validate the quality of your GenAI application and LLM performance in these experiments.

With first-of-its-kind synthetic data generation for immediate and response assessment, you can quickly and effortlessly generate thousands of question-answer pairs. This allows you to easily see how well your RAG experiment performs and stays true to your vector database.

We also give you a whole set of evaluation metrics. You can benchmark, compare performance, and rank your RAG experiments based on fidelity, correctness, and other metrics to create high-quality and valuable GenAI applications.

And with DataRobot, it’s always a matter of choice. You can do all of this as low-code or in our fully hosted notebooks, which also feature a rich set of new ones code functionality that eliminates infrastructure and resource management and facilitates easy collaboration.

Observe and intervene in real time

The biggest concern I hear from AI leaders about genetic AI is reputational risk. There are already many news articles about GenAI apps exposing private data and courts of law holding companies liable for the promises made in GenAI apps. In our Spring ’24 release, we tackled this issue head-on.

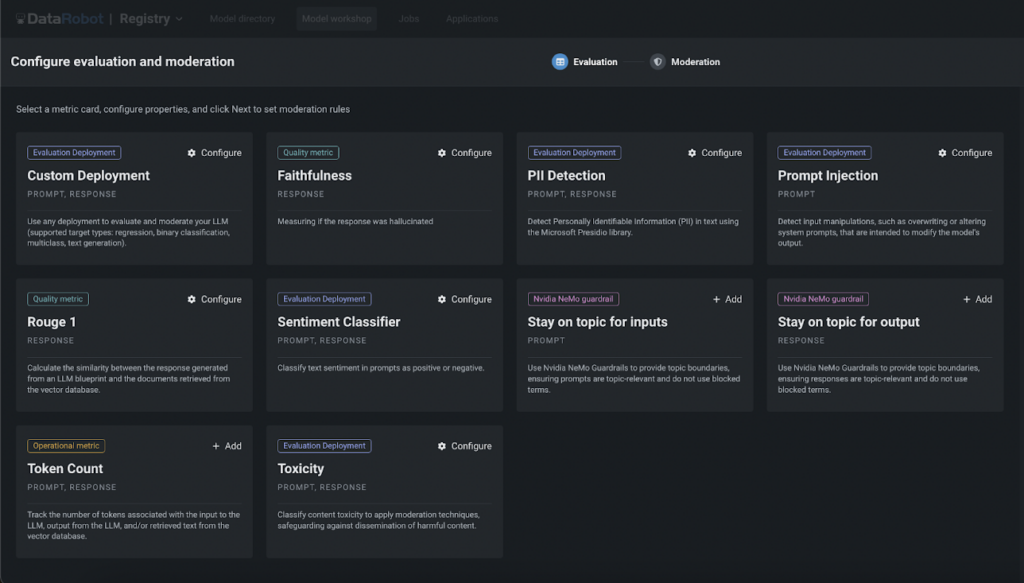

With our rich library of customizable guards, workflows, and alerts, you can build a layered defense to detect and prevent unexpected or unwanted behavior across your entire fleet of GenAI applications in real-time.

Our library of pre-built guards can be fully customized to prevent fast injections and toxicity, detect PII, mitigate hallucinations, and more. Our watchdogs and real-time intervention can be applied to all your production AI applications – even those built outside of DataRobot, giving you peace of mind that your AI components will perform as intended.

Governance and Optimization of Infrastructure Investments

Because of genetic AI, the proliferation of new tools, projects, and AI teams working on them has grown exponentially. I often hear about “shadow GenAI” projects and how AI leaders and IT teams are struggling to master it all. They find it difficult to get a complete view, coupled with complex multi-cloud and hybrid environments. AI’s lack of observability opens organizations up to AI misuse and security risks.

AI Cross-Environmental Observability

We’re here to help you thrive in this new normal where AI exists in multiple environments and locations. With our Spring ’24 launch, we’re bringing first-of-its-kind, cross-environment AI observability – giving you unified security, governance and visibility across cloud and on-premise environments.

Your teams work with the tools and ways they want. AI leaders have the unified governance, security and observability they need to protect their organizations.

Custom notification and notification policies integrate with your choice of tools, from ITSM to Jira and Slack, to help you reduce time to detection (TTD) and time to resolution (TTR).

Insights and graphics help your teams see, diagnose, and troubleshoot issues with your AI components – Easily detect messages in your response and content in your vector database, View AI topic displacement with multiple languages, and more .

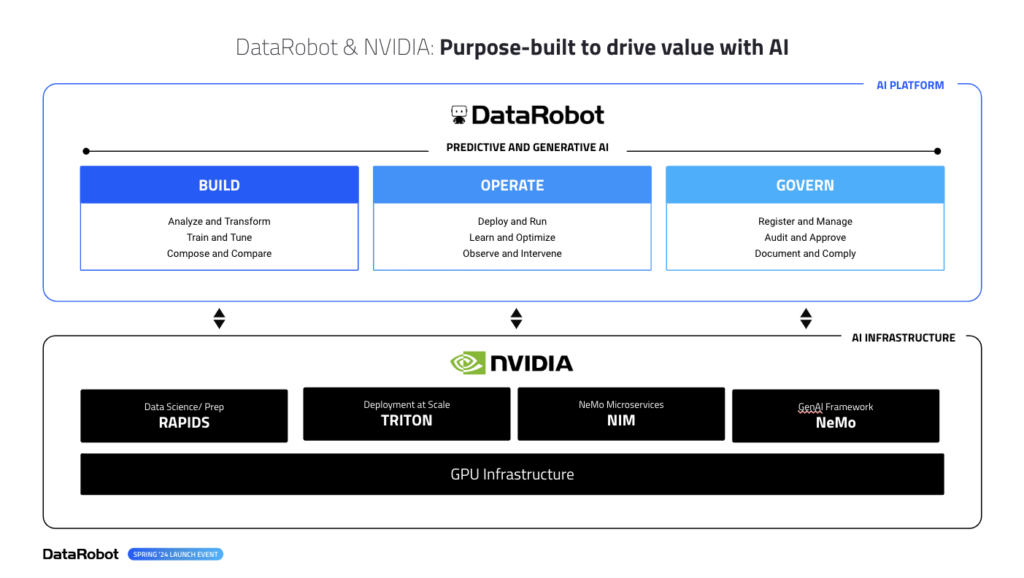

NVIDIA and GPU integrations

And, if you’ve invested in NVIDIA, we’re the first and only AI platform for deep integrations across the entire surface of NVIDIA’s AI infrastructure – from NIMS, NeMoGuard models, to the new Triton inference services, all ready for you at the click of a button. No more managing separate installations or integration points, DataRobot makes it easy to access your GPU investments.

Our Spring ’24 launch is packed with exciting features including GenAI, predictive capabilities, and improvements to time series forecasting, multi-media modeling, and data wrangling.

All of these new capabilities are available in cloud, on-premise and hybrid environments. So whether you’re an AI leader or part of an AI team, our Spring ’24 release lays the foundation for your success.

This is just the beginning of the innovations we bring you. We have much more in store for you in the coming months. Stay tuned as we work hard on the next wave of innovations.

Get started

Learn more about DataRobot’s GenAI solutions and accelerate your journey today.

- Join us Catalyst program to accelerate AI adoption and unlock the full potential of GenAI for your organization.

- See DataRobot’s GenAI solutions in action by programming a demonstration tailored to your specific needs and use cases.

- Explore ours new featuresand connect with your DataRobot Applied AI Expert to get started with them.

About the Author

Venky Veeraraghavan leads the Product Team at DataRobot, where he leads the definition and delivery of DataRobot’s AI platform. Venky has over twenty-five years of experience as a product leader, with previous roles at Microsoft and early stage startup, Trilogy. Venky has spent over a decade building BigData and AI platforms for some of the world’s largest and most complex organizations. He lives, hikes and runs in Seattle, Washington with his family.

Meet Venky Veeraraghavan