We devised TacticAI as a geometric deep learning pipeline, which we describe in this section. We process labelled spatio-temporal football data into graph representations, and train and evaluate models on benchmark tasks cast as classification or regression. These steps are presented in sequence, followed by details on the computational architecture employed.

Raw corner kick data

The raw dataset consisted of 9693 corner kicks collected from the 2020–21, 2021–22, and 2022–23 (up to January 2023) Premier League seasons. The dataset was provided by Liverpool FC and comprises four separate data sources, described below.

Our primary data source is spatio-temporal trajectory frames (tracking data), which track all on-pitch players and the ball, for each match, at 25 frames per second. In addition to player positions, player velocities are derived from the position data through filtering. For each corner kick, we used only the frame in which the kick is taken as input information.

Secondly, we leverage event stream data, which annotates the events or actions (e.g., passes, shots and goals) that occurred in the corresponding tracking frames.

Thirdly, we use the line-up data for the corresponding games, which records the players’ profiles, including their heights, weights and roles.

Lastly, we have access to miscellaneous game data, which contains the game days, stadium information, and pitch length and width in meters.

Graph representation and construction

We assumed that we were provided with an input graph \(\mathcal{G}=(\mathcal{V},\,\mathcal{E})\) with a set of nodes \(\mathcal{V}\) and edges \(\mathcal{E}\subseteq \mathcal{V}\times \mathcal{V}\). Within the context of football games, we took \(\mathcal{V}\) to be the set of 22 players currently on the pitch for both teams, and we set \(\mathcal{E}=\mathcal{V}\times \mathcal{V}\); that is, we assumed all pairs of players have the potential to interact. Further analyses, leveraging more specific choices of \(\mathcal{E}\), would be an interesting avenue for future work.

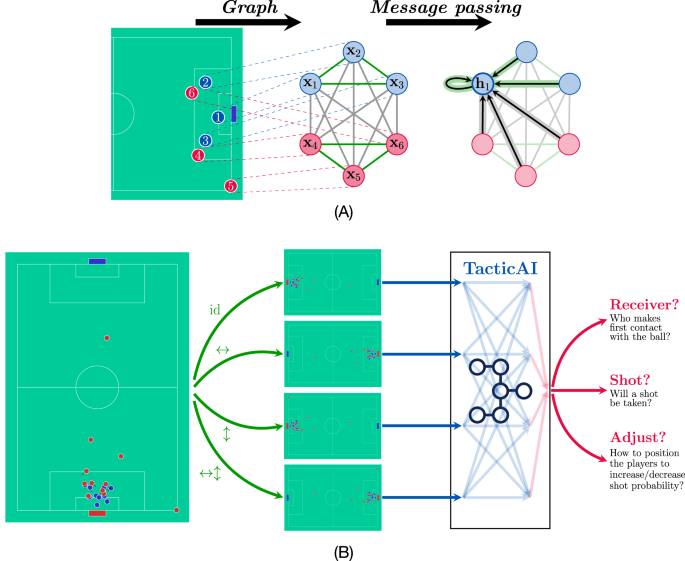

Additionally, we assume that the graph is appropriately featurised. Specifically, we provide a node feature matrix, \(\mathbf{X}\in \mathbb{R}^{|\mathcal{V}|\times k}\), an edge feature tensor, \(\mathbf{E}\in \mathbb{R}^{|\mathcal{V}|\times |\mathcal{V}|\times l}\), and a graph feature vector, \(\mathbf{g}\in \mathbb{R}^{m}\). The appropriate entries of these objects provide us with the input features for each node, edge, and graph. For example, \(\mathbf{x}_{u}\in \mathbb{R}^{k}\) would provide attributes of an individual player \(u\in \mathcal{V}\), such as position, height and weight, and \(\mathbf{e}_{uv}\in \mathbb{R}^{l}\) would provide the attributes of a particular pair of players \((u,\,v)\in \mathcal{E}\), such as their distance, and whether they belong to the same team. The graph feature vector, \(\mathbf{g}\), can be used to store global attributes of interest to the corner kick, such as the game time, current score, or ball position. For a simplified visualisation of how a graph neural network would process such an input, refer to Fig. 1A.
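To make the featurisation concrete, here is a minimal sketch of an edge feature tensor E for the fully connected graph \(\mathcal{E}=\mathcal{V}\times \mathcal{V}\), using the two pair attributes named above (distance and a same-team indicator). The function name `build_edge_features`, the input format (a list of (x, y) positions and a list of team labels) and the choice of exactly these two attributes are illustrative assumptions. The features actually used are summarised in Tables 1 and 2.

```python
import math
from typing import List, Sequence, Tuple

def build_edge_features(positions: Sequence[Tuple[float, float]],
                        teams: Sequence[int]) -> List[List[List[float]]]:
    """Return an |V| x |V| x 2 edge tensor for the fully connected graph E = V x V.

    Illustrative edge attributes (an assumption, not the exact TacticAI set):
    [Euclidean distance between u and v, 1.0 if u and v are on the same team else 0.0].
    Self-pairs (u, u) are included because E = V x V contains them.
    """
    n = len(positions)
    edges: List[List[List[float]]] = []
    for u in range(n):
        row = []
        for v in range(n):
            (xu, yu), (xv, yv) = positions[u], positions[v]
            distance = math.hypot(xu - xv, yu - yv)
            same_team = 1.0 if teams[u] == teams[v] else 0.0
            row.append([distance, same_team])
        edges.append(row)
    return edges

# Three players: two on team 0 and one on team 1.
E = build_edge_features([(0.0, 0.0), (3.0, 4.0), (0.0, 1.0)], [0, 0, 1])
print(E[0][1])  # [5.0, 1.0]
```

For 22 players this yields 22 × 22 = 484 ordered pairs, including the 22 self-pairs.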

To construct the input graphs, we first aligned the four data sources with respect to their game IDs and timestamps. We then filtered out 2517 invalid corner kicks, for which the alignment failed due to missing data, e.g., missing tracking frames or event labels. This filtering yielded 7176 valid corner kicks for training and evaluation. The exact information used to construct the input graphs is summarised in Table 2. In particular, other than player heights (measured in centimeters (cm)) and weights (measured in kilograms (kg)), the players were anonymous in the model. Where player profiles were missing, we set heights and weights to default values of 180 cm and 75 kg, respectively. In total, there were 385 such occurrences out of 213,246 (= 22 × 9693) player entries during data preprocessing. We downscaled the heights and weights by a factor of 100. Moreover, for each corner kick, we zero-centred the positions of on-pitch players and normalised them onto a 10 m × 10 m pitch, and rescaled their velocities accordingly. Where the pitch dimensions were missing, we used a standard pitch size of 110 m × 63 m as the default.
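The per-player preprocessing steps above can be sketched as follows. This is a minimal sketch under stated assumptions. Raw coordinates are taken to be in metres with the origin at a pitch corner, so zero-centring subtracts the pitch centre. `preprocess_player` is a hypothetical helper, not part of any released code.

```python
from typing import Optional, Tuple

DEFAULT_HEIGHT_CM = 180.0
DEFAULT_WEIGHT_KG = 75.0
DEFAULT_PITCH_M = (110.0, 63.0)  # (length, width) used when pitch dimensions are missing
TARGET_SIZE_M = 10.0             # positions are normalised onto a 10 m x 10 m pitch

def preprocess_player(pos: Tuple[float, float], vel: Tuple[float, float],
                      height_cm: Optional[float], weight_kg: Optional[float],
                      pitch: Optional[Tuple[float, float]] = None):
    """Return (x, y, vx, vy, height, weight) for one player at the corner-kick frame."""
    length, width = pitch if pitch is not None else DEFAULT_PITCH_M
    sx, sy = TARGET_SIZE_M / length, TARGET_SIZE_M / width
    # Assumption: raw coordinates are in metres with the origin at a pitch corner,
    # so zero-centring subtracts the pitch centre before rescaling each axis.
    x = (pos[0] - length / 2.0) * sx
    y = (pos[1] - width / 2.0) * sy
    # Velocities are rescaled by the same per-axis factors; no shift is applied to them.
    vx, vy = vel[0] * sx, vel[1] * sy
    # Missing profiles fall back to the defaults, then both values are downscaled by 100.
    height = (height_cm if height_cm is not None else DEFAULT_HEIGHT_CM) / 100.0
    weight = (weight_kg if weight_kg is not None else DEFAULT_WEIGHT_KG) / 100.0
    return x, y, vx, vy, height, weight
```

Under these assumptions, a player at the far corner of a default 110 m × 63 m pitch maps to approximately (5, 5), the corner of the normalised pitch. The counts quoted above are also mutually consistent: 9693 − 2517 = 7176 valid corners, and 22 × 9693 = 213,246 player entries.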

We summarised the grouping of the features in Table 1. The actual features used in different benchmark tasks may differ, as we describe in more detail in the next section. To focus on modelling the high-level tactics played by the attacking and defending teams, no information on ball movement (neither positions nor velocities) was used to construct the input graphs. The only exception is a binary indicator for ball possession, which is 1 for the corner kick taker and 0 for all other players. Additionally, we do not have access to the players’ vertical movement; therefore, the data provide only the two-dimensional movements of each player. We do, however, acknowledge that such information, when available, would be interesting to consider in a corner kick outcome predictor, given the prevalence of aerial battles in corners.

Benchmark tasks construction

TacticAI consists of three predictive and generative models, which correspond to the three benchmark tasks implemented in this study: (1) receiver prediction, (2) threatening shot prediction, and (3) guided generation of team positions and velocities (Table 1). The graphs for all benchmark tasks used the same node and edge feature spaces, differing only in the global features.

For all three tasks, our models first transform the node features to a latent node feature matrix, \(\mathbf{H}=f_{\mathcal{G}}(\mathbf{X},\,\mathbf{E},\,\mathbf{g})\). From this matrix we can answer two kinds of queries. Queries about individual players are answered by a classifier or regressor learned over the \(\mathbf{h}_{u}\) vectors (the rows of \(\mathbf{H}\)). Queries about the occurrence of a global event (e.g. a shot being taken) are answered by classifying or regressing over the aggregated player vectors, \(\sum_{u}\mathbf{h}_{u}\). In both cases, the models were trained using stochastic gradient descent over an appropriately chosen loss function, such as categorical cross-entropy for classifiers and mean squared error for regressors.

For the different tasks, we extracted the corresponding ground-truth labels from either the event stream data or the tracking data. Specifically: (1) We modelled receiver prediction as a node classification task and labelled the first player to touch the ball after the corner was taken as the target node. This player could be either an attacking or a defending player. (2) Shot prediction was modelled as graph classification. In particular, we considered a next-ball-touch action by the attacking team as a shot if it was a direct corner, a goal, an aerial, a hit on the goalposts, a shot attempt saved by the goalkeeper, or a shot missing the target. This yielded 1736 corners labelled as a shot being taken, and 5440 corners labelled as a shot not being taken. (3) For guided generation of player positions and velocities, no additional label was needed, as this model relied on a self-supervised reconstruction objective.
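A sketch of how the receiver and shot labels might be read off an aligned event stream is given below. Several details are hypothetical assumptions: the event-record format (dictionaries with `is_touch`, `player`, `team` and `type` keys), the event-type strings in `SHOT_TYPES`, and the reading that only the first touch after the kick is inspected. The data provider’s actual schema is not described here.

```python
from typing import Dict, List, Optional

# Hypothetical event-type strings for the shot categories listed above.
SHOT_TYPES = {"direct_corner", "goal", "aerial", "post", "saved_shot", "miss"}

def receiver_label(events_after_kick: List[Dict]) -> Optional[int]:
    """Index of the first player (attacker or defender) to touch the ball after the kick."""
    for event in events_after_kick:
        if event.get("is_touch"):
            return event["player"]
    return None  # no touch recorded after the kick

def shot_label(events_after_kick: List[Dict], attacking_team: int) -> int:
    """1 if the next ball touch is made by the attacking team and is shot-like, else 0."""
    for event in events_after_kick:
        if event.get("is_touch"):
            return int(event["team"] == attacking_team and event["type"] in SHOT_TYPES)
    return 0
```

As a sanity check, the two shot classes sum to the number of valid corners: 1736 + 5440 = 7176.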

The entire dataset was split into training and evaluation sets with an 80:20 ratio through random sampling, and the same splits were used for all tasks.
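Here is a minimal sketch of such a split. It assumes a seeded shuffle, so that the same partition can be reused across all three tasks. The seed value and the helper name `split_indices` are hypothetical.

```python
import random
from typing import List, Tuple

def split_indices(n: int, train_fraction: float = 0.8, seed: int = 0) -> Tuple[List[int], List[int]]:
    """Randomly split corner-kick indices into training and evaluation sets."""
    indices = list(range(n))
    # A fixed seed (hypothetical value) makes the split reproducible, so every task reuses it.
    random.Random(seed).shuffle(indices)
    cut = round(train_fraction * n)
    return indices[:cut], indices[cut:]

train_idx, eval_idx = split_indices(7176)
```

With 7176 valid corners, rounding 80% gives 5741 training and 1435 evaluation corners under this sketch. The exact split sizes used in the study are not stated here.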

Graph neural networks

The central model of TacticAI is the graph neural network (GNN)9, which computes latent representations on a graph by repeatedly combining them within each node’s neighbourhood. Here we define a node’s neighbourhood, \(\mathcal{N}_{u}\), as the set of all first-order neighbours of node u, that is, \(\mathcal{N}_{u}=\{v\,|\,(v,\,u)\in \mathcal{E}\}\). A single GNN layer then transforms the node features by passing messages between neighbouring nodes17, following the notation of related work10 and the implementation of the CLRS-30 benchmark baselines18:

$$\mathbf{h}_{u}^{(t)}=\phi \left(\mathbf{h}_{u}^{(t-1)},\, \bigoplus_{v\in \mathcal{N}_{u}}\psi \left(\mathbf{h}_{u}^{(t-1)},\, \mathbf{h}_{v}^{(t-1)},\, \mathbf{e}_{vu},\, \mathbf{g}\right)\right)$$

(2)

where \(\psi :\mathbb{R}^{k}\times \mathbb{R}^{k}\times \mathbb{R}^{l}\times \mathbb{R}^{m}\to \mathbb{R}^{k^{\prime}}\) and \(\phi :\mathbb{R}^{k}\times \mathbb{R}^{k^{\prime}}\to \mathbb{R}^{k^{\prime}}\) are two learnable functions (e.g. multilayer perceptrons), \(\mathbf{h}_{u}^{(t)}\) are the features of node u after t GNN layers, and ⨁ is any permutation-invariant aggregator, such as sum, max, or average. By definition, we set \(\mathbf{h}_{u}^{(0)}=\mathbf{x}_{u}\), and iterate Eq. (2) for T steps, where T is a hyperparameter. Then, we let \(\mathbf{H}=f_{\mathcal{G}}(\mathbf{X},\,\mathbf{E},\,\mathbf{g})=\mathbf{H}^{(T)}\) be the final node embeddings coming out of the GNN.
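As an illustration of Eq. (2), the pure-Python sketch below implements one message-passing layer with sum as the aggregator ⨁. It then iterates the layer for T steps, starting from \(\mathbf{h}_{u}^{(0)}=\mathbf{x}_{u}\). The toy ψ and ϕ are plain Python functions rather than multilayer perceptrons, and they and the helper names are assumptions made purely for illustration.

```python
from typing import Callable, Dict, List, Sequence, Tuple

Vector = List[float]

def mpnn_layer(h: Sequence[Vector], e: Dict[Tuple[int, int], Vector], g: Vector,
               neighbours: Sequence[Sequence[int]],
               psi: Callable[[Vector, Vector, Vector, Vector], Vector],
               phi: Callable[[Vector, Vector], Vector]) -> List[Vector]:
    """One application of Eq. (2), using sum as the permutation-invariant aggregator."""
    new_h = []
    for u, h_u in enumerate(h):
        # psi computes a message from each neighbour v, using the edge features e_vu.
        messages = [psi(h_u, h[v], e[(v, u)], g) for v in neighbours[u]]
        aggregated = [sum(column) for column in zip(*messages)]
        new_h.append(phi(h_u, aggregated))
    return new_h

def run_gnn(x: Sequence[Vector], num_steps: int, **layer_args) -> List[Vector]:
    """Set h^(0) = x and iterate Eq. (2) for T = num_steps steps, returning H^(T)."""
    h = list(x)
    for _ in range(num_steps):
        h = mpnn_layer(h, **layer_args)
    return h

# Toy run on a fully connected 3-node graph (self-pairs included, as in E = V x V).
n = 3
neighbours = [list(range(n)) for _ in range(n)]
edges = {(v, u): [1.0] for u in range(n) for v in range(n)}
H = run_gnn([[1.0], [2.0], [3.0]], num_steps=2, e=edges, g=[0.0], neighbours=neighbours,
            psi=lambda hu, hv, evu, g: [hv[0] * evu[0]],  # message: neighbour feature times edge weight
            phi=lambda hu, m: [hu[0] + m[0]])             # update: add the aggregated message
```

In the toy run, every node receives the sum of all node features because the graph is fully connected. That sum is 6 after the first step and 24 after the second, so the final features are 31, 32 and 33.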

Eq. (2) is well known to be remarkably general: it can express popular models such as Transformers19 as a special case, and it has been argued that all discrete deep learning models can be expressed in this form20,21. This makes GNNs a natural framework for benchmarking various approaches to modelling player–player interactions in the context of football.

Different choices of ψ, ϕ and ⨁ yield different architectures. In our case, we utilise a message function that factorises into an attentional mechanism, \(a:\mathbb{R}^{k}\times \mathbb{R}^{k}\times \mathbb{R}^{l}\times \mathbb{R}^{m}\to \mathbb{R}\):

$$\mathbf{h}_{u}^{(t)}=\phi \left(\mathbf{h}_{u}^{(t-1)},\,\bigoplus_{v\in \mathcal{N}_{u}}a\left(\mathbf{h}_{u}^{(t-1)},\, \mathbf{h}_{v}^{(t-1)},\, \mathbf{e}_{vu},\, \mathbf{g}\right)\psi \left(\mathbf{h}_{v}^{(t-1)}\right)\right)$$

(3)

yielding the graph attention network (GAT) architecture12. Specifically, in our work, we use a two-layer multilayer perceptron for the attentional mechanism, as proposed by GATv2 (ref. 11):

$$a\left(\mathbf{h}_{u}^{(t-1)},\, \mathbf{h}_{v}^{(t-1)},\, \mathbf{e}_{vu},\, \mathbf{g}\right)=\mathop{\mathrm{softmax}}\limits_{v\in \mathcal{N}_{u}}\,\mathbf{a}^{\top}\mathrm{LeakyReLU}\left(\mathbf{W}_{1}\mathbf{h}_{u}^{(t-1)}+\mathbf{W}_{2}\mathbf{h}_{v}^{(t-1)}+\mathbf{W}_{e}\mathbf{e}_{vu}+\mathbf{W}_{g}\mathbf{g}\right)$$

(4)

where \(\mathbf{W}_{1},\,\mathbf{W}_{2}\in \mathbb{R}^{h\times k}\), \(\mathbf{W}_{e}\in \mathbb{R}^{h\times l}\), \(\mathbf{W}_{g}\in \mathbb{R}^{h\times m}\) and \(\mathbf{a}\in \mathbb{R}^{h}\) are the learnable parameters of the attentional mechanism, and LeakyReLU is the leaky rectified linear activation function. This mechanism computes an interaction coefficient (a single scalar value) for each pair of connected nodes (u, v). These coefficients are then normalised across all neighbours of u using the softmax function.
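The attentional mechanism of Eqs. (3) and (4) can be sketched for a single attention head as follows. Each weight matrix is stored row-major and maps its input into the hidden space \(\mathbb{R}^{h}\). The LeakyReLU slope of 0.2, the function names and the single-head simplification are assumptions; the model described here uses eight heads.

```python
import math
from typing import List, Sequence

Vector = List[float]
Matrix = List[List[float]]  # row-major; each W maps its input into the hidden space R^h

def matvec(w: Matrix, x: Sequence[float]) -> Vector:
    return [sum(w_ij * x_j for w_ij, x_j in zip(row, x)) for row in w]

def leaky_relu(z: float, negative_slope: float = 0.2) -> float:
    # The slope 0.2 is an illustrative choice; the text does not state the value used.
    return z if z > 0 else negative_slope * z

def gatv2_attention(h, e, g, u, neighbours, W1, W2, We, Wg, a) -> Vector:
    """Eq. (4): softmax over v in N_u of a^T LeakyReLU(W1 h_u + W2 h_v + We e_vu + Wg g)."""
    shared = [p + q for p, q in zip(matvec(W1, h[u]), matvec(Wg, g))]
    scores = []
    for v in neighbours:
        z = [s + p + q for s, p, q in zip(shared, matvec(W2, h[v]), matvec(We, e[(v, u)]))]
        scores.append(sum(a_i * leaky_relu(z_i) for a_i, z_i in zip(a, z)))
    top = max(scores)  # subtract the max score for a numerically stable softmax
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [x / total for x in exps]

def gat_aggregate(h, alphas, neighbours, psi) -> Vector:
    """Inner sum of Eq. (3): sum over v in N_u of a(...) * psi(h_v)."""
    messages = [[alpha * m for m in psi(h[v])] for alpha, v in zip(alphas, neighbours)]
    return [sum(column) for column in zip(*messages)]

# Toy example: node 0 attends over itself and node 1 (k = l = m = h = 1).
feats = [[1.0], [2.0]]
edge_feats = {(0, 0): [0.0], (1, 0): [0.0]}
alphas = gatv2_attention(feats, edge_feats, [0.0], 0, [0, 1],
                         W1=[[0.0]], W2=[[1.0]], We=[[0.0]], Wg=[[0.0]], a=[1.0])
message = gat_aggregate(feats, alphas, [0, 1], psi=lambda hv: hv)
```

In the toy example, the neighbour with the larger projected feature receives the larger weight, and the weights over \(\mathcal{N}_{u}\) sum to one by construction.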

Through early-stage experimentation, we ascertained that GATs are capable of matching the performance of more generic choices of ψ (such as the MPNN17) while being more scalable. Hence, we focus our study on the GAT model in this work. More details can be found in the “Ablation study” subsection.

Geometric deep learning

Despite the power of Eq. (2), using it in its full generality is often prone to overfitting, given the large number of parameters contained in ψ and ϕ. This problem is exacerbated in the football analytics domain, where gold-standard data is generally very scarce; for example, in the English Premier League, only a few hundred games are played every season.

In order to tackle this issue, we can exploit the immense regularity of data arising from football games. Strategically equivalent game states are also called transpositions, and symmetries such as arriving at the same chess position through different move sequences have been exploited computationally since the 1960s22. Similarly, game rotations and reflections may yield equivalent strategic situations23. Using the blueprint of geometric deep learning (GDL)10, we can design specialised GNN architectures that exploit this regularity.

Geometric deep learning is a generic methodology for deriving mathematical constraints on neural networks, such that they behave predictably when their inputs are transformed in certain ways. In several important cases, these constraints can be resolved directly, which in turn informs neural network architecture design. For a comprehensive example of point clouds under 3D rotational symmetry, see Fuchs et al.24.

To elucidate several aspects of the GDL framework on a high level, let us assume that there exists a group of input data transformations (symmetries), \(\mathfrak{G}\), under which the ground-truth label remains unchanged. Specifically, if we let y(X, E, g) be the label given to the graph featurised with X, E, g, then for every transformation \(\mathfrak{g}\in \mathfrak{G}\), the following property holds:

$$y(\mathfrak{g}(\mathbf{X}),\, \mathfrak{g}(\mathbf{E}),\, \mathfrak{g}(\mathbf{g}))=y(\mathbf{X},\, \mathbf{E},\, \mathbf{g})$$

(5)

This condition is also referred to as \(\mathfrak{G}\)-invariance. Here, by \(\mathfrak{g}(\mathbf{X})\) we denote the result of transforming X by \(\mathfrak{g}\), a concept also known as a group action. More generally, a group action is a function of the form \(\mathfrak{G}\times \mathcal{S}\to \mathcal{S}\) for some state set \(\mathcal{S}\). Note that a single group element, \(\mathfrak{g}\in \mathfrak{G}\), can easily produce different actions on different \(\mathcal{S}\). In this case, \(\mathcal{S}\) could be \(\mathbb{R}^{|\mathcal{V}|\times k}\) (X), \(\mathbb{R}^{|\mathcal{V}|\times |\mathcal{V}|\times l}\) (E) and \(\mathbb{R}^{m}\) (g).

It is worth noting that GNNs may also be derived from a GDL perspective if we set the symmetry group \(\mathfrak{G}\) to \(S_{|\mathcal{V}|}\), the permutation group of \(|\mathcal{V}|\) objects. Owing to the design of Eq. (2), its outputs do not depend on the ordering of nodes in the input graph: permuting the nodes simply permutes the rows of the output node features in the same way.

Frame averaging

A simple mechanism to enforce \(\mathfrak{G}\)-invariance, given any predictor \(f_{\mathcal{G}}(\mathbf{X},\,\mathbf{E},\,\mathbf{g})\), is to perform frame averaging across all \(\mathfrak{G}\)-transformed inputs:

$$f_{\mathcal{G}}^{\mathrm{inv}}(\mathbf{X},\,\mathbf{E},\,\mathbf{g})=\frac{1}{|\mathfrak{G}|}\sum_{\mathfrak{g}\in \mathfrak{G}}\, f_{\mathcal{G}}(\mathfrak{g}(\mathbf{X}),\,\mathfrak{g}(\mathbf{E}),\,\mathfrak{g}(\mathbf{g}))$$

(6)

This ensures that all \(\mathfrak{G}\)-transformed versions of a particular input (also known as that input’s orbit) will have exactly the same output, satisfying Eq. (5). A variant of this approach has also been applied in the AlphaGo architecture25 to encode symmetries of a Go board.

In our specific implementation, we set \(\mathfrak{G}=D_{2}=\{\mathrm{id},\leftrightarrow,\updownarrow,\leftrightarrow \updownarrow \}\), the dihedral group of order four. Exploiting D2-invariance allows us to encode quadrant symmetries. Each element of the D2 group encodes the presence of vertical or horizontal reflections of the input football pitch. Under these transformations, the pitch is assumed to be completely symmetric. Hence many predictions, such as which player receives the corner kick or takes a shot from it, can be safely assumed to be unchanged. As an example of how to compute transformed features in Eq. (6), ↔(X) horizontally reflects all positional features of players in X (e.g. the coordinates of the player), and negates the x-axis component of their velocity.
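The D2 action on player features and the frame average of Eq. (6) can be sketched as follows. The sketch makes three illustrative assumptions: coordinates are zero-centred (so a reflection is a sign flip), the node feature layout is [x, y, vx, vy, height, weight], and the predictor f returns a scalar. Edge features are omitted because pairwise distances and same-team indicators are unchanged by reflections.

```python
from typing import Callable, List, Tuple

Features = List[List[float]]  # one row per player: [x, y, vx, vy, height, weight]
Element = Tuple[bool, bool]   # (negate x-axis components, negate y-axis components)
D2: List[Element] = [(False, False), (True, False), (False, True), (True, True)]  # id, ↔, ↕, ↔↕

def reflect(X: Features, element: Element) -> Features:
    """Apply a D2 element to player features; heights and weights are left unchanged."""
    sx = -1.0 if element[0] else 1.0
    sy = -1.0 if element[1] else 1.0
    # Positions are zero-centred, so reflecting the pitch is a sign flip of a coordinate,
    # and the matching velocity component flips with it.
    return [[sx * x, sy * y, sx * vx, sy * vy, *rest] for x, y, vx, vy, *rest in X]

def frame_average(f: Callable[[Features], float], X: Features) -> float:
    """Eq. (6): average any predictor f over all four D2-transformed views of X."""
    return sum(f(reflect(X, element)) for element in D2) / len(D2)

def toy_predictor(X: Features) -> float:
    """A deliberately non-invariant toy predictor (hypothetical)."""
    return sum(x + 2.0 * y + x ** 2 for x, y, *_ in X)

X = [[1.0, 2.0, 0.5, -0.5, 1.8, 0.75], [-3.0, 1.0, 0.0, 1.0, 1.9, 0.8]]
```

In this toy check, `toy_predictor` itself changes under ↔, but its frame-averaged version returns the same value (10) for every view. This is because the linear terms in x and y cancel across the four reflections.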

Group convolutions

While the frame averaging approach of Eq. (6) is a powerful way to restrict GNNs to respect input symmetries, it arguably misses an opportunity for the different \(\mathfrak{G}\)-transformed views to interact while their computations are being performed. For small groups such as D2, a more fine-grained approach can be adopted, operating over a single GNN layer in Eq. (2), which we write in shorthand as \(\mathbf{H}^{(t)}=g_{\mathcal{G}}(\mathbf{H}^{(t-1)},\,\mathbf{E},\,\mathbf{g})\). A symmetry-respecting GNN layer needs to satisfy the following condition for all transformations \(\mathfrak{g}\in \mathfrak{G}\):

$$g_{\mathcal{G}}(\mathfrak{g}(\mathbf{H}^{(t-1)}),\,\mathfrak{g}(\mathbf{E}),\,\mathfrak{g}(\mathbf{g}))=\mathfrak{g}(g_{\mathcal{G}}(\mathbf{H}^{(t-1)},\,\mathbf{E},\,\mathbf{g}))$$

(7)

that is, it does not matter whether we apply \(\mathfrak{g}\) to the input or to the output of the function \(g_{\mathcal{G}}\): the final answer is the same. This condition is also referred to as \(\mathfrak{G}\)-equivariance, and it has recently proved to be a potent paradigm for developing powerful GNNs over biochemical data24,26.

To satisfy D2-equivariance, we apply the group convolution approach13. Therein, views of the input are allowed to directly interact with their \(\mathfrak{G}\)-transformed variants, in a manner very similar to grid convolutions. Grid convolutions are, indeed, a special case of group convolutions, obtained by setting \(\mathfrak{G}\) to be the translation group. We use \(\mathbf{H}_{\mathfrak{g}}^{(t)}\) to denote the \(\mathfrak{g}\)-transformed view of the latent node features at layer t. Omitting the E and g inputs for brevity, and using our previously designed layer \(g_{\mathcal{G}}\) as a building block, we can perform a group convolution as follows:

$$\mathbf{H}_{\mathfrak{g}}^{(t)}=g_{\mathcal{G}}^{\mathrm{equiv}}(\mathbf{H}_{\mathfrak{g}}^{(t-1)})=\frac{1}{|\mathfrak{G}|}\sum_{\mathfrak{h}\in \mathfrak{G}}g_{\mathcal{G}}\left(\mathbf{H}_{\mathfrak{h}}^{(t-1)}\parallel \mathbf{H}_{\mathfrak{g}^{-1}\mathfrak{h}}^{(t-1)}\right)$$

(8)

Here, ∥ is the concatenation operation, joining the two node feature matrices column-wise; \(\mathfrak{g}^{-1}\) is the inverse transformation to \(\mathfrak{g}\) (which must exist as \(\mathfrak{G}\) is a group); and \(\mathfrak{g}^{-1}\mathfrak{h}\) is the composition of the two transformations.

Effectively, Eq. (8) implies that our D2-equivariant GNN needs to maintain a node feature matrix \(\mathbf{H}_{\mathfrak{g}}^{(t)}\) for every \(\mathfrak{G}\)-transformation of the current input. These views are recombined by invoking \(g_{\mathcal{G}}\) on all pairs related together by applying a transformation \(\mathfrak{h}\). Note that every element of D2 is its own inverse (the two reflections, and their composition, a 180° rotation); hence, in D2, \(\mathfrak{g}=\mathfrak{g}^{-1}\).
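A literal sketch of Eq. (8) over D2 is given below. D2 elements are encoded as pairs of reflection flags, so composition is an element-wise XOR and every element is its own inverse. The stand-in `layer` is a hypothetical row-wise function used only to make the pairing of views visible. In TacticAI the base layer is a GATv2 layer acting on the whole graph, and the E and g inputs are omitted here, as in the text.

```python
from typing import Callable, Dict, List, Tuple

Element = Tuple[bool, bool]  # (reflect x, reflect y); D2 composition is element-wise XOR
Matrix = List[List[float]]   # rows = players, columns = latent features
ID, FLIP_X, FLIP_Y, FLIP_XY = (False, False), (True, False), (False, True), (True, True)
D2: List[Element] = [ID, FLIP_X, FLIP_Y, FLIP_XY]

def compose(a: Element, b: Element) -> Element:
    return (a[0] ^ b[0], a[1] ^ b[1])

def inverse(a: Element) -> Element:
    return a  # every element of D2 is its own inverse

def concat_columns(a: Matrix, b: Matrix) -> Matrix:
    """Column-wise concatenation (the || operator in Eq. (8))."""
    return [row_a + row_b for row_a, row_b in zip(a, b)]

def group_conv(views: Dict[Element, Matrix],
               layer: Callable[[Matrix], Matrix]) -> Dict[Element, Matrix]:
    """Eq. (8): H_g <- mean over h in D2 of layer(H_h || H_{g^-1 h}), for every g in D2."""
    out = {}
    for g in D2:
        outputs = [layer(concat_columns(views[h], views[compose(inverse(g), h)])) for h in D2]
        n_rows, n_cols = len(outputs[0]), len(outputs[0][0])
        out[g] = [[sum(o[r][c] for o in outputs) / len(D2) for c in range(n_cols)]
                  for r in range(n_rows)]
    return out

# Toy views: one player with one latent feature per view; the layer multiplies the two halves.
views = {ID: [[1.0]], FLIP_X: [[2.0]], FLIP_Y: [[3.0]], FLIP_XY: [[4.0]]}
out = group_conv(views, layer=lambda M: [[row[0] * row[1]] for row in M])
```

With the toy product layer, each output view averages the products of the input view pairs \((\mathfrak{h},\,\mathfrak{g}^{-1}\mathfrak{h})\). For the identity element, every view is simply paired with itself, giving (1 + 4 + 9 + 16)/4 = 7.5.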

It is worth noting that both the frame averaging in Eq. (6) and group convolution in Eq. (8) are similar in spirit to data augmentation. However, whereas standard data augmentation would only show one view at a time to the model, a frame averaging/group convolution architecture exhaustively generates all views and feeds them to the model all at once. Further, group convolutions allow these views to explicitly interact in a way that does not break symmetries. Here lies the key difference between the two approaches: frame averaging and group convolutions rigorously enforce the symmetries in \({\mathfrak{G}}\), whereas data augmentation only provides implicit hints to the model about satisfying them. As a consequence of the exhaustive generation, Eqs. (6) and (8) are only feasible for small groups like D2. For larger groups, approaches like Steerable CNNs27 may be employed.

Network architectures

While the three benchmark tasks we are performing have minor differences in the global features available to the model, the neural network models designed for them all have the same encoder–decoder architecture. The encoder has the same structure in all tasks, while the decoder model is tailored to produce appropriately shaped outputs for each benchmark task.

Given an input graph, TacticAI’s model first generates all relevant D2-transformed versions of it, by appropriately reflecting the player coordinates and velocities. We refer to the original input graph as the identity view, and the remaining three D2-transformed graphs as reflected views.

Once the views are prepared, we apply four group convolutional layers (Eq. (8)) with a GATv2 base model (Eqs. (3) and (4)) as the \(g_{\mathcal{G}}\) function. Specifically, this means that, in Eqs. (3) and (4), every instance of \(\mathbf{h}_{u}^{(t-1)}\) is replaced by the concatenation \((\mathbf{H}_{\mathfrak{h}}^{(t-1)})_{u}\parallel (\mathbf{H}_{\mathfrak{g}^{-1}\mathfrak{h}}^{(t-1)})_{u}\). Each GATv2 layer has eight attention heads and computes four latent features overall per player. Accordingly, once the four group convolutions are performed, we have a representation \(\mathbf{H}\in \mathbb{R}^{4\times 22\times 4}\). The first dimension corresponds to the four views (\(\mathbf{H}_{\mathrm{id}},\,\mathbf{H}_{\leftrightarrow},\,\mathbf{H}_{\updownarrow},\,\mathbf{H}_{\leftrightarrow \updownarrow}\in \mathbb{R}^{22\times 4}\)), the second to the players (eleven on each team), and the third to the 4-dimensional latent vector for each player node in that view. How this representation is used by the decoder depends on the specific downstream task, as we detail below.

For receiver prediction, which is a fully invariant function (i.e. reflections do not change the receiver), we perform simple frame averaging across all views, arriving at

$$\mathbf{H}^{\mathrm{node}}=\frac{\mathbf{H}_{\mathrm{id}}+\mathbf{H}_{\leftrightarrow}+\mathbf{H}_{\updownarrow}+\mathbf{H}_{\leftrightarrow \updownarrow}}{4}$$

(9)

and then learn a node-wise classifier over the rows of \(\mathbf{H}^{\mathrm{node}}\in \mathbb{R}^{22\times 4}\). We further decode \(\mathbf{H}^{\mathrm{node}}\) into a logit vector \(\mathbf{O}\in \mathbb{R}^{22}\) with a linear layer before computing the corresponding softmax cross-entropy loss.
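The receiver decoder can be sketched in three steps: an element-wise mean over the four views (Eq. (9)), a linear layer applied to each player’s embedding, and a softmax cross-entropy over the 22 players. Sharing one weight vector across all players is an assumption made for this illustration, as are the helper names.

```python
import math
from typing import List

Matrix = List[List[float]]

def average_views(views: List[Matrix]) -> Matrix:
    """Eq. (9): element-wise mean of the view matrices (four views, each 22 x 4)."""
    n_rows, n_cols = len(views[0]), len(views[0][0])
    return [[sum(view[r][c] for view in views) / len(views) for c in range(n_cols)]
            for r in range(n_rows)]

def receiver_logits(h_node: Matrix, w: List[float], b: float) -> List[float]:
    """Linear layer shared across players: one logit per row, giving O in R^22."""
    return [sum(w_i * h_i for w_i, h_i in zip(w, row)) + b for row in h_node]

def softmax_cross_entropy(logits: List[float], target: int) -> float:
    """-log softmax(logits)[target], computed with the log-sum-exp trick."""
    top = max(logits)
    log_z = top + math.log(sum(math.exp(z - top) for z in logits))
    return log_z - logits[target]

# Toy input: view k is a 22 x 4 matrix filled with the value k.
views = [[[float(k)] * 4 for _ in range(22)] for k in range(4)]
h_node = average_views(views)
loss = softmax_cross_entropy(receiver_logits(h_node, [0.0] * 4, 0.0), target=3)
```

With all logits equal, the loss reduces to log 22 ≈ 3.09, the value of a uniform guess over the 22 players.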

For shot prediction, which is once again fully invariant (i.e. reflections do not change the probability of a shot), we can further average the frame-averaged features across all players to get a global graph representation:

$$\mathbf{h}^{\mathrm{graph}}=\frac{1}{22}\sum_{u=1}^{22}\mathbf{h}_{u}^{\mathrm{node}}$$

(10)

and then learn a binary classifier over \(\mathbf{h}^{\mathrm{graph}}\in \mathbb{R}^{4}\). Specifically, we decode the hidden vector into a single logit with a linear layer and compute the sigmoid binary cross-entropy loss with the corresponding label.
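A corresponding sketch of the shot-prediction head has three parts: the mean over players of Eq. (10), a linear layer producing one logit, and a numerically stable form of the sigmoid binary cross-entropy. The stable rewriting \(\max(z,0)-zy+\log(1+e^{-|z|})\) is a standard identity. The helper names are hypothetical.

```python
import math
from typing import List

def graph_embedding(h_node: List[List[float]]) -> List[float]:
    """Eq. (10): mean of the player embeddings (22 rows of 4 features give h^graph in R^4)."""
    return [sum(row[c] for row in h_node) / len(h_node) for c in range(len(h_node[0]))]

def sigmoid_bce_with_logit(logit: float, label: int) -> float:
    """Binary cross-entropy of sigmoid(logit) against label, in a numerically stable form."""
    return max(logit, 0.0) - logit * label + math.log1p(math.exp(-abs(logit)))

def shot_loss(h_node: List[List[float]], w: List[float], b: float, label: int) -> float:
    """Linear layer to a single logit, followed by the sigmoid binary cross-entropy."""
    logit = sum(w_i * h_i for w_i, h_i in zip(w, graph_embedding(h_node))) + b
    return sigmoid_bce_with_logit(logit, label)
```

The stable form avoids overflow in `exp` for large negative logits while agreeing with the textbook expression −y log σ(z) − (1 − y) log(1 − σ(z)).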

For guided generation (position/velocity adjustments), we generate the player positions and velocities with respect to a particular outcome of interest for the human coaches, predicted over the rows of the hidden feature matrix. For example, the model may adjust the defensive setup to decrease the probability of a shot by the attacking team. The model output is now equivariant rather than invariant: reflecting the pitch appropriately reflects the predicted positions and velocity vectors. As such, we cannot perform frame averaging, and instead take only the identity view’s features, \(\mathbf{H}_{\mathrm{id}}\in \mathbb{R}^{22\times 4}\). From this latent feature matrix, we can then learn a conditional distribution from each row, which models the positions or velocities of the corresponding player. To do this, we extend the backbone encoder with a conditional variational autoencoder (CVAE)28,29. Specifically, for the u-th row of \(\mathbf{H}_{\mathrm{id}}\), \(\mathbf{h}_{u}\), we first map its latent embedding to the parameters of a two-dimensional Gaussian distribution \(\mathcal{N}(\mu_{u},\,\sigma_{u}^{2})\), and then sample the coordinates and velocities from this distribution. At training time, we can efficiently propagate gradients through this sampling operation using the reparameterisation trick28. We sample a random value \(\epsilon_{u}\sim \mathcal{N}(0,\,1)\) for each player from the unit Gaussian distribution, and then treat \(\mu_{u}+\sigma_{u}\epsilon_{u}\) as the sample for this player. In what follows, we omit edge features for brevity. For each corner kick sample X with the corresponding outcome o (e.g. a binary value indicating a shot event), we extend the standard VAE loss28,29 to our case of outcome-conditional guided generation as

$$\mathcal{L}(\theta,\,\phi)=-\mathbb{E}_{\mathbf{h}_{u}\sim q_{\theta}(\mathbf{h}_{u}|\mathbf{X},\mathbf{o})}[\log p_{\phi}(\mathbf{x}_{u}|\mathbf{h}_{u},\,\mathbf{o})]+\mathbb{KL}(q_{\theta}(\mathbf{h}_{u}|\mathbf{X},\,\mathbf{o})\parallel p(\mathbf{h}_{u}|\mathbf{o}))$$

(11)

where \(\mathbf{h}_{u}\) is the player embedding corresponding to the u-th row of \(\mathbf{H}_{\mathrm{id}}\), and \(\mathbb{KL}\) is the Kullback–Leibler (KL) divergence. Specifically, the first term is the generation loss between the real player input \(\mathbf{x}_{u}\) and the sample decoded from \(\mathbf{h}_{u}\) by the decoder \(p_{\phi}\). Through the KL term, the distribution of the latent embedding \(\mathbf{h}_{u}\) is regularised towards \(p(\mathbf{h}_{u}|\mathbf{o})\), which is a multivariate Gaussian in our case.
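The sampling step and the two terms of Eq. (11) can be sketched for diagonal Gaussians as below. The sketch makes three assumptions. First, the posterior and the conditional prior \(p(\mathbf{h}_{u}|\mathbf{o})\) are both diagonal Gaussians with parameters supplied by the caller; how the prior depends on \(\mathbf{o}\) is not specified here. Second, a Gaussian likelihood is used as one possible choice for the reconstruction term. Third, all function names are hypothetical. Plain Python has no automatic differentiation, so the sketch shows only the arithmetic that an autodiff framework would differentiate.

```python
import math
import random
from typing import List, Sequence

def reparameterise(mu: Sequence[float], sigma: Sequence[float], rng: random.Random) -> List[float]:
    """Return mu + sigma * eps with eps ~ N(0, 1) drawn independently per dimension."""
    return [m + s * rng.gauss(0.0, 1.0) for m, s in zip(mu, sigma)]

def kl_diag_gaussians(mu_q, sigma_q, mu_p, sigma_p) -> float:
    """KL(N(mu_q, diag(sigma_q^2)) || N(mu_p, diag(sigma_p^2))), summed over dimensions."""
    return sum(math.log(sp / sq) + (sq ** 2 + (mq - mp) ** 2) / (2.0 * sp ** 2) - 0.5
               for mq, sq, mp, sp in zip(mu_q, sigma_q, mu_p, sigma_p))

def gaussian_nll(x, mu, sigma) -> float:
    """-log N(x | mu, diag(sigma^2)), one possible reconstruction term for Eq. (11)."""
    return sum(0.5 * math.log(2.0 * math.pi * s ** 2) + (xi - m) ** 2 / (2.0 * s ** 2)
               for xi, m, s in zip(x, mu, sigma))

def cvae_loss(x, mu_q, sigma_q, mu_p, sigma_p, decode, rng) -> float:
    """Single-sample estimate of Eq. (11); decode maps a latent sample to (mu_x, sigma_x)."""
    h = reparameterise(mu_q, sigma_q, rng)
    mu_x, sigma_x = decode(h)
    return gaussian_nll(x, mu_x, sigma_x) + kl_diag_gaussians(mu_q, sigma_q, mu_p, sigma_p)
```

Replacing the expectation in Eq. (11) by a single reparameterised sample is a common Monte Carlo estimate in VAE training. Whether TacticAI uses one or several samples is not stated here.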

A complete high-level summary of the generic equivariant encoder–decoder architecture employed by TacticAI is provided in Supplementary Fig. 2. In the following section, we provide empirical evidence to justify these architectural decisions, through targeted ablation studies on our predictive benchmarks (receiver prediction and shot prediction).

Ablation study

We leveraged the receiver prediction task as a way to evaluate various base model architectures, and to directly and quantitatively assess the contributions of geometric deep learning in this context. We already see that the raw corner kick data can be better represented through geometric deep learning, yielding separable clusters in the latent space that could correspond to different attacking or defending tactics (Fig. 2). In addition, we hypothesise that these representations can also yield better performance on the task of receiver prediction. Accordingly, we ablate several design choices on this task, as illustrated by the following four questions:

Does a factorised graph representation help? To assess this, we compare it against a convolutional neural network (CNN)30 baseline, which does not leverage a graph representation.

Does a graph structure help? To assess this, we compare against a Deep Sets31 baseline, which only models each node in isolation without considering adjacency information. This is equivalent to setting each neighbourhood \(\mathcal{N}_{u}\) to the singleton set {u}.

Are attentional GNNs a good strategy? To assess this, we compare against a message passing neural network (MPNN)32 baseline, which uses the fully potent GNN layer from Eq. (2) instead of GATv2.

Does accounting for symmetries help? To assess this, we compare our geometric GATv2 baseline against one which does not utilise D2 group convolutions but utilises D2 frame averaging, and one which does not explicitly utilise any aspect of D2 symmetries at all.

Each of these models has been trained for a fixed budget of 50,000 training steps. The test top-k receiver prediction accuracies of the trained models are provided in Supplementary Table 2. As already discussed in the section “Results”, there is a clear advantage to using a full graph structure, as well as directly accounting for reflection symmetry. Further, the usage of the MPNN layer leads to slight overfitting compared to the GATv2, illustrating how attentional GNNs strike a good balance of expressivity and data efficiency for this task. Our analysis highlights the quantitative benefits of both graph representation learning and geometric deep learning for football analytics from tracking data. We also provide a brief ablation study for the shot prediction task in Supplementary Table 3.

Training details

We train each of TacticAI’s models in isolation, using NVIDIA Tesla P100 GPUs. To minimise overfitting, each model’s learning objective is regularised with an L2 norm penalty with respect to the network parameters. During training, we use the Adam stochastic gradient descent optimiser33 over the regularised loss.
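Below is a minimal, hand-written sketch of an L2-regularised Adam update that shows the arithmetic. The penalty is taken to be coeff·‖θ‖², although some implementations use ½·coeff·‖θ‖² instead. The values β₁ = 0.9, β₂ = 0.999 and ε = 10⁻⁸ are the commonly used Adam defaults, not settings reported here. The class and function names are hypothetical.

```python
import math
from typing import List

def l2_regularised_grad(grad: List[float], params: List[float], coeff: float) -> List[float]:
    """Gradient of loss + coeff * ||theta||^2 (the exact penalty scaling is an assumption)."""
    return [g + 2.0 * coeff * p for g, p in zip(grad, params)]

class Adam:
    """Minimal Adam optimiser with the commonly used default betas and epsilon."""

    def __init__(self, lr: float, beta1: float = 0.9, beta2: float = 0.999, eps: float = 1e-8):
        self.lr, self.beta1, self.beta2, self.eps = lr, beta1, beta2, eps
        self.m: List[float] = []  # first-moment estimates
        self.v: List[float] = []  # second-moment estimates
        self.t = 0                # step counter used for bias correction

    def step(self, params: List[float], grad: List[float]) -> List[float]:
        if not self.m:
            self.m = [0.0] * len(params)
            self.v = [0.0] * len(params)
        self.t += 1
        new_params = []
        for i, (p, g) in enumerate(zip(params, grad)):
            self.m[i] = self.beta1 * self.m[i] + (1 - self.beta1) * g
            self.v[i] = self.beta2 * self.v[i] + (1 - self.beta2) * g * g
            m_hat = self.m[i] / (1 - self.beta1 ** self.t)  # bias-corrected moments
            v_hat = self.v[i] / (1 - self.beta2 ** self.t)
            new_params.append(p - self.lr * m_hat / (math.sqrt(v_hat) + self.eps))
        return new_params

# One step on the toy objective (theta - 3)^2 + coeff * theta^2, starting at theta = 0.
opt = Adam(lr=1e-4)
theta = [0.0]
grad = l2_regularised_grad([2.0 * (theta[0] - 3.0)], theta, coeff=0.001)
theta = opt.step(theta, grad)
```

Because of Adam’s bias correction, the very first step has a magnitude close to the learning rate regardless of the gradient scale, which the toy update illustrates.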

All models, including baselines, have been given an equal hyperparameter tuning budget, spanning the number of message passing steps ({1, 2, 4}), initial learning rate ({0.0001, 0.00005}), batch size ({128, 256}) and L2 regularisation coefficient ({0.01, 0.005, 0.001, 0.0001, 0}). We summarise the chosen hyperparameters of each TacticAI model in Supplementary Table 1.
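If the tuning budget were spent as an exhaustive grid over the listed values, the search space would contain 3 × 2 × 2 × 5 = 60 configurations per model, as the sketch below enumerates. The text does not say whether the search was exhaustive or sampled, so this is an assumption. The dictionary keys are hypothetical names.

```python
import itertools
from typing import Dict, Iterator

SEARCH_SPACE: Dict[str, list] = {
    "message_passing_steps": [1, 2, 4],
    "learning_rate": [0.0001, 0.00005],
    "batch_size": [128, 256],
    "l2_coefficient": [0.01, 0.005, 0.001, 0.0001, 0],
}

def grid(space: Dict[str, list]) -> Iterator[Dict[str, object]]:
    """Yield every combination of hyperparameter values as a dictionary."""
    keys = list(space)
    for values in itertools.product(*(space[k] for k in keys)):
        yield dict(zip(keys, values))

configs = list(grid(SEARCH_SPACE))
```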