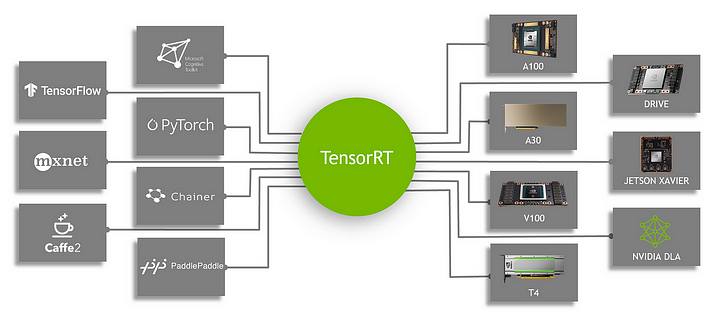

TensorRT is a C++ inference framework that runs on NVIDIA's GPU hardware platforms. A model trained in PyTorch, TensorFlow, or another framework can be converted to the TensorRT format and then run with the TensorRT inference engine, which improves the model's speed on NVIDIA GPUs [1].

TensorRT supports almost all major deep learning frameworks: models built in Python frameworks are converted to C++ TensorRT to accelerate inference. The speed-up comes mainly from the following optimizations:

- Operator fusion (layer and tensor fusion): Put simply, fusing some computing ops and removing redundant ones reduces the number of data transfers and how often video memory is accessed.

- Quantization: Running the model in INT8, FP16, or TF32 instead of the conventional FP32 can significantly improve execution speed while largely preserving the accuracy of the original model.

- Kernel auto-tuning: TensorRT selects different optimization strategies and computation methods based on the GPU architecture, number of SMs, and core frequency (for example, a 1080 Ti versus a 2080 Ti) to find the most suitable kernels for the current hardware.

- Dynamic tensor memory: Allocating and freeing memory is time-consuming. TensorRT reduces the number of these operations in the model by adjusting its allocation strategy, which shortens the time the model takes to run.

- Multi-stream execution: CUDA streams are used to maximize parallel execution.
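To make the quantization item more concrete, here is a minimal sketch of symmetric per-tensor INT8 quantization in plain Python. It is only an illustration of the idea, not TensorRT's actual calibration procedure; the function names and the sample weights are made up for this example.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    # One scale for the whole tensor; fall back to 1.0 for an all-zero tensor.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the INT8 codes."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only for illustration.
weights = [0.02, -1.27, 0.64, 0.004, 1.0]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print("scale:", scale)
print("largest round-trip error:", max_error)
```

The round-trip error stays within half a quantization step, which is why accuracy can be largely preserved while each value is stored in 8 bits instead of 32.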

TensorRT officially provides three open-source tools in its repository, which can be used later if needed [1]:

- ONNX GraphSurgeon: Modifies an exported ONNX model; you can add or remove nodes, change names or dimensions, and so on.

- Polygraphy: A collection of utilities, such as comparing the accuracy of the ONNX and TRT models or inspecting the output of each layer of a TRT model. It is mainly used for debugging models.

- PyTorch-Quantization: Adds simulated quantization operations during PyTorch training or inference to improve the accuracy and speed of the quantized model, and supports exporting the quantization-trained model to ONNX and TRT.

Here’s my computer environment:

- Operating system: Windows 10

- Graphics card: GeForce GTX 1050 Ti

- CUDA version: 11.6

- PyTorch version: 1.13.1+cu116

- torchaudio version: 0.13.1+cu116

- torchvision version: 0.14.1+cu116

- Python (Anaconda) version: 3.9.10

Check the CUDA version in Windows PowerShell:

nvcc --version
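The same check can be scripted. The sketch below runs nvcc if it is on PATH and extracts the release number, then tests it against the CUDA 11.0–11.7 range mentioned for the TensorRT package. The helper names are hypothetical, and the sample line is illustrative rather than captured from my machine.

```python
import re
import shutil
import subprocess


def parse_cuda_release(nvcc_output):
    """Return (major, minor) from the text printed by `nvcc --version`, or None."""
    match = re.search(r"release\s+(\d+)\.(\d+)", nvcc_output)
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2))


def installed_cuda_release():
    """Run nvcc if it is on PATH; return None when it is missing or fails."""
    nvcc = shutil.which("nvcc")
    if nvcc is None:
        return None
    result = subprocess.run([nvcc, "--version"], capture_output=True, text=True)
    if result.returncode != 0:
        return None
    return parse_cuda_release(result.stdout)


def in_supported_range(release, low=(11, 0), high=(11, 7)):
    """Check a (major, minor) release against an inclusive version range."""
    return release is not None and low <= release <= high


# Illustrative sample line, not captured from the author's machine.
sample = "Cuda compilation tools, release 11.6, V11.6.124"
print(parse_cuda_release(sample), in_supported_range(parse_cuda_release(sample)))
print("installed:", installed_cuda_release())
```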

First, go to the NVIDIA website and download the TensorRT installation package that matches your CUDA version.

I downloaded the one marked in red, which supports CUDA 11.0–11.7.

After downloading, unzip the archive, activate your Anaconda environment, then go to each of the following folders and install the .whl files:

cd TensorRT-8.4.3.1\python

pip install tensorrt-8.4.3.1-cp.xxx-none-win_amd64.whl #change -cp.xxx to your python version

cd TensorRT-8.4.3.1\graphsurgeon

pip install graphsurgeon-0.4.6-py2.py3-none-any.whl

cd TensorRT-8.4.3.1\onnx_graphsurgeon

pip install onnx_graphsurgeon-0.3.12-py2.py3-none-any.whl

cd TensorRT-8.4.3.1\uff

pip install uff-0.6.9-py2.py3-none-any.whl
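If you are unsure which tensorrt wheel matches your interpreter, the CPython tag can be derived from the running Python version. This is a small sketch; the file-name pattern is assumed from the wheel name shown above, and the helper names are hypothetical, so check the result against the files actually present in the python folder.

```python
import sys


def cpython_tag(version_info=sys.version_info):
    """Build the CPython wheel tag, e.g. (3, 9) -> 'cp39'."""
    return f"cp{version_info[0]}{version_info[1]}"


def tensorrt_wheel_name(trt_version="8.4.3.1", version_info=sys.version_info):
    """Guess the wheel file name in the python folder of the TensorRT archive.

    The pattern is an assumption based on the file name quoted in the article.
    """
    tag = cpython_tag(version_info)
    return f"tensorrt-{trt_version}-{tag}-none-win_amd64.whl"


print("wheel for this interpreter:", tensorrt_wheel_name())
```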

Then you need to copy some of the files from the installation package:

- Copy the header files from TensorRT-8.4.3.1\include to C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v11.6\include

- Copy all .lib files from TensorRT-8.4.3.1\lib to C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v11.6\lib\x64

- Copy all .dll files from TensorRT-8.4.3.1\lib to C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v11.6\bin

Note: replace v11.6 with your own CUDA version number.

After that, manually add C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v11.6\bin to the user's Path environment variable.

Then verify it:

python

import tensorrt

print(tensorrt.__version__)

An error occurred on import:

FileNotFoundError: Could not find: nvinfer.dll. Is it on your PATH?

In that case, you only need to find the missing files and copy them into the bin directory above. I found some of them in the lib folder of the installed torch package and copied over whatever was missing.
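To see whether a DLL such as nvinfer.dll is actually reachable, you can scan the directories listed in PATH. The sketch below is a hypothetical helper of my own, not part of TensorRT; it only checks for the file's presence and does not verify that the DLL loads.

```python
import os


def dirs_containing(filename, path_value=None):
    """List the PATH directories that contain `filename`."""
    if path_value is None:
        path_value = os.environ.get("PATH", "")
    hits = []
    for directory in path_value.split(os.pathsep):
        # Skip empty entries produced by stray separators.
        if directory and os.path.isfile(os.path.join(directory, filename)):
            hits.append(directory)
    return hits


for name in ("nvinfer.dll",):
    found = dirs_containing(name)
    print(name, "->", found if found else "not found on PATH")
```

Any other file name reported in a similar error can be added to the tuple in the loop.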

Once no error is reported, run the check again; it should print the TensorRT version.

If you don't have pycuda installed, install it as well:

pip install pycuda

Change into the YOLOv5 repository folder and export the yolov5.pt model to a yolov5.engine TensorRT model with the following commands:

cd yolov5

python export.py --weights data.pt --data data/coco128.yaml --include engine --device 0

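The source code for PyTorch's activation functions is located at C:\anaconda3\envs\python3.7\Lib\site-packages\torch\nn\modules\activation.py. In the SiLU class, forward returns input * torch.sigmoid(input); the original F.silu call, which was unreachable after that return, is kept as a comment:

class SiLU(Module):
    __constants__ = ['inplace']
    inplace: bool

    def __init__(self, inplace: bool = False):
        super(SiLU, self).__init__()
        self.inplace = inplace

    def forward(self, input: Tensor) -> Tensor:
        # SiLU written out explicitly as x * sigmoid(x)
        return input * torch.sigmoid(input)
        # Original implementation:
        # return F.silu(input, inplace=self.inplace)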
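As a quick sanity check, the product form used in the forward method above is mathematically the same as the usual SiLU definition x / (1 + exp(-x)). The plain-Python sketch below compares the two on a few sample points; it deliberately avoids importing torch, and the function names are my own.

```python
import math


def sigmoid(x):
    """Logistic function 1 / (1 + exp(-x))."""
    return 1.0 / (1.0 + math.exp(-x))


def silu_definition(x):
    """SiLU written directly as x / (1 + exp(-x))."""
    return x / (1.0 + math.exp(-x))


def silu_as_product(x):
    """SiLU written as x * sigmoid(x), as in the forward method."""
    return x * sigmoid(x)


for x in (-3.0, -0.5, 0.0, 0.5, 3.0):
    # Both forms should agree up to floating-point rounding.
    assert math.isclose(silu_definition(x), silu_as_product(x), abs_tol=1e-12)
    print(x, silu_as_product(x))
```

This matches the Sigmoid and Mul pairs that appear in the TensorRT layer list later in this article.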

Exporting YOLOv5n to TensorRT takes some time:

PS C:\User\muham> & C:/ProgramData/Anaconda3/envs/yolov8/python.exe "e:/Skripsi_(Cepat_Lulus_Kasian_Bapak)/Dataset/Raspi_Mas_Aji/yolov5/export.py" --weights yolov5/bestdataterbaru.pt --data yolov5/dataset(1).yaml --include engine --device 0

export: data=yolov5/dataset, weights=['yolov5/bestdataterbaru.pt'], imgsz=[640, 640], batch_size=1, device=0, half=False, inplace=False, keras=False, optimize=False, int8=False, per_tensor=False, dynamic=False, simplify=False, opset=17, verbose=False, workspace=4, nms=False, agnostic_nms=False, topk_per_class=100, topk_all=100, iou_thres=0.45, conf_thres=0.25, include=['engine']

YOLOv5 v7.0-295-gac6c4383 Python-3.10.7 torch-1.13.1+cu116 CUDA:0 (NVIDIA GeForce GTX 1050 Ti, 4096MiB)

Fusing layers...

Model summary: 157 layers, 7029004 parameters, 0 gradients, 15.8 GFLOPs

PyTorch: starting from yolov5\bestdataterbaru.pt with output shape (1, 25200, 12) (13.6 MB)

ONNX: starting export with onnx 1.15.0...

ONNX: export success 1.6s, saved as yolov5\bestdataterbaru.onnx (27.2 MB)

TensorRT: starting export with TensorRT 8.4.3.1...

[03/31/2024-08:32:00] [TRT] [I] [MemUsageChange] Init CUDA: CPU +181, GPU +0, now: CPU 15135, GPU 1528 (MiB)

[03/31/2024-08:32:02] [TRT] [I] [MemUsageChange] Init builder kernel library: CPU +5, GPU +2, now: CPU 15276, GPU 1530 (MiB)

e:\Skripsi_(Cepat_Lulus_Kasian_Bapak)\Dataset\Raspi_Mas_Aji\yolov5\export.py:357: DeprecationWarning: Use set_memory_pool_limit instead.

config.max_workspace_size = workspace * 1 << 30

[03/31/2024-08:32:02] [TRT] [I] ----------------------------------------------------------------

[03/31/2024-08:32:02] [TRT] [I] Input filename: yolov5\bestdataterbaru.onnx

[03/31/2024-08:32:02] [TRT] [I] ONNX IR version: 0.0.7

[03/31/2024-08:32:02] [TRT] [I] Opset version: 12

[03/31/2024-08:32:02] [TRT] [I] Producer name: pytorch

[03/31/2024-08:32:02] [TRT] [I] Producer version: 1.13.1

[03/31/2024-08:32:02] [TRT] [I] Domain:

[03/31/2024-08:32:02] [TRT] [I] Model version: 0

[03/31/2024-08:32:02] [TRT] [I] Doc string:

[03/31/2024-08:32:02] [TRT] [I] ----------------------------------------------------------------

[03/31/2024-08:32:02] [TRT] [W] onnx2trt_utils.cpp:369: Your ONNX model has been generated with INT64 weights, while TensorRT does not natively support INT64. Attempting to cast down to INT32.

TensorRT: input "images" with shape(1, 3, 640, 640) DataType.FLOAT

TensorRT: output "output0" with shape(1, 25200, 12) DataType.FLOAT

TensorRT: building FP32 engine as yolov5\bestdataterbaru.engine

e:\Skripsi_(Cepat_Lulus_Kasian_Bapak)\Dataset\Raspi_Mas_Aji\yolov5\export.py:384: DeprecationWarning: Use build_serialized_network instead.

with builder.build_engine(network, config) as engine, open(f, "wb") as t:

[03/31/2024-08:32:02] [TRT] [I] [MemUsageChange] Init cuBLAS/cuBLASLt: CPU +0, GPU +8, now: CPU 15212, GPU 1538 (MiB)

[03/31/2024-08:32:02] [TRT] [I] [MemUsageChange] Init cuDNN: CPU +0, GPU +8, now: CPU 15212, GPU 1546 (MiB)

[03/31/2024-08:32:02] [TRT] [W] TensorRT was linked against cuDNN 8.4.1 but loaded cuDNN 8.3.2

[03/31/2024-08:32:02] [TRT] [I] Local timing cache in use. Profiling results in this builder pass will not be stored.

[03/31/2024-08:33:06] [TRT] [I] Detected 1 inputs and 4 output network tensors.

[03/31/2024-08:33:07] [TRT] [I] Total Host Persistent Memory: 132976

[03/31/2024-08:33:07] [TRT] [I] Total Device Persistent Memory: 1755648

[03/31/2024-08:33:07] [TRT] [I] [BlockAssignment] Algorithm ShiftNTopDown took 45.5365ms to assign 7 blocks to 130 nodes requiring 35635200 bytes.

[03/31/2024-08:33:07] [TRT] [I] Total Activation Memory: 35635200

[03/31/2024-08:33:07] [TRT] [I] [MemUsageChange] TensorRT-managed allocation in building engine: CPU +0, GPU +0, now: CPU 0, GPU 0 (MiB)

[03/31/2024-08:33:07] [TRT] [W] The getMaxBatchSize() function should not be used with an engine built from a network created with NetworkDefinitionCreationFlag::kEXPLICIT_BATCH flag. This function will always return 1.

[03/31/2024-08:33:07] [TRT] [W] The getMaxBatchSize() function should not be used with an engine built from a network created with NetworkDefinitionCreationFlag::kEXPLICIT_BATCH flag. This function will always return 1.

TensorRT: export success 69.5s, saved as yolov5\bestdataterbaru.engine (34.6 MB)

Export complete (73.2s)

Results saved to E:\Skripsi_(Cepat_Lulus_Kasian_Bapak)\Dataset\Raspi_Mas_Aji\yolov5

Detect: python detect.py --weights yolov5\bestdataterbaru.engine

Validate: python val.py --weights yolov5\bestdataterbaru.engine

PyTorch Hub: model = torch.hub.load('ultralytics/yolov5', 'custom', 'yolov5\bestdataterbaru.engine')

Visualize: https://netron.app

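The TensorRT build itself took 69.5 seconds and the whole export completed in 73.2 seconds. The engine was built in full FP32 precision rather than half precision (half=False), and the resulting .engine file is 34.6 MB.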
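Two numbers in the export log can be reproduced by hand. The sketch below assumes the standard YOLOv5 head layout (three detection scales with strides 8, 16, and 32 and three anchors per grid cell) to recover the 25200 predictions in the output shape, and evaluates the workspace * 1 << 30 expression quoted in the deprecation warning. The class count is inferred from the output shape, not read from the dataset file.

```python
def yolov5_prediction_count(img_size=640, strides=(8, 16, 32), anchors_per_cell=3):
    """Count predictions: one grid per stride, several anchors per grid cell."""
    total = 0
    for stride in strides:
        cells_per_side = img_size // stride
        total += anchors_per_cell * cells_per_side * cells_per_side
    return total


def workspace_bytes(workspace_gib):
    """Mirror the `workspace * 1 << 30` expression from the export log.

    `*` binds tighter than `<<`, so this is (workspace * 1) << 30.
    """
    return workspace_gib * 1 << 30


predictions = yolov5_prediction_count()
values_per_prediction = 12               # last dimension of the logged output shape
num_classes = values_per_prediction - 5  # assumes 4 box values + 1 objectness score

print("predictions:", predictions)
print("inferred classes:", num_classes)
print("workspace bytes:", workspace_bytes(4))
```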

Use trtexec.exe with the following arguments to list the layers of the TensorRT model and benchmark it:

(yolov8) C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v11.6\bin>trtexec.exe --loadEngine=E:/Skripsi_(Cepat_Lulus_Kasian_Bapak)/Dataset/Raspi_Mas_Aji/yolov5/bestdataterbaru.engine --dumpLayerInfo

&&&& RUNNING TensorRT.trtexec [TensorRT v8403] # trtexec.exe --loadEngine=E:/Skripsi_(Cepat_Lulus_Kasian_Bapak)/Dataset/Raspi_Mas_Aji/yolov5/bestdataterbaru.engine --dumpLayerInfo

[03/31/2024-19:26:30] [I] === Model Options ===

[03/31/2024-19:26:30] [I] Format: *

[03/31/2024-19:26:30] [I] Model:

[03/31/2024-19:26:30] [I] Output:

[03/31/2024-19:26:30] [I] === Build Options ===

[03/31/2024-19:26:30] [I] Max batch: 1

[03/31/2024-19:26:30] [I] Memory Pools: workspace: default, dlaSRAM: default, dlaLocalDRAM: default, dlaGlobalDRAM: default

[03/31/2024-19:26:30] [I] minTiming: 1

[03/31/2024-19:26:30] [I] avgTiming: 8

[03/31/2024-19:26:30] [I] Precision: FP32

[03/31/2024-19:26:30] [I] LayerPrecisions:

[03/31/2024-19:26:30] [I] Calibration:

[03/31/2024-19:26:30] [I] Refit: Disabled

[03/31/2024-19:26:30] [I] Sparsity: Disabled

[03/31/2024-19:26:30] [I] Safe mode: Disabled

[03/31/2024-19:26:30] [I] DirectIO mode: Disabled

[03/31/2024-19:26:30] [I] Restricted mode: Disabled

[03/31/2024-19:26:30] [I] Build only: Disabled

[03/31/2024-19:26:30] [I] Save engine:

[03/31/2024-19:26:30] [I] Load engine: E:/Skripsi_(Cepat_Lulus_Kasian_Bapak)/Dataset/Raspi_Mas_Aji/yolov5/bestdataterbaru.engine

[03/31/2024-19:26:30] [I] Profiling verbosity: 0

[03/31/2024-19:26:30] [I] Tactic sources: Using default tactic sources

[03/31/2024-19:26:30] [I] timingCacheMode: local

[03/31/2024-19:26:30] [I] timingCacheFile:

[03/31/2024-19:26:30] [I] Input(s)s format: fp32:CHW

[03/31/2024-19:26:30] [I] Output(s)s format: fp32:CHW

[03/31/2024-19:26:30] [I] Input build shapes: model

[03/31/2024-19:26:30] [I] Input calibration shapes: model

[03/31/2024-19:26:30] [I] === System Options ===

[03/31/2024-19:26:30] [I] Device: 0

[03/31/2024-19:26:30] [I] DLACore:

[03/31/2024-19:26:30] [I] Plugins:

[03/31/2024-19:26:30] [I] === Inference Options ===

[03/31/2024-19:26:30] [I] Batch: 1

[03/31/2024-19:26:30] [I] Input inference shapes: model

[03/31/2024-19:26:30] [I] Iterations: 10

[03/31/2024-19:26:30] [I] Duration: 3s (+ 200ms warm up)

[03/31/2024-19:26:30] [I] Sleep time: 0ms

[03/31/2024-19:26:30] [I] Idle time: 0ms

[03/31/2024-19:26:30] [I] Streams: 1

[03/31/2024-19:26:30] [I] ExposeDMA: Disabled

[03/31/2024-19:26:30] [I] Data transfers: Enabled

[03/31/2024-19:26:30] [I] Spin-wait: Disabled

[03/31/2024-19:26:30] [I] Multithreading: Disabled

[03/31/2024-19:26:30] [I] CUDA Graph: Disabled

[03/31/2024-19:26:30] [I] Separate profiling: Disabled

[03/31/2024-19:26:30] [I] Time Deserialize: Disabled

[03/31/2024-19:26:30] [I] Time Refit: Disabled

[03/31/2024-19:26:30] [I] Inputs:

[03/31/2024-19:26:30] [I] === Reporting Options ===

[03/31/2024-19:26:30] [I] Verbose: Disabled

[03/31/2024-19:26:30] [I] Averages: 10 inferences

[03/31/2024-19:26:30] [I] Percentile: 99

[03/31/2024-19:26:30] [I] Dump refittable layers:Disabled

[03/31/2024-19:26:30] [I] Dump output: Disabled

[03/31/2024-19:26:30] [I] Profile: Disabled

[03/31/2024-19:26:30] [I] Export timing to JSON file:

[03/31/2024-19:26:30] [I] Export output to JSON file:

[03/31/2024-19:26:30] [I] Export profile to JSON file:

[03/31/2024-19:26:30] [I]

[03/31/2024-19:26:30] [I] === Device Information ===

[03/31/2024-19:26:30] [I] Selected Device: NVIDIA GeForce GTX 1050 Ti

[03/31/2024-19:26:30] [I] Compute Capability: 6.1

[03/31/2024-19:26:30] [I] SMs: 6

[03/31/2024-19:26:30] [I] Compute Clock Rate: 1.62 GHz

[03/31/2024-19:26:30] [I] Device Global Memory: 4095 MiB

[03/31/2024-19:26:30] [I] Shared Memory per SM: 96 KiB

[03/31/2024-19:26:30] [I] Memory Bus Width: 128 bits (ECC disabled)

[03/31/2024-19:26:30] [I] Memory Clock Rate: 3.504 GHz

[03/31/2024-19:26:30] [I]

[03/31/2024-19:26:30] [I] TensorRT version: 8.4.3

[03/31/2024-19:26:30] [I] Engine loaded in 0.0286742 sec.

[03/31/2024-19:26:31] [I] [TRT] [MemUsageChange] Init CUDA: CPU +268, GPU +0, now: CPU 12906, GPU 796 (MiB)

[03/31/2024-19:26:31] [I] [TRT] Loaded engine size: 34 MiB

[03/31/2024-19:26:32] [I] [TRT] [MemUsageChange] TensorRT-managed allocation in engine deserialization: CPU +0, GPU +0, now: CPU 0, GPU 0 (MiB)

[03/31/2024-19:26:32] [I] Engine deserialized in 1.0952 sec.

[03/31/2024-19:26:32] [I] [TRT] [MemUsageChange] TensorRT-managed allocation in IExecutionContext creation: CPU +0, GPU +0, now: CPU 0, GPU 0 (MiB)

[03/31/2024-19:26:32] [I] Using random values for input images

[03/31/2024-19:26:32] [I] Created input binding for images with dimensions 1x3x640x640

[03/31/2024-19:26:32] [I] Using random values for output output0

[03/31/2024-19:26:32] [I] Created output binding for output0 with dimensions 1x25200x12

[03/31/2024-19:26:32] [I] Layer Information:

[03/31/2024-19:26:32] [I] [TRT] [MemUsageChange] Init CUDA: CPU +0, GPU +0, now: CPU 12966, GPU 876 (MiB)

[03/31/2024-19:26:32] [I] [TRT] The profiling verbosity was set to ProfilingVerbosity::kLAYER_NAMES_ONLY when the engine was built, so only the layer names will be returned. Rebuild the engine with ProfilingVerbosity::kDETAILED to get more verbose layer information.

[03/31/2024-19:26:32] [I] Layers:

/model.0/conv/Conv

PWN(PWN(/model.0/act/Sigmoid), /model.0/act/Mul)

/model.1/conv/Conv

PWN(PWN(/model.1/act/Sigmoid), /model.1/act/Mul)

/model.2/cv1/conv/Conv || /model.2/cv2/conv/Conv

PWN(PWN(/model.2/cv1/act/Sigmoid), /model.2/cv1/act/Mul)

/model.2/m/m.0/cv1/conv/Conv

PWN(PWN(/model.2/m/m.0/cv1/act/Sigmoid), /model.2/m/m.0/cv1/act/Mul)

/model.2/m/m.0/cv2/conv/Conv

PWN(PWN(PWN(/model.2/m/m.0/cv2/act/Sigmoid), /model.2/m/m.0/cv2/act/Mul), /model.2/m/m.0/Add)

PWN(PWN(/model.2/cv2/act/Sigmoid), /model.2/cv2/act/Mul)

/model.2/cv3/conv/Conv

PWN(PWN(/model.2/cv3/act/Sigmoid), /model.2/cv3/act/Mul)

/model.3/conv/Conv

PWN(PWN(/model.3/act/Sigmoid), /model.3/act/Mul)

/model.4/cv1/conv/Conv || /model.4/cv2/conv/Conv

PWN(PWN(/model.4/cv1/act/Sigmoid), /model.4/cv1/act/Mul)

/model.4/m/m.0/cv1/conv/Conv

PWN(PWN(/model.4/m/m.0/cv1/act/Sigmoid), /model.4/m/m.0/cv1/act/Mul)

/model.4/m/m.0/cv2/conv/Conv

PWN(PWN(PWN(/model.4/m/m.0/cv2/act/Sigmoid), /model.4/m/m.0/cv2/act/Mul), /model.4/m/m.0/Add)

/model.4/m/m.1/cv1/conv/Conv

PWN(PWN(/model.4/m/m.1/cv1/act/Sigmoid), /model.4/m/m.1/cv1/act/Mul)

/model.4/m/m.1/cv2/conv/Conv

PWN(PWN(PWN(/model.4/m/m.1/cv2/act/Sigmoid), /model.4/m/m.1/cv2/act/Mul), /model.4/m/m.1/Add)

PWN(PWN(/model.4/cv2/act/Sigmoid), /model.4/cv2/act/Mul)

/model.4/cv3/conv/Conv

PWN(PWN(/model.4/cv3/act/Sigmoid), /model.4/cv3/act/Mul)

/model.5/conv/Conv

PWN(PWN(/model.5/act/Sigmoid), /model.5/act/Mul)

/model.6/cv1/conv/Conv || /model.6/cv2/conv/Conv

PWN(PWN(/model.6/cv1/act/Sigmoid), /model.6/cv1/act/Mul)

/model.6/m/m.0/cv1/conv/Conv

PWN(PWN(/model.6/m/m.0/cv1/act/Sigmoid), /model.6/m/m.0/cv1/act/Mul)

/model.6/m/m.0/cv2/conv/Conv

PWN(PWN(PWN(/model.6/m/m.0/cv2/act/Sigmoid), /model.6/m/m.0/cv2/act/Mul), /model.6/m/m.0/Add)

/model.6/m/m.1/cv1/conv/Conv

PWN(PWN(/model.6/m/m.1/cv1/act/Sigmoid), /model.6/m/m.1/cv1/act/Mul)

/model.6/m/m.1/cv2/conv/Conv

PWN(PWN(PWN(/model.6/m/m.1/cv2/act/Sigmoid), /model.6/m/m.1/cv2/act/Mul), /model.6/m/m.1/Add)

/model.6/m/m.2/cv1/conv/Conv

PWN(PWN(/model.6/m/m.2/cv1/act/Sigmoid), /model.6/m/m.2/cv1/act/Mul)

/model.6/m/m.2/cv2/conv/Conv

PWN(PWN(PWN(/model.6/m/m.2/cv2/act/Sigmoid), /model.6/m/m.2/cv2/act/Mul), /model.6/m/m.2/Add)

PWN(PWN(/model.6/cv2/act/Sigmoid), /model.6/cv2/act/Mul)

/model.6/cv3/conv/Conv

PWN(PWN(/model.6/cv3/act/Sigmoid), /model.6/cv3/act/Mul)

/model.7/conv/Conv

PWN(PWN(/model.7/act/Sigmoid), /model.7/act/Mul)

/model.8/cv1/conv/Conv || /model.8/cv2/conv/Conv

PWN(PWN(/model.8/cv1/act/Sigmoid), /model.8/cv1/act/Mul)

/model.8/m/m.0/cv1/conv/Conv

PWN(PWN(/model.8/m/m.0/cv1/act/Sigmoid), /model.8/m/m.0/cv1/act/Mul)

/model.8/m/m.0/cv2/conv/Conv

PWN(PWN(PWN(/model.8/m/m.0/cv2/act/Sigmoid), /model.8/m/m.0/cv2/act/Mul), /model.8/m/m.0/Add)

PWN(PWN(/model.8/cv2/act/Sigmoid), /model.8/cv2/act/Mul)

/model.8/cv3/conv/Conv

PWN(PWN(/model.8/cv3/act/Sigmoid), /model.8/cv3/act/Mul)

/model.9/cv1/conv/Conv

PWN(PWN(/model.9/cv1/act/Sigmoid), /model.9/cv1/act/Mul)

/model.9/m/MaxPool

/model.9/m_1/MaxPool

/model.9/m_2/MaxPool

/model.9/cv1/act/Mul_output_0 copy

/model.9/m/MaxPool_output_0 copy

/model.9/m_1/MaxPool_output_0 copy

/model.9/cv2/conv/Conv

PWN(PWN(/model.9/cv2/act/Sigmoid), /model.9/cv2/act/Mul)

/model.10/conv/Conv

PWN(PWN(/model.10/act/Sigmoid), /model.10/act/Mul)

/model.11/Resize

/model.11/Resize_output_0 copy

/model.13/cv1/conv/Conv || /model.13/cv2/conv/Conv

PWN(PWN(/model.13/cv1/act/Sigmoid), /model.13/cv1/act/Mul)

/model.13/m/m.0/cv1/conv/Conv

PWN(PWN(/model.13/m/m.0/cv1/act/Sigmoid), /model.13/m/m.0/cv1/act/Mul)

/model.13/m/m.0/cv2/conv/Conv

PWN(PWN(/model.13/m/m.0/cv2/act/Sigmoid), /model.13/m/m.0/cv2/act/Mul)

PWN(PWN(/model.13/cv2/act/Sigmoid), /model.13/cv2/act/Mul)

/model.13/cv3/conv/Conv

PWN(PWN(/model.13/cv3/act/Sigmoid), /model.13/cv3/act/Mul)

/model.14/conv/Conv

PWN(PWN(/model.14/act/Sigmoid), /model.14/act/Mul)

/model.15/Resize

/model.15/Resize_output_0 copy

/model.17/cv1/conv/Conv || /model.17/cv2/conv/Conv

PWN(PWN(/model.17/cv1/act/Sigmoid), /model.17/cv1/act/Mul)

/model.17/m/m.0/cv1/conv/Conv

PWN(PWN(/model.17/m/m.0/cv1/act/Sigmoid), /model.17/m/m.0/cv1/act/Mul)

/model.17/m/m.0/cv2/conv/Conv

PWN(PWN(/model.17/m/m.0/cv2/act/Sigmoid), /model.17/m/m.0/cv2/act/Mul)

PWN(PWN(/model.17/cv2/act/Sigmoid), /model.17/cv2/act/Mul)

/model.17/cv3/conv/Conv

PWN(PWN(/model.17/cv3/act/Sigmoid), /model.17/cv3/act/Mul)

/model.18/conv/Conv

PWN(PWN(/model.18/act/Sigmoid), /model.18/act/Mul)

/model.14/act/Mul_output_0 copy

/model.20/cv1/conv/Conv || /model.20/cv2/conv/Conv

PWN(PWN(/model.20/cv1/act/Sigmoid), /model.20/cv1/act/Mul)

/model.20/m/m.0/cv1/conv/Conv

PWN(PWN(/model.20/m/m.0/cv1/act/Sigmoid), /model.20/m/m.0/cv1/act/Mul)

/model.20/m/m.0/cv2/conv/Conv

PWN(PWN(/model.20/m/m.0/cv2/act/Sigmoid), /model.20/m/m.0/cv2/act/Mul)

PWN(PWN(/model.20/cv2/act/Sigmoid), /model.20/cv2/act/Mul)

/model.20/cv3/conv/Conv

PWN(PWN(/model.20/cv3/act/Sigmoid), /model.20/cv3/act/Mul)

/model.21/conv/Conv

PWN(PWN(/model.21/act/Sigmoid), /model.21/act/Mul)

/model.10/act/Mul_output_0 copy

/model.23/cv1/conv/Conv || /model.23/cv2/conv/Conv

PWN(PWN(/model.23/cv1/act/Sigmoid), /model.23/cv1/act/Mul)

/model.23/m/m.0/cv1/conv/Conv

PWN(PWN(/model.23/m/m.0/cv1/act/Sigmoid), /model.23/m/m.0/cv1/act/Mul)

/model.23/m/m.0/cv2/conv/Conv

PWN(PWN(/model.23/m/m.0/cv2/act/Sigmoid), /model.23/m/m.0/cv2/act/Mul)

PWN(PWN(/model.23/cv2/act/Sigmoid), /model.23/cv2/act/Mul)

/model.23/cv3/conv/Conv

PWN(PWN(/model.23/cv3/act/Sigmoid), /model.23/cv3/act/Mul)

/model.24/m.0/Conv

/model.24/Reshape + /model.24/Transpose

PWN(/model.24/Sigmoid)

/model.24/Split

/model.24/Split_0

/model.24/Split_1

/model.24/Constant_2_output_0

PWN(PWN(/model.24/Constant_1_output_0 + (Unnamed Layer* 204) [Shuffle] + /model.24/Mul, /model.24/Add), /model.24/Constant_3_output_0 + (Unnamed Layer* 209) [Shuffle] + /model.24/Mul_1)

/model.24/Constant_6_output_0

PWN(PWN(/model.24/Constant_5_output_0 + (Unnamed Layer* 215) [Shuffle], PWN(/model.24/Constant_4_output_0 + (Unnamed Layer* 212) [Shuffle] + /model.24/Mul_2, /model.24/Pow)), /model.24/Mul_3)

/model.24/Mul_1_output_0 copy

/model.24/Mul_3_output_0 copy

/model.24/Reshape_1

/model.24/Reshape_1_copy_output

/model.24/m.1/Conv

/model.24/Reshape_2 + /model.24/Transpose_1

PWN(/model.24/Sigmoid_1)

/model.24/Split_1_4

/model.24/Split_1_5

/model.24/Split_1_6

/model.24/Constant_10_output_0

PWN(PWN(/model.24/Constant_9_output_0 + (Unnamed Layer* 229) [Shuffle] + /model.24/Mul_4, /model.24/Add_1), /model.24/Constant_11_output_0 + (Unnamed Layer* 234) [Shuffle] + /model.24/Mul_5)

/model.24/Constant_14_output_0

PWN(PWN(/model.24/Constant_13_output_0 + (Unnamed Layer* 240) [Shuffle], PWN(/model.24/Constant_12_output_0 + (Unnamed Layer* 237) [Shuffle] + /model.24/Mul_6, /model.24/Pow_1)), /model.24/Mul_7)

/model.24/Mul_5_output_0 copy

/model.24/Mul_7_output_0 copy

/model.24/Reshape_3

/model.24/Reshape_3_copy_output

/model.24/m.2/Conv

/model.24/Reshape_4 + /model.24/Transpose_2

PWN(/model.24/Sigmoid_2)

/model.24/Split_2

/model.24/Split_2_9

/model.24/Split_2_10

/model.24/Constant_18_output_0

PWN(PWN(/model.24/Constant_17_output_0 + (Unnamed Layer* 254) [Shuffle] + /model.24/Mul_8, /model.24/Add_2), /model.24/Constant_19_output_0 + (Unnamed Layer* 259) [Shuffle] + /model.24/Mul_9)

/model.24/Constant_22_output_0

PWN(PWN(/model.24/Constant_21_output_0 + (Unnamed Layer* 265) [Shuffle], PWN(/model.24/Constant_20_output_0 + (Unnamed Layer* 262) [Shuffle] + /model.24/Mul_10, /model.24/Pow_2)), /model.24/Mul_11)

/model.24/Mul_9_output_0 copy

/model.24/Mul_11_output_0 copy

/model.24/Reshape_5

/model.24/Reshape_5_copy_output

Bindings:

images

output0

[03/31/2024-19:26:32] [I] Starting inference

[03/31/2024-19:26:35] [I] Warmup completed 15 queries over 200 ms

[03/31/2024-19:26:35] [I] Timing trace has 220 queries over 3.03217 s

[03/31/2024-19:26:35] [I]

[03/31/2024-19:26:35] [I] === Trace details ===

[03/31/2024-19:26:35] [I] Trace averages of 10 runs:

[03/31/2024-19:26:35] [I] Average on 10 runs - GPU latency: 12.1579 ms - Host latency: 12.6706 ms (enqueue 1.08868 ms)

[03/31/2024-19:26:35] [I] Average on 10 runs - GPU latency: 11.4727 ms - Host latency: 11.992 ms (enqueue 1.09192 ms)

[03/31/2024-19:26:35] [I] Average on 10 runs - GPU latency: 11.6241 ms - Host latency: 12.1402 ms (enqueue 1.22079 ms)

[03/31/2024-19:26:35] [I] Average on 10 runs - GPU latency: 12.0684 ms - Host latency: 12.6277 ms (enqueue 1.45154 ms)

[03/31/2024-19:26:35] [I] Average on 10 runs - GPU latency: 13.4649 ms - Host latency: 14.0317 ms (enqueue 1.39036 ms)

[03/31/2024-19:26:35] [I] Average on 10 runs - GPU latency: 13.536 ms - Host latency: 14.0795 ms (enqueue 1.43072 ms)

[03/31/2024-19:26:35] [I] Average on 10 runs - GPU latency: 13.9222 ms - Host latency: 14.4413 ms (enqueue 1.28059 ms)

[03/31/2024-19:26:35] [I] Average on 10 runs - GPU latency: 13.2781 ms - Host latency: 13.8023 ms (enqueue 1.14445 ms)

[03/31/2024-19:26:35] [I] Average on 10 runs - GPU latency: 13.1395 ms - Host latency: 13.65 ms (enqueue 1.16509 ms)

[03/31/2024-19:26:35] [I] Average on 10 runs - GPU latency: 13.0399 ms - Host latency: 13.5614 ms (enqueue 1.11987 ms)

[03/31/2024-19:26:35] [I] Average on 10 runs - GPU latency: 13.0849 ms - Host latency: 13.5952 ms (enqueue 1.12455 ms)

[03/31/2024-19:26:35] [I] Average on 10 runs - GPU latency: 13.4768 ms - Host latency: 14.0196 ms (enqueue 1.15608 ms)

[03/31/2024-19:26:35] [I] Average on 10 runs - GPU latency: 13.5992 ms - Host latency: 14.1209 ms (enqueue 1.38413 ms)

[03/31/2024-19:26:35] [I] Average on 10 runs - GPU latency: 13.5926 ms - Host latency: 14.1487 ms (enqueue 1.13781 ms)

[03/31/2024-19:26:35] [I] Average on 10 runs - GPU latency: 13.5007 ms - Host latency: 14.0171 ms (enqueue 1.29099 ms)

[03/31/2024-19:26:35] [I] Average on 10 runs - GPU latency: 13.5047 ms - Host latency: 14.0203 ms (enqueue 1.75901 ms)

[03/31/2024-19:26:35] [I] Average on 10 runs - GPU latency: 13.5858 ms - Host latency: 14.1088 ms (enqueue 1.12991 ms)

[03/31/2024-19:26:35] [I] Average on 10 runs - GPU latency: 13.6431 ms - Host latency: 14.1659 ms (enqueue 1.10955 ms)

[03/31/2024-19:26:35] [I] Average on 10 runs - GPU latency: 13.5172 ms - Host latency: 14.0271 ms (enqueue 1.23049 ms)

[03/31/2024-19:26:35] [I] Average on 10 runs - GPU latency: 13.7 ms - Host latency: 14.229 ms (enqueue 2.15166 ms)

[03/31/2024-19:26:35] [I] Average on 10 runs - GPU latency: 13.5072 ms - Host latency: 14.0273 ms (enqueue 1.7925 ms)

[03/31/2024-19:26:35] [I] Average on 10 runs - GPU latency: 13.6215 ms - Host latency: 14.1377 ms (enqueue 1.28486 ms)

[03/31/2024-19:26:35] [I]

[03/31/2024-19:26:35] [I] === Performance summary ===

[03/31/2024-19:26:35] [I] Throughput: 72.5552 qps

[03/31/2024-19:26:35] [I] Latency: min = 11.75 ms, max = 14.8162 ms, mean = 13.7097 ms, median = 13.9788 ms, percentile(99%) = 14.739 ms

[03/31/2024-19:26:35] [I] Enqueue Time: min = 0.924103 ms, max = 5.29688 ms, mean = 1.31525 ms, median = 1.21631 ms, percentile(99%) = 3.40771 ms

[03/31/2024-19:26:35] [I] H2D Latency: min = 0.394531 ms, max = 0.620544 ms, mean = 0.414878 ms, median = 0.401672 ms, percentile(99%) = 0.545349 ms

[03/31/2024-19:26:35] [I] GPU Compute Time: min = 11.2486 ms, max = 14.2521 ms, mean = 13.1835 ms, median = 13.4702 ms, percentile(99%) = 14.2121 ms

[03/31/2024-19:26:35] [I] D2H Latency: min = 0.102539 ms, max = 0.208496 ms, mean = 0.111349 ms, median = 0.107422 ms, percentile(99%) = 0.158401 ms

[03/31/2024-19:26:35] [I] Total Host Walltime: 3.03217 s

[03/31/2024-19:26:35] [I] Total GPU Compute Time: 2.90037 s

[03/31/2024-19:26:35] [W] * GPU compute time is unstable, with coefficient of variance = 5.38162%.

[03/31/2024-19:26:35] [W] If not already in use, locking GPU clock frequency or adding --useSpinWait may improve the stability.

[03/31/2024-19:26:35] [I] Explanations of the performance metrics are printed in the verbose logs.

[03/31/2024-19:26:35] [I]

&&&& PASSED TensorRT.trtexec [TensorRT v8403] # trtexec.exe --loadEngine=E:/Skripsi_(Cepat_Lulus_Kasian_Bapak)/Dataset/Raspi_Mas_Aji/yolov5/bestdataterbaru.engine --dumpLayerInf
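The figures in the performance summary can be cross-checked with a little arithmetic. The sketch below copies the query count, host walltime, and latency line from the log above, parses the latency statistics with a regular expression, and recomputes the throughput. The parsing helper is my own and is not part of trtexec.

```python
import re

# Values copied from the trtexec log above.
QUERIES = 220
HOST_WALLTIME_S = 3.03217
LATENCY_LINE = ("Latency: min = 11.75 ms, max = 14.8162 ms, mean = 13.7097 ms, "
                "median = 13.9788 ms, percentile(99%) = 14.739 ms")


def parse_stats(line):
    """Turn 'name = value ms' pairs from a trtexec summary line into a dict."""
    pairs = re.findall(r"([\w()%]+) = ([\d.]+) ms", line)
    return {name: float(value) for name, value in pairs}


stats = parse_stats(LATENCY_LINE)
throughput_qps = QUERIES / HOST_WALLTIME_S
fps_from_mean_latency = 1000.0 / stats["mean"]

print(f"throughput: {throughput_qps:.2f} qps")
print(f"1000 / mean latency: {fps_from_mean_latency:.2f} per second")
```

With batch size 1, one query corresponds to one 640x640 input. Note that trtexec feeds random input and does not include image loading, pre-processing, or NMS, so an end-to-end detection pipeline will likely run slower than this figure.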

Use netron.app to visualize the exported ONNX model.

YOLOv5 Output Detect.pt model using netron.app

python detect.py --weights bestdataterbaru.pt

python detect.py --weights bestdataterbaru.engine

python detect.py --weights bestdataterbaru.onnx

In this experimental environment the TensorRT model can reach roughly 50 FPS; it is considerably more powerful because it makes full use of CUDA.
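An FPS figure like this can be measured with a simple timing loop. The sketch below is a generic harness under my own assumptions: run_once stands for one inference on one frame, and fake_inference is only a placeholder workload. For a GPU model, the call should block until the result is ready, otherwise the measurement will be too optimistic.

```python
import time


def measure_fps(run_once, warmup=5, iterations=50):
    """Call `run_once` repeatedly and return calls per second after warm-up."""
    for _ in range(warmup):
        run_once()  # warm-up calls are not timed
    start = time.perf_counter()
    for _ in range(iterations):
        run_once()
    elapsed = time.perf_counter() - start
    return iterations / elapsed


def fake_inference():
    """Stand-in workload; replace with a real model call on one frame."""
    sum(i * i for i in range(10_000))


print(f"{measure_fps(fake_inference):.1f} FPS")
```

Running the same harness against the .pt, .onnx, and .engine models on the same frames gives a like-for-like comparison.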

TensorRT supports various deep learning frameworks, including PyTorch and TensorFlow, allowing users to convert models trained in these frameworks to the TensorRT format for accelerated inference. In summary, TensorRT is a powerful tool for optimizing and accelerating deep learning inference on NVIDIA GPUs, offering a range of optimization techniques and tools for seamless integration into your deep learning workflow.

[1] TensorRT Getting Started in Detail: https://blog.csdn.net/IAMoldpan/article/details/117908232

[2] https://blog.csdn.net/weixin_43917589/article/details/122578198

[3] https://blog.csdn.net/qq_44523137/article/details/123178290?spm=1001.2014.3001.550