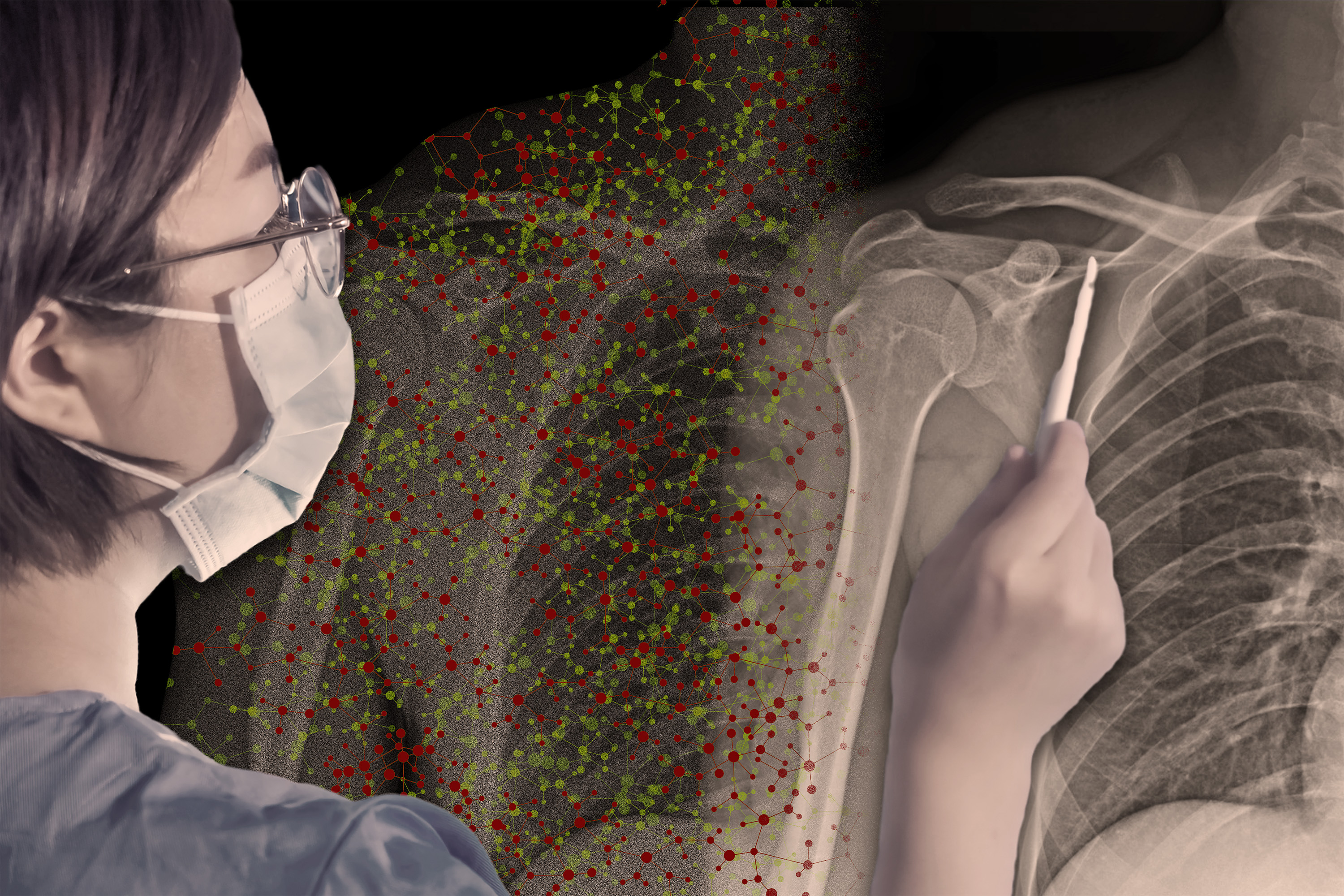

AI models that pick out patterns in images can often do it better than human eyes — but not always. If a radiologist uses an artificial intelligence model to help her determine whether a patient’s x-rays show signs of pneumonia, when should she trust the model’s advice and when should she ignore it?

A customized onboarding process could help that radiologist answer this question, according to researchers at MIT and the MIT-IBM Watson AI Lab. They designed a system that teaches a user when to collaborate with an AI assistant.

In this case, the training method might find situations where the radiologist trusts the model’s advice when she shouldn’t, because the model is wrong. The system automatically learns rules for how she should collaborate with the AI and describes them in natural language.

During onboarding, the radiologist practices working with the AI using training exercises based on these rules, receiving feedback on her performance and the AI’s performance.

The researchers found that this onboarding process led to about a 5 percent improvement in accuracy when humans and AI collaborated on an image prediction task. Their results also show that simply telling the user when to trust the AI, without training, led to worse performance.

Importantly, the researchers’ system is fully automated, so it learns to create the onboarding process based on data from the human and the AI performing a specific task. It can also adapt to different tasks, so it can be scaled up and used in many situations where humans and AI models work together, such as social media content moderation, writing, and programming.

“So often, people are given these AI tools to use without any training to help them understand when it’s going to be useful. We don’t do that with almost any other tool that people use; there’s almost always some kind of learning involved. But for AI, that seems to be missing. We try to address this problem from a methodological and behavioral perspective,” says Hussein Mozannar, a graduate student in the Social and Engineering Systems PhD program at the Institute for Data, Systems and Society (IDSS) and lead author of a paper on this training process.

The researchers envision that such onboarding will be a crucial part of training for medical professionals.

“One could imagine, for example, that doctors making treatment decisions with the help of artificial intelligence would first have to do training similar to what we propose. We may need to rethink everything from continuing medical education to how clinical trials are designed,” says senior author David Sontag, a professor of EECS, a member of the MIT-IBM Watson AI Lab and the MIT Jameel Clinic, and the leader of the Clinical Machine Learning Group of the Computer Science and Artificial Intelligence Laboratory (CSAIL).

Mozannar, who is also a researcher in the Clinical Machine Learning Group, is joined on the paper by Jimin J. Lee, an undergraduate in electrical engineering and computer science; Dennis Wei, a senior researcher at IBM Research; and Prasanna Sattigeri and Subhro Das, research staff members at the MIT-IBM Watson AI Lab. The paper will be presented at the Conference on Neural Information Processing Systems.

Training that evolves

Existing onboarding methods for human-AI collaboration often consist of training materials produced by human experts for specific use cases, which makes them difficult to update. Some related techniques rely on explanations, where the AI tells the user its confidence in each decision, but research has shown that explanations are rarely helpful, Mozannar says.

“The capabilities of the AI model are constantly evolving, so the use cases where a human could potentially benefit from it are increasing over time. At the same time, the user’s perception of the model continues to change. So we need an educational process that also evolves over time,” he adds.

To achieve this, their onboarding method is automatically learned from data. It is built from a dataset that contains many instances of a task, such as detecting the presence of a traffic light in a blurry image.

The first step of the system is to collect data about the human and the artificial intelligence performing this task. In this case, the human will try to predict, with the help of artificial intelligence, whether the blurry images contain traffic lights.

The system embeds these data points into a latent space, which is a representation of data in which similar data points lie closer together. It uses an algorithm to discover regions of this space where the human collaborates incorrectly with the AI. These regions capture cases where the human trusted the AI’s prediction but the prediction was wrong, and vice versa.

Perhaps the human mistakenly trusts the AI when the images show a highway at night.
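The region-discovery step can be illustrated with a minimal sketch. It is not the researchers’ algorithm: it swaps in a simple fixed-radius neighborhood search, and the names `Interaction`, `find_problem_regions`, `radius`, `min_points`, and `min_error_rate` are all hypothetical. It assumes each human-AI interaction already has an embedding and flags neighborhoods where the human often followed a wrong AI prediction or ignored a right one.

```python
from dataclasses import dataclass
from math import dist
from typing import List, Tuple


@dataclass
class Interaction:
    embedding: Tuple[float, ...]  # position in the latent space (assumed precomputed)
    followed_ai: bool             # did the human go with the AI's prediction?
    ai_correct: bool              # was the AI's prediction right?

    @property
    def miscalibrated(self) -> bool:
        # True when the human trusted a wrong AI or ignored a right one.
        return self.followed_ai != self.ai_correct


def find_problem_regions(data: List[Interaction], radius: float,
                         min_points: int = 3, min_error_rate: float = 0.6):
    """Return (center, member_indices, error_rate) for high-error neighborhoods."""
    regions, seen = [], set()
    for center in data:
        members = [i for i, d in enumerate(data)
                   if dist(center.embedding, d.embedding) <= radius]
        key = tuple(members)
        if len(members) < min_points or key in seen:
            continue  # too small, or the same neighborhood was already scored
        seen.add(key)
        rate = sum(data[i].miscalibrated for i in members) / len(members)
        if rate >= min_error_rate:
            regions.append((center.embedding, members, rate))
    regions.sort(key=lambda r: r[2], reverse=True)  # worst regions first
    return regions


# Toy data: the human follows the AI everywhere, but the AI is wrong in one cluster.
night_highway = [Interaction((0.1 * i, 0.0), True, False) for i in range(4)]
daytime = [Interaction((5.0 + 0.1 * i, 5.0), True, True) for i in range(4)]
regions = find_problem_regions(night_highway + daytime, radius=1.0)
```

In this toy data, only the first cluster is returned, because every interaction in it is a case of misplaced trust. A real system would need a learned embedding and a more careful search than a fixed radius.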

After the regions are discovered, a second algorithm uses a large language model to describe each region as a rule, using natural language. The algorithm iteratively refines that rule by finding counterexamples. It might describe this region as “ignore the AI when it’s a highway at night.”
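The counterexample loop can be sketched as follows, under loud assumptions. The language model is replaced by a hypothetical placeholder, `stand_in_describer`, and images are reduced to sets of tags so that a "rule" can be checked mechanically. None of these names or representations come from the researchers’ system. The sketch only shows the iterate-on-counterexamples pattern.

```python
from typing import Callable, FrozenSet, List, Tuple

Example = FrozenSet[str]  # an image summarized as a set of tags (an assumption)
Rule = FrozenSet[str]     # a rule matches any example that has all of its tags


def matches(rule: Rule, example: Example) -> bool:
    return rule <= example


def stand_in_describer(shown_inside: List[Example],
                       shown_outside: List[Example]) -> Rule:
    """Placeholder for the language model: keep the tags shared by every
    in-region example shown so far. It ignores the out-of-region examples."""
    return frozenset.intersection(*shown_inside)


def counterexamples(rule: Rule, inside: List[Example],
                    outside: List[Example]) -> Tuple[List[Example], List[Example]]:
    missed = [e for e in inside if not matches(rule, e)]  # in region, not covered
    leaked = [e for e in outside if matches(rule, e)]     # outside, wrongly covered
    return missed, leaked


def refine_rule(inside: List[Example], outside: List[Example],
                describe: Callable = stand_in_describer,
                max_rounds: int = 5) -> Rule:
    shown_inside, shown_outside = [inside[0]], []
    rule = describe(shown_inside, shown_outside)
    for _ in range(max_rounds):
        missed, leaked = counterexamples(rule, inside, outside)
        if not missed and not leaked:
            break  # the rule describes the region with no counterexamples left
        shown_inside += missed[:1]    # show one counterexample of each kind
        shown_outside += leaked[:1]
        rule = describe(shown_inside, shown_outside)
    return rule


inside = [frozenset({"highway", "night", "rain"}),
          frozenset({"highway", "night"}),
          frozenset({"highway", "night", "fog"})]
outside = [frozenset({"highway", "day"}), frozenset({"city", "night"})]
rule = refine_rule(inside, outside)
```

Here the first draft of the rule is too specific (it requires rain), one counterexample corrects it, and the loop stops at the tags `highway` and `night`, which mirrors the “highway at night” rule in the text.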

These rules are used to build training exercises. The onboarding system shows the human an example, in this case a blurry highway scene at night, along with the AI’s prediction, and asks the user whether the image shows traffic lights. The user can answer yes, no, or use the AI’s prediction.

If the human makes a mistake, they are shown the correct answer and performance statistics for the human and the AI on these instances of the task. The system does this for each region, and at the end of the training process, repeats the exercises the human got wrong.
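The exercise flow described above can be sketched as a short loop. This is an illustrative simplification, not the researchers’ implementation: `Exercise`, `SimulatedLearner`, and `run_onboarding` are hypothetical names, the second rule in the example is invented for the demo, and the performance statistics shown to the user are left out.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Exercise:
    region_rule: str     # natural-language rule for the region
    ai_prediction: bool  # the AI's answer to "does the image show a traffic light?"
    truth: bool          # ground-truth label


def resolve(answer: str, ex: Exercise) -> bool:
    """Map the three allowed answers ('yes', 'no', 'use_ai') to a prediction."""
    return ex.ai_prediction if answer == "use_ai" else answer == "yes"


class SimulatedLearner:
    """Toy user: defers to the AI until feedback says a region is unreliable."""

    def __init__(self) -> None:
        self.distrusted = set()

    def answer(self, ex: Exercise) -> str:
        if ex.region_rule in self.distrusted:
            return "no" if ex.ai_prediction else "yes"  # go against the AI
        return "use_ai"

    def feedback(self, ex: Exercise) -> None:
        self.distrusted.add(ex.region_rule)


def run_onboarding(exercises: List[Exercise],
                   learner: SimulatedLearner) -> Tuple[List[Exercise], List[Exercise]]:
    first_pass = []
    for ex in exercises:  # one exercise per region
        if resolve(learner.answer(ex), ex) != ex.truth:
            learner.feedback(ex)  # show the correct answer and the rule
            first_pass.append(ex)
    # At the end, repeat only the exercises the learner got wrong.
    second_pass = [ex for ex in first_pass
                   if resolve(learner.answer(ex), ex) != ex.truth]
    return first_pass, second_pass


exercises = [
    Exercise("ignore the AI when it's a highway at night", True, False),
    Exercise("rely on the AI for daytime city streets", True, True),  # invented rule
]
first_pass_mistakes, second_pass_mistakes = run_onboarding(exercises, SimulatedLearner())
```

The simulated learner gets the night-highway exercise wrong once, receives feedback, and answers it correctly on the repeat pass.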

“After that, the human has learned something about these regions that we hope they will take away in the future to make more accurate predictions,” says Mozannar.

Onboarding enhances accuracy

The researchers tested this system with users on two tasks: detecting traffic lights in blurry images and answering multiple-choice questions from several fields (such as biology, philosophy, and computer science).

They first showed users a card with information about the AI model, how it was trained, and a breakdown of its performance across broad categories. Users were divided into five groups: Some were shown only the card, some went through the researchers’ onboarding process, some went through a baseline onboarding process, some went through the researchers’ onboarding process and were given recommendations on when they should or should not trust the AI, and others were given only the recommendations.

Only the researchers’ onboarding process without recommendations significantly improved users’ accuracy, boosting their performance on the traffic light prediction task by about 5 percent without slowing them down. However, onboarding was not as effective for the question-answering task. The researchers believe this is because the AI model, ChatGPT, provided explanations with each answer that conveyed whether it should be trusted.

But providing recommendations without onboarding had the opposite effect: users not only performed worse, they also took longer to make predictions.

“When you give someone only recommendations, they seem confused and don’t know what to do. It derails their process. Also, people don’t like being told what to do, so that’s a factor as well,” says Mozannar.

Providing recommendations alone could harm the user if those recommendations are wrong, he adds. With onboarding, on the other hand, the biggest limitation is the amount of available data. If there isn’t enough data, the onboarding stage won’t be as effective, he says.

In the future, he and his colleagues want to conduct larger studies to evaluate the short- and long-term effects of onboarding. They also want to leverage unlabeled data for the onboarding process and find methods to effectively reduce the number of regions without omitting important examples.

“People are adopting AI systems willy-nilly, and indeed AI offers great potential, but these AI agents still sometimes make mistakes. So it’s important for AI developers to devise methods that help people know when it’s safe to rely on AI suggestions,” says Dan Weld, professor emeritus in the Paul G. Allen School of Computer Science and Engineering at the University of Washington, who was not involved in this research. “Mozannar et al. have created an innovative method for identifying situations where AI is trustworthy and (most importantly) for describing them to humans in a way that leads to better human-AI team interactions.”

This work is funded, in part, by the MIT-IBM Watson AI Lab.