Speaking at the Nov. 28 “Generative AI: Shaping the Future” symposium during MIT’s inaugural Generative AI Week, keynote speaker and iRobot co-founder Rodney Brooks cautioned attendees against uncritically overestimating the potential of this emerging technology, which underpins increasingly powerful tools like OpenAI’s ChatGPT and Google’s Bard.

“Hype leads to hubris, and hubris leads to conceit, and conceit leads to failure,” warned Brooks, who is also an MIT professor emeritus, former director of the Computer Science and Artificial Intelligence Laboratory (CSAIL), and founder of Robust.AI.

“No one technology has ever surpassed everything else,” he added.

The symposium, which drew hundreds of attendees from academia and industry to the Institute’s Kresge Auditorium, featured messages of hope about the opportunities generative AI offers to make the world a better place, including through art and creativity, interspersed with cautionary tales of what could go wrong if these AI tools are not developed responsibly.

Generative AI is a term that describes machine learning models that learn to create new material resembling the data they were trained on. These models have demonstrated some incredible capabilities, such as the ability to produce human-like creative writing, translate languages, generate working computer code, or create realistic images from text prompts.

In her opening remarks to kick off the symposium, MIT President Sally Kornbluth highlighted several projects that faculty and students have undertaken to use generative AI to make a positive impact on the world. For example, the Axim Collaborative, an online education initiative started by MIT and Harvard, is exploring the educational aspects of generative AI to help underserved students.

The Institute also recently announced seed grants for 27 interdisciplinary faculty research projects focused on how artificial intelligence will transform people’s lives across society.

By hosting Generative AI Week, MIT hopes not only to showcase this kind of innovation, but also to create “collaborative collisions” among participants, Kornbluth said.

Collaboration among academia, policymakers, and industry will be critical if we are to safely integrate a rapidly evolving technology like generative AI in ways that are humane and help people solve problems, she told the audience.

“I honestly can’t think of a challenge more closely aligned with MIT’s mission. It’s a deep responsibility, but I have every confidence that we can face it if we face it head on and if we face it as a community,” she said.

While generative AI has the potential to help solve some of the planet’s most pressing problems, the emergence of these powerful machine learning models has blurred the line between science fiction and reality, CSAIL Director Daniela Rus said in her opening remarks. It’s no longer a question of whether we can build machines that produce new content, she said, but how we can use these tools to enhance businesses and ensure sustainability.

“Today, we will discuss the possibility of a future where generative AI exists not just as a technological marvel, but as a source of hope and a force for good,” said Rus, who is also the Andrew and Erna Viterbi Professor in the Department of Electrical Engineering and Computer Science.

But before the discussion delved into the potential of generative AI, attendees were asked to reflect on their own humanity as MIT professor Joshua Bennett read an original poem.

Bennett, a professor in MIT’s Department of Literature and Distinguished Chair in the Humanities, was asked to write a poem about what it means to be human and was inspired by his daughter, who was born three weeks ago.

The poem told of his experiences as a boy watching Star Trek with his father and touched on the importance of passing traditions on to the next generation.

In his keynote, Brooks set out to unpack some of the deep scientific questions surrounding generative AI, as well as explore what the technology can tell us about ourselves.

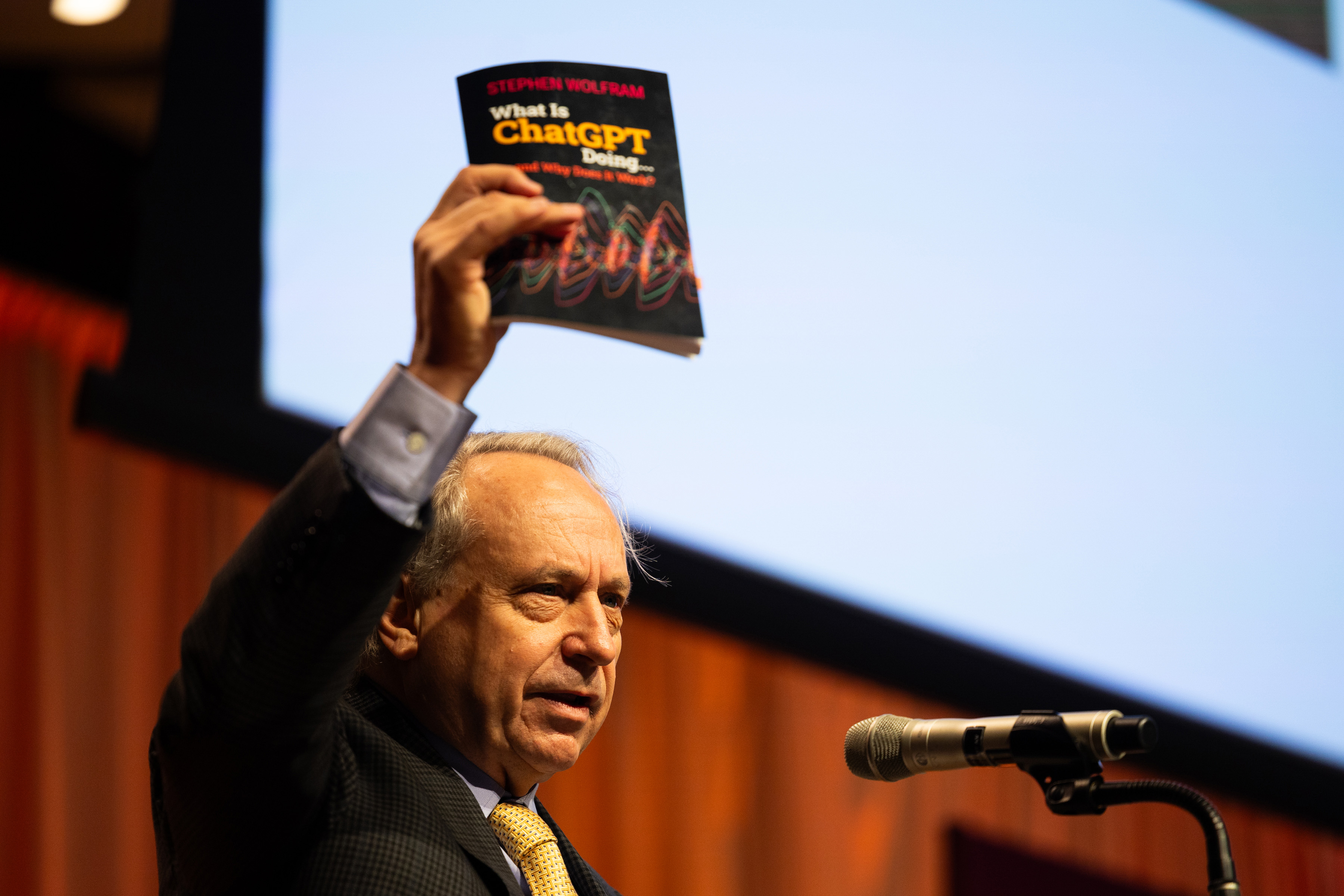

First, he tried to dispel some of the mystery swirling around generative AI tools like ChatGPT by explaining the basics of how these large language models work. ChatGPT, for example, generates text one word at a time, determining what the next word should be in the context of what it has already written. While a human might write a story by thinking in whole sentences, ChatGPT focuses only on the next word, Brooks explained.

ChatGPT 3.5 is built on a machine learning model that has 175 billion parameters and has been exposed to billions of pages of text on the web during training. (The latest iteration, ChatGPT 4, is even bigger.) It learns the associations between words in this huge body of text and uses that knowledge to suggest which word might come next when given a prompt.
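To make the one-word-at-a-time idea concrete, here is a deliberately tiny Python sketch. It is a toy built on stated assumptions, not how ChatGPT works: instead of a neural network with billions of learned parameters, it simply counts which word follows which in a small corpus (a bigram model), it looks only at the single previous word rather than everything written so far, and it always picks the most frequent follower. The function names `train_bigrams` and `generate` and the sample corpus are hypothetical, chosen only for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(text: str) -> dict[str, Counter]:
    """Learn crude word associations: count how often each word follows another."""
    words = text.lower().split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def generate(follows: dict[str, Counter], start: str, max_words: int = 8) -> list[str]:
    """Extend `start` one word at a time, always choosing the most frequent follower."""
    output = [start]
    for _ in range(max_words - 1):
        candidates = follows.get(output[-1])  # only the previous word is used as context
        if not candidates:
            break  # no known continuation for this word, so stop
        next_word, _count = candidates.most_common(1)[0]
        output.append(next_word)
    return output


# Hypothetical toy corpus standing in for "billions of pages of text".
corpus = "the robot reads the poem and the robot writes the sonnet"
model = train_bigrams(corpus)
print(" ".join(generate(model, "the", max_words=5)))
```

Running it prints `the robot reads the robot`. The output starts repeating almost immediately because the toy sees only one word of context, which hints at why real language models condition each choice on much more of what they have already written.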

The model has demonstrated some incredible capabilities, including the ability to write a sonnet about robots in the style of Shakespeare’s famous Sonnet 18. During his talk, Brooks presented the sonnet he asked ChatGPT to write side by side with his own sonnet.

But while researchers still don’t fully understand how these models work, Brooks reassured the audience that the seemingly incredible abilities of generative AI aren’t magic, nor do they mean these models can do everything.

His biggest fears about generative AI don’t revolve around models that might one day surpass human intelligence. Instead, he’s more concerned about researchers who might throw away decades of excellent work that was nearing a breakthrough, just to jump on shiny new advances in generative AI; venture capital firms that blindly flock to technologies that can yield the highest margins; or the possibility that an entire generation of engineers will forget about other forms of software and AI.

At the end of the day, those who believe generative AI can solve the world’s problems and those who believe it will only create new problems have at least one thing in common: Both groups tend to overestimate the technology, he said.

“What’s the conceit with generative AI? The conceit is that it will somehow lead to artificial general intelligence. By itself, it’s not,” Brooks said.

Following Brooks’ presentation, a panel of MIT faculty spoke about their work using generative AI and discussed future developments, important but underexplored research topics, and the regulatory and policy challenges of AI.

The panel consisted of Jacob Andreas, associate professor in MIT’s Department of Electrical Engineering and Computer Science (EECS) and a member of CSAIL; Antonio Torralba, the Delta Electronics Professor of EECS and a member of CSAIL; Ev Fedorenko, associate professor of brain and cognitive sciences and a researcher at the McGovern Institute for Brain Research at MIT; and Armando Solar-Lezama, Distinguished Professor of Computer Science and associate director of CSAIL. It was moderated by William T. Freeman, the Thomas and Gerd Perkins Professor of EECS and a member of CSAIL.

The panelists discussed several possible future research directions for generative AI, including incorporating perceptual systems that draw on human senses like touch and smell, rather than focusing primarily on language and images. The researchers also spoke about the importance of working with policymakers and the public to ensure that AI tools are produced and deployed responsibly.

“One of the big risks with generative AI today is the risk of digital snake oil. There is a big risk that a lot of products will come out that claim to do miraculous things, but in the long run they could be very harmful,” said Solar-Lezama.

The morning session concluded with an excerpt from the 1925 science fiction novel “Metropolis,” read by senior Joy Ma, a physics and theater major, followed by a roundtable discussion on the future of generative AI. The discussion included Joshua Tenenbaum, a professor in the Department of Brain and Cognitive Sciences and a member of CSAIL; Dina Katabi, the Thuan and Nicole Pham Professor in EECS and a principal investigator in CSAIL and MIT’s Jameel Clinic; and Max Tegmark, professor of physics. It was moderated by Daniela Rus.

One focus of the discussion was the possibility of developing AI models that can go beyond what we can do as humans, such as tools that can sense someone’s emotions by using electromagnetic signals to understand how a person’s breathing and heart rate change.

But a key to safely integrating AI into the real world is making sure it can be trusted, Tegmark said. If we know that an AI tool will meet the specifications we insist on, then “we no longer have to fear building really powerful systems that go out and do things for us in the world,” he said.