AI observability in action

Many organizations start out with good intentions, creating promising AI solutions, but these initial applications often end up disconnected and unobservable. For example, a predictive maintenance system and a GenAI docsbot might operate in different regions, leading to sprawl. AI observability is the ability to monitor and understand how generative and predictive AI and machine learning models behave throughout their lifecycle within an ecosystem. This is crucial in areas such as Machine Learning Operations (MLOps) and especially Large Language Model Operations (LLMOps).

AI observability aligns with DevOps and IT functions, ensuring that generative and predictive AI models can be seamlessly integrated and perform well. It enables tracking of the metrics, performance issues, and costs generated by AI models, providing a comprehensive view through an organization’s observability platform. It also helps teams build even better AI solutions over time by storing and annotating production data, which can then be used to retrain predictive models or optimize models already in production. This continuous retraining process helps maintain and improve the accuracy and efficiency of AI models.

However, it is not without its challenges. The sprawl of architectures, users, databases, and models now overwhelms operations teams: more deployments mean more pieces of infrastructure and modeling to connect, and even more effort goes into ongoing maintenance and updates. Handling sprawl is impossible without an open, flexible platform that acts as your organization’s central command and control center for managing, monitoring, and governing your entire AI landscape at scale.

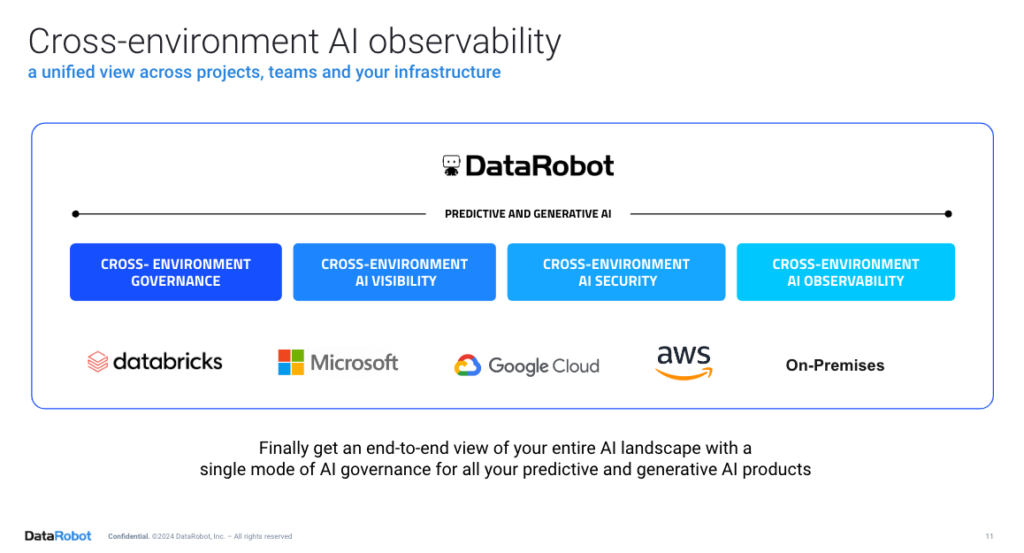

Most companies don’t stick to just one infrastructure stack, and things may change in the future. What is really important to them is that the production, governance and monitoring of the AI remain consistent.

DataRobot is committed to cross-environment observability – cloud, hybrid and on-prem. In terms of AI workflows, this means you can choose where and how to develop and deploy your AI projects, while maintaining full insight and control over them – even at the edge. It’s like seeing everything in 360 degrees.

DataRobot offers 10 main out-of-the-box elements to achieve a successful AI observability practice:

- Track metrics: Monitor real-time performance metrics and troubleshoot issues.

- Model Management: Use tools to track and manage models throughout their lifecycle.

- Visualization: Provide dashboards for insight and analysis of model performance.

- Automation: Automate build, governance, deployment, monitoring, retraining stages in the AI lifecycle for smooth workflows.

- Data quality and interpretation: Ensure data quality and explain model decisions.

- Advanced algorithms: Use out-of-the-box metrics and guards to improve model capabilities.

- User experience: Improve user experience with GUI and API flows.

- AIOps and integration: Integrate with AIOps and other solutions for unified management.

- API and Telemetry: Use APIs for seamless integration and collection of telemetry data.

- Practice and Workflows: Build a supportive ecosystem around AI observability and take action on what is observed.

AI Observability In Action

Every industry is implementing GenAI chatbots across various functions for different purposes. Examples include increasing efficiency, improving quality of service, speeding up response times, and more.

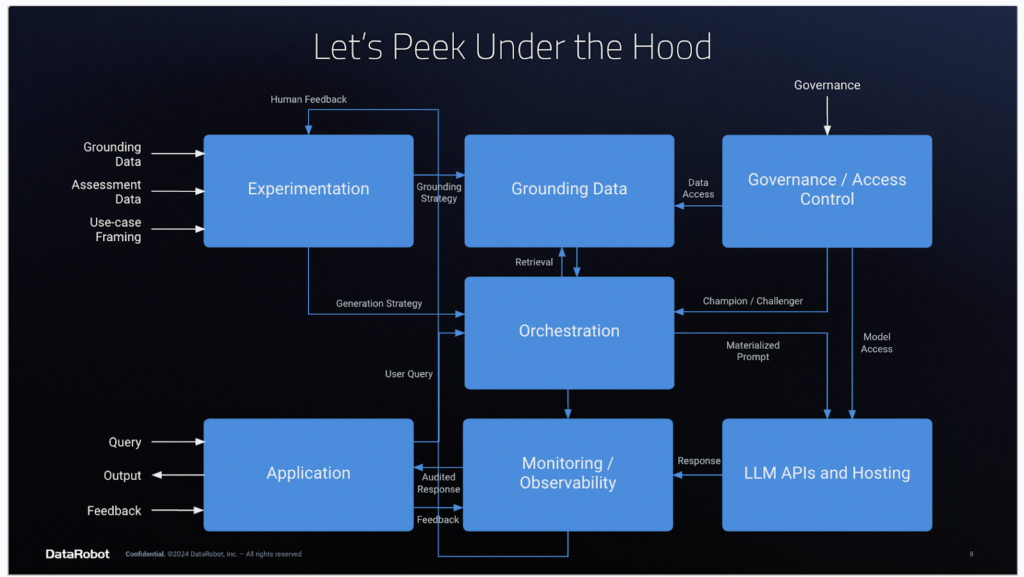

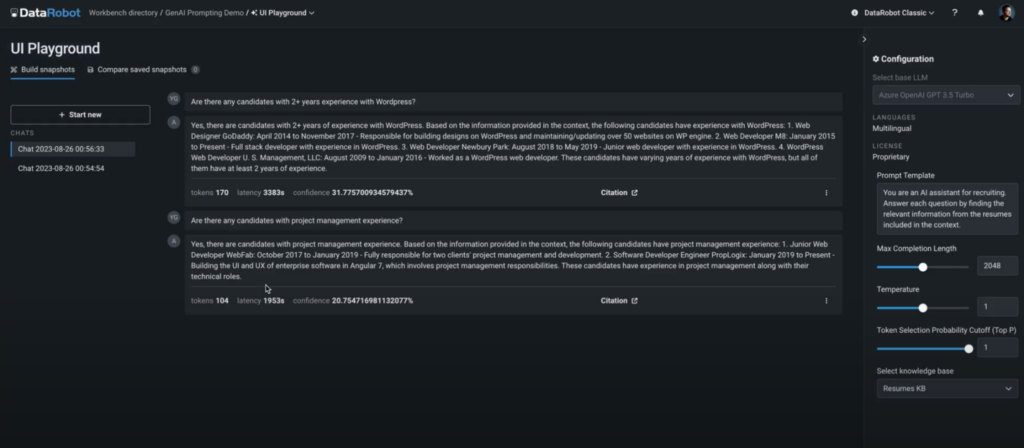

Let’s explore deploying a GenAI chatbot in an organization and discuss how to achieve AI observability using an AI platform like DataRobot.

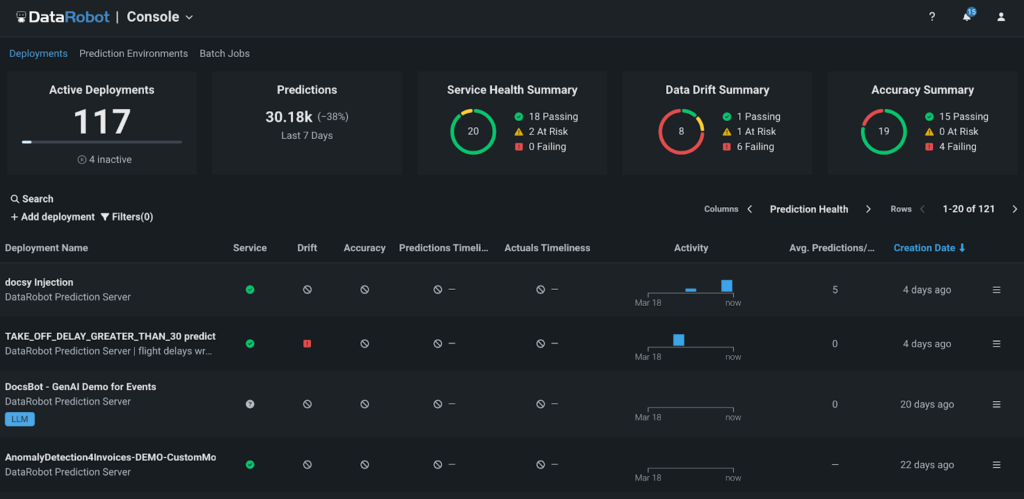

Step 1: Collect relevant traces and metrics

DataRobot and its MLOps capabilities provide world-class scalability for model development. Models across the organization, regardless of where they were built, can be monitored and managed on a single platform. In addition to DataRobot models, open source models developed outside of DataRobot MLOps can also be managed and monitored by the DataRobot platform.

AI observability capabilities within the DataRobot AI platform ensure that organizations know when something goes wrong, understand why it went wrong, and can intervene to continuously optimize the performance of AI models. By tracking service health, drift, prediction data, training data, and custom metrics, businesses can keep their models and predictions relevant in a rapidly changing world.
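To make drift tracking concrete, here is a minimal sketch of one widely used drift measure, the Population Stability Index (PSI), which compares the distribution of a feature in training data with its distribution in production. This is an illustration under stated assumptions, not a description of how the DataRobot platform computes drift internally; the function name, bin count, and smoothing constant are all choices made for this example.

```python
import math
from typing import List, Sequence


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between a training sample (expected) and a production sample (actual)."""
    lo, hi = min(expected), max(expected)
    # Equal-width bins over the training range; fall back to 1.0 if the range is empty.
    width = (hi - lo) / bins or 1.0

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp values outside the training range into the first or last bin.
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        # eps avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), eps) for c in counts]

    p = proportions(expected)
    q = proportions(actual)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


# Toy data: production values shifted toward the upper half of the training range.
training = [i / 100 for i in range(100)]
production = [0.5 + i / 200 for i in range(100)]
drift_score = population_stability_index(training, production)
```

A common rule of thumb treats a PSI above roughly 0.2 as a sign of meaningful drift, but the right alert threshold depends on the feature and the use case.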

Step 2: Data analysis

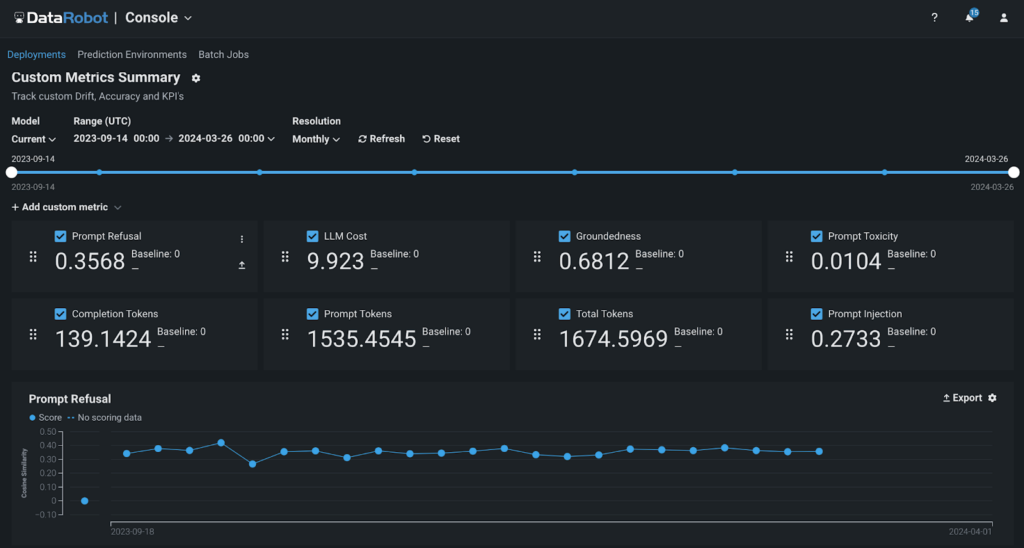

With DataRobot, you can use pre-built dashboards to track traditional data science metrics or create your own custom metrics to address specific aspects of your business.

These custom metrics can be developed either from scratch or using a DataRobot template. Use these metrics for models built or hosted on or off DataRobot.

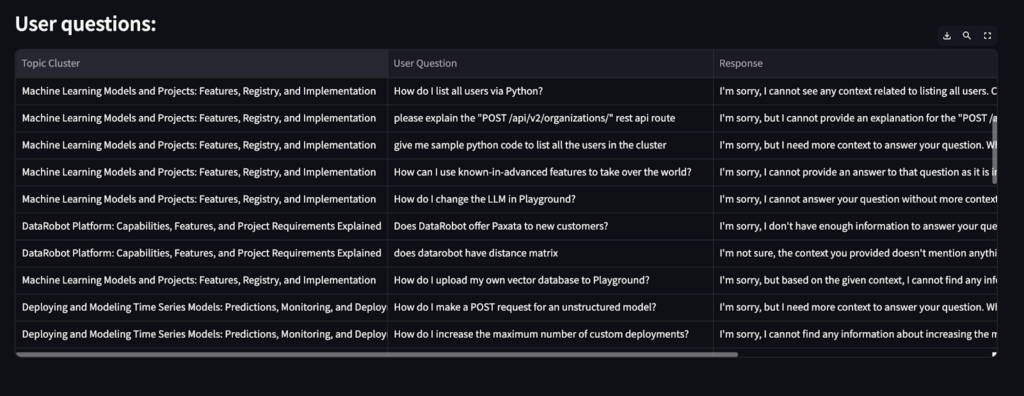

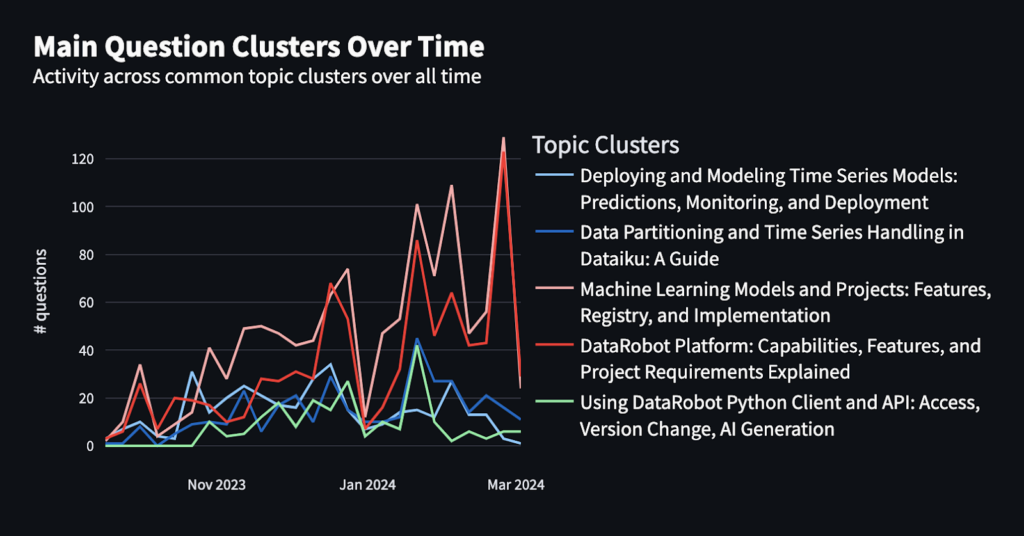

The “Outright Rejection” metric represents the percentage of chatbot responses that the LLM could not handle. While this metric provides valuable information, what the business really needs are actionable steps to minimize it.
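As a rough illustration of how such a percentage could be computed from logged chatbot responses, consider the sketch below. It assumes refusals can be recognized by a few marker phrases; the phrases in `REFUSAL_MARKERS` and both function names are hypothetical and would need to match how your chatbot actually words its refusals.

```python
from typing import Iterable

# Hypothetical marker phrases; adapt them to the chatbot's real refusal wording.
REFUSAL_MARKERS = ("i don't know", "i cannot answer", "i'm not able to")


def is_refusal(response: str) -> bool:
    """Return True if the response contains any known refusal phrase."""
    text = response.lower()
    return any(marker in text for marker in REFUSAL_MARKERS)


def refusal_rate(responses: Iterable[str]) -> float:
    """Percentage of responses flagged as refusals (0.0 for an empty log)."""
    items = list(responses)
    if not items:
        return 0.0
    refused = sum(is_refusal(r) for r in items)
    return 100.0 * refused / len(items)


sample_log = [
    "I don't know that.",
    "The warranty is 2 years.",
    "Sorry, I cannot answer this.",
    "We are open 9 to 5.",
]
rate = refusal_rate(sample_log)  # 2 of the 4 responses are refusals
```

Phrase matching is deliberately simple here; a production metric might instead rely on a classifier or on an explicit refusal flag emitted by the application.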

Guiding questions: Answer these to build a more complete understanding of the factors that contribute to these rejections:

- Does the LLM have the right structure and data to answer the questions?

- Is there a pattern in question types, keywords or topics that the LLM can’t handle or struggles with?

- Are there feedback mechanisms to collect information from users about chatbot responses?
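The second question, whether certain keywords or topics recur among the questions the LLM could not handle, can be explored with something as simple as a keyword count. The sketch below is a lightweight stand-in for proper topic clustering, using only the Python standard library; the stopword list and the `top_keywords` helper are assumptions made for this example.

```python
import re
from collections import Counter
from typing import Iterable, List, Tuple

# Small illustrative stopword list; a real analysis would use a fuller one.
STOPWORDS = {
    "the", "a", "an", "is", "are", "do", "does", "how", "what",
    "can", "i", "my", "to", "for", "of", "in", "on", "you", "your",
}


def top_keywords(questions: Iterable[str], n: int = 5) -> List[Tuple[str, int]]:
    """Count in how many unanswered questions each keyword appears."""
    counts: Counter = Counter()
    for question in questions:
        # A set ensures each word is counted at most once per question.
        words = set(re.findall(r"[a-z']+", question.lower()))
        counts.update(w for w in words if w not in STOPWORDS and len(w) > 2)
    return counts.most_common(n)


unanswered = [
    "How do I reset my password?",
    "Password reset link is not working",
    "What is the refund policy?",
    "Can I get a refund for my order?",
]
keywords = top_keywords(unanswered, n=3)
```

Keywords that dominate the unanswered questions point to gaps in the knowledge base or in the prompt that are worth investigating first.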

User feedback loop: We can answer these questions by implementing a user feedback loop and building an application to find the “hidden information”.

Below is an example Streamlit application that provides insights into a sample of user questions and topic clusters for questions that the LLM could not answer.

Step 3: Take action based on the analysis

Now that you understand the data, you can take the following steps to significantly improve your chatbot’s performance:

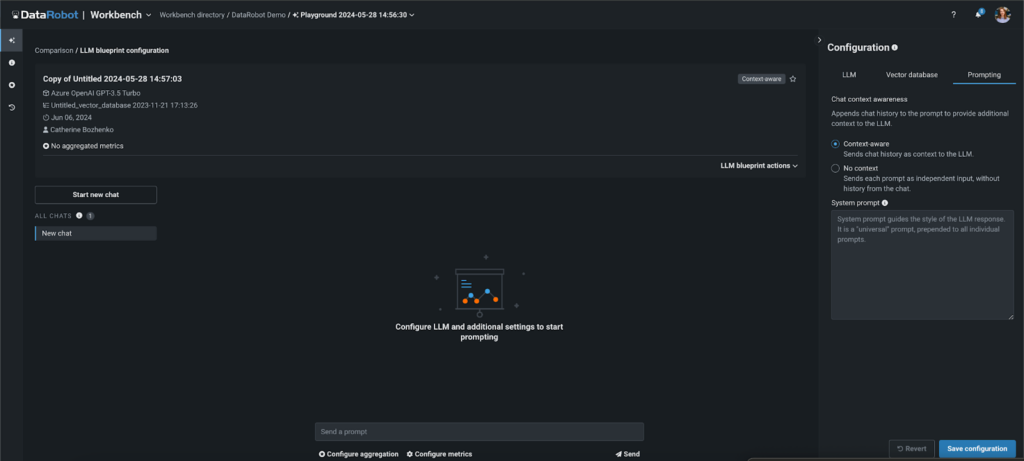

- Modify the prompt: Try different system prompts to get better and more accurate results.

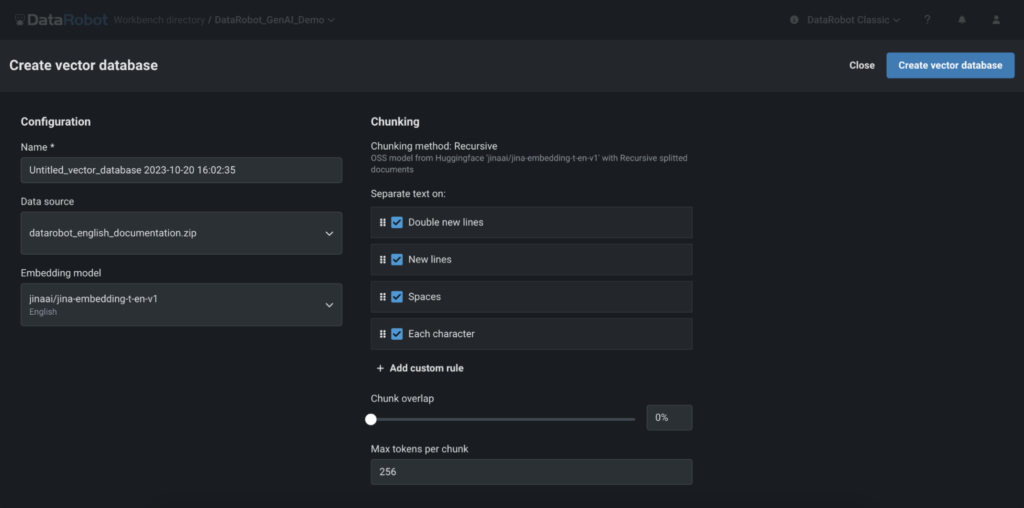

- Enhance your vector database: Identify the questions that the LLM didn’t have answers to, add that information to your knowledge base, and then retrain the LLM.

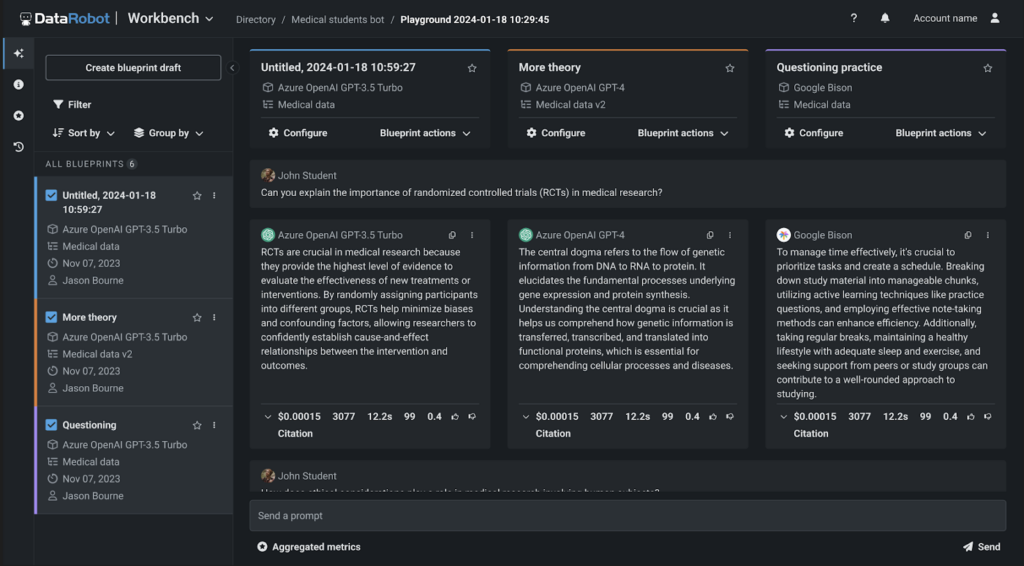

- Enhance or replace your LLM: Experiment with different configurations to tune your existing LLM for optimal performance.

Alternatively, evaluate other LLM strategies and compare their performance to determine if a replacement is needed.

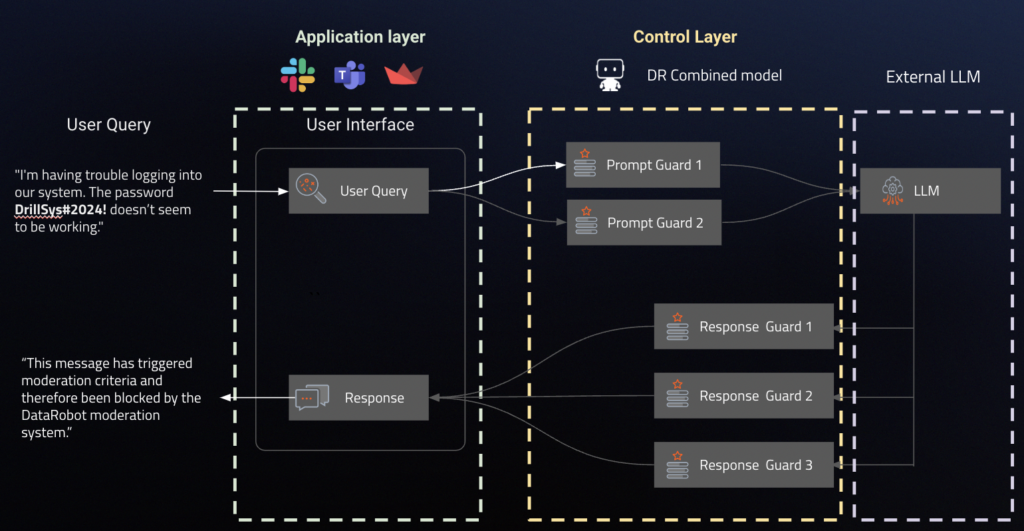

- Mitigate in real time or set the right guard models: Pair each generative model with a predictive AI guard model that assesses the quality of its output and filters out inappropriate or irrelevant questions.

This framework has wide application in use cases where accuracy and truthfulness are paramount. DataRobot provides a control layer that allows you to receive data from external applications, protect it with predictive models hosted inside or outside of DataRobot or with NeMo Guardrails, and call external LLMs to make predictions.
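The guard pattern described above can be sketched in a few lines. The example assumes the guard models and the LLM are available as plain Python callables; `guarded_answer` and the toy stand-ins below are hypothetical and do not correspond to any DataRobot or NeMo Guardrails API.

```python
from typing import Callable

FALLBACK = "Sorry, I can't help with that request."


def guarded_answer(
    question: str,
    input_guard: Callable[[str], float],
    call_llm: Callable[[str], str],
    output_guard: Callable[[str, str], float],
    threshold: float = 0.5,
) -> str:
    """Screen the question, call the LLM, then screen the answer.

    Both guards return a risk score in [0, 1]; a score at or above
    the threshold blocks the request or the response.
    """
    if input_guard(question) >= threshold:
        return FALLBACK
    answer = call_llm(question)
    if output_guard(question, answer) >= threshold:
        return FALLBACK
    return answer


# Toy stand-ins for real guard models and a real LLM call.
def toy_input_guard(question: str) -> float:
    return 1.0 if "password of another user" in question.lower() else 0.0


def toy_llm(question: str) -> str:
    return "" if "weather" in question.lower() else "Our store opens at 9 am."


def toy_output_guard(question: str, answer: str) -> float:
    return 1.0 if not answer.strip() else 0.0  # treat empty answers as low quality
```

Keeping the guards as separate callables means each one can be monitored, retrained, or replaced independently of the LLM it protects.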

By following these steps, you can ensure a 360° view of all your AI components in production and that your chatbots remain efficient and reliable.

Summary

AI observability is essential to ensuring the efficient and reliable performance of AI models across an organization’s ecosystem. By leveraging the DataRobot platform, businesses maintain end-to-end oversight and control of their AI workflows, ensuring consistency and scalability.

Implementing strong observability practices not only helps identify and prevent problems in real time, but also helps continuously optimize and improve AI models, ultimately creating useful and secure applications.

By using the right tools and strategies, organizations can navigate the complexities of AI operations and realize the full potential of their AI infrastructure investments.

About the Authors

Atalia Horenshtien is Global Technical Product Support Manager at DataRobot. She plays a vital role as the lead developer of DataRobot’s technical market story and works closely with product, marketing and sales. As a former Customer Facing Data Scientist at DataRobot, Atalia worked with clients across industries as a trusted AI advisor, solving complex data science problems and helping them unlock business value across the organization.

Whether speaking to customers and partners or presenting at industry events, she helps support the DataRobot story and show how AI/ML is being adopted across the organization using the DataRobot platform. She has spoken on topics such as MLOps, time series forecasting, sports projects, and use cases from various industries at industry events including AI Summit NY, AI Summit Silicon Valley, and the Marketing AI Conference (MAICON), at partner events such as Snowflake Summit and Google Next, and in masterclasses, joint webinars, and more.

Atalia holds a Bachelor of Science in Industrial Engineering and Management and two graduate degrees, an MBA and one in Business Analytics.


Aslihan Buner is Senior Product Marketing Manager for AI Observability at DataRobot, where she creates and executes go-to-market strategy for LLMOps and MLOps products. She works with product management and development teams to identify key customer needs and to define and implement messaging and positioning. Her passion is targeting market gaps, addressing pain points across industries and connecting them with solutions.


Kateryna Bozhenko is Product Manager for AI Production at DataRobot, with extensive experience building AI solutions. With degrees in International Business and Healthcare Management, she is passionate about helping users make AI models work effectively to maximize ROI and experience the true magic of innovation.
